Building AI-Driven Product Teams in AgenticOps

In an AI-Driven Product environment, success is rooted in continuous improvement and guided by five core principles:

- Clarity in communication ensures agents and operators understand what to deliver and why.

- Strategic and tactical alignment in task execution connects high-level goals with day-to-day work.

- Observability in performance enables continuous measurement, learning, and improvement.

- Explainability ensures we can interpret and trust deliverables.

- Consistency in deliverables builds client trust and increases the value delivered.

The journey for AI-Driven Product Teams progresses through three layers of maturity towards AgenticOps: AI-Assisted Development, Agent Development, and Agentic Delivery.

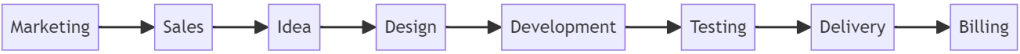

By Product Team, I mean a team that delivers a digital, data, AI, or IoT product that requires both design and code.

1. AI-Assisted Development

This foundational stage focuses on training both agents and operators. The operator collaborates with their agent assistants by crafting precise prompts to direct workflows, break work items into actionable steps, and improve deliverables.

Agent Role

- Act as specialized junior team members (e.g., marketer, developer, QA analyst, DevOps engineer, data scientist).

- Execute prompts and produce deliverables for operator review.

Operator Role

- Maintain control over workflows and agent task assignment, ensuring clarity in prompts and alignment with strategic and tactical goals.

- Measure performance based on value-added time and deliverable ratings, reviews, and scores (e.g., stars, thumbs up/down, percentages).

- Collaborate with the team to refine and solidify agent prompts, data, fine-tuning, training, workflows, policies, and templates.
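
Ratings, reviews, and scores arrive on different scales, so it can help to put them on one scale before comparing deliverables. Here is a minimal sketch of one way to do that. The `Rating` class, the `kind` labels, and the 0.0 to 1.0 scale are assumptions made for illustration, not part of any specific tool.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Rating:
    """One piece of operator feedback (hypothetical structure)."""
    kind: str     # assumed labels: "stars", "thumbs", or "percent"
    value: float  # 1-5 for stars, 0 or 1 for thumbs, 0-100 for percent


def normalize(rating: Rating) -> float:
    """Map a rating onto a common 0.0-1.0 scale."""
    if rating.kind == "stars":
        return (rating.value - 1) / 4   # 1 star -> 0.0, 5 stars -> 1.0
    if rating.kind == "thumbs":
        return 1.0 if rating.value else 0.0
    if rating.kind == "percent":
        return rating.value / 100
    raise ValueError(f"unknown rating kind: {rating.kind}")


def deliverable_score(ratings: list[Rating]) -> float:
    """Average the normalized ratings for a single deliverable."""
    return mean(normalize(r) for r in ratings)
```

A plain average treats every rating as equally important. A team might prefer to weight some reviewers or rating types more heavily, which this sketch does not attempt.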

Goals

- Train operators and agents to produce high-value deliverables with consistency and precision.

- Build confidence in agent outputs by ensuring explainability of results and observability in performance.

- Lay the foundation for continuous improvement through feedback and measurable progress.

Outcome

AI-Assisted Development serves as the training ground, where agents learn and improve while operators refine their ability to prompt, evaluate, and lead agents.

2. Agent Development

At this stage, agents gain more autonomy, handling complete work items while maintaining alignment with operator-defined criteria. They focus on producing high-value deliverables efficiently and improving their ratings, reviews, and scores.

Agent Role

- Execute work items independently, adhering to prompts and defined workflows.

- Strive for explainability in deliverables to build operator trust.

- Actively improve through operator feedback, targeting higher ratings, better reviews, and higher scores.

Operator Role

- Shift from managing tasks to guiding agents and evaluating outcomes.

- Monitor and analyze performance metrics (e.g., flow time, throughput, and value-added time).

- Collaborate with the team to optimize workflows, policies, and templates.
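
The metrics named above can be computed from a few timestamps per work item. The sketch below assumes each item records when it started, when it finished, and how much of that time was value-added. The `WorkItem` fields and function names are hypothetical, and flow efficiency (value-added time divided by flow time) is a common lean ratio added here for illustration rather than something this framework prescribes.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class WorkItem:
    """A completed work item (hypothetical structure)."""
    started: datetime
    finished: datetime
    value_added: timedelta  # time actively spent working on the item


def flow_time(item: WorkItem) -> timedelta:
    """Elapsed time from start to finish, including waiting."""
    return item.finished - item.started


def average_flow_time(items: list[WorkItem]) -> timedelta:
    return sum((flow_time(i) for i in items), timedelta()) / len(items)


def throughput_per_day(items: list[WorkItem], window_days: float) -> float:
    """Completed items per day over an observation window."""
    return len(items) / window_days


def flow_efficiency(items: list[WorkItem]) -> float:
    """Share of total flow time that was value-added (0.0-1.0)."""
    total_value_added = sum((i.value_added for i in items), timedelta())
    total_flow = sum((flow_time(i) for i in items), timedelta())
    return total_value_added / total_flow
```

Tracking these per agent and per workflow gives operators a baseline to compare against after each change to prompts, policies, or templates.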

Goals

- Deliver predictable, high-value outputs while minimizing operator intervention.

- Link speed to cost and value, optimizing workflows for value, efficiency, and profitability.

- Foster a system of continuous improvement based on measurable feedback.

Outcome

Agent Development prepares agents for full autonomy by ensuring they consistently meet or exceed expectations in value, quality, and speed.
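
One way to make "consistently meet or exceed expectations" concrete is a simple readiness check over an agent's most recent scores. This is a sketch only: the function name, the 0.8 target, and the five-item window are assumptions for illustration, and a real check would likely track value, quality, and speed separately rather than a single score.

```python
def ready_for_autonomy(scores: list[float],
                       target: float = 0.8,
                       window: int = 5) -> bool:
    """Return True if each of the last `window` scores meets the target.

    `scores` are normalized 0.0-1.0 deliverable scores, oldest first.
    The thresholds are placeholders, not recommended values.
    """
    recent = scores[-window:]
    # Require a full window so a short history cannot pass by default.
    return len(recent) == window and all(s >= target for s in recent)
```

Requiring every recent score to pass, rather than the average, reflects the emphasis on consistency: one strong result cannot offset a weak one.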

3. Agentic Delivery

In this stage, agents achieve the agentic state with full autonomy. They independently manage work items from a queue and produce high-value deliverables aligned with strategic goals, with minimal operator oversight.

Agent Role

- Own the entire lifecycle of a work item, from planning to execution and delivery.

- Ensure deliverables are explainable and align with strategic and tactical objectives.

- Continuously improve performance by adapting to feedback and refining workflows.

Operator Role

- Define high-level goals, vision, and success criteria.

- Monitor performance metrics and provide directional guidance only when necessary.

- Focus on innovation and strategy while refining policies and templates to scale operations.
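
The division of labor described above can be pictured as a loop: the agent pulls items from a queue and delivers them, and the operator is involved only when an item needs guidance. The sketch below assumes the agent reports a confidence value for each item and that low confidence triggers escalation. The `run_queue` function, the `agent` callable, and the 0.8 threshold are all hypothetical.

```python
from collections import deque
from dataclasses import dataclass
from typing import Callable


@dataclass
class WorkItem:
    """A unit of work in the queue (hypothetical structure)."""
    title: str
    status: str = "queued"


def run_queue(queue: deque,
              agent: Callable[[WorkItem], float],
              min_confidence: float = 0.8) -> list[WorkItem]:
    """Let the agent work through the queue; return items needing an operator.

    `agent` stands in for whatever plans, executes, and delivers an item,
    and is assumed to return a 0.0-1.0 confidence in its own result.
    """
    escalated = []
    while queue:
        item = queue.popleft()
        confidence = agent(item)
        if confidence >= min_confidence:
            item.status = "delivered"
        else:
            # Operator gives directional guidance only when necessary.
            item.status = "escalated"
            escalated.append(item)
    return escalated
```

Self-reported confidence is only one possible escalation signal. Failed checks, policy violations, or low scores on similar past items could serve the same purpose.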

Goals

- Achieve consistent, predictable, and explainable high-value deliverables.

- Scale operations efficiently, reducing reliance on human intervention.

- Build a self-sustaining system of AgenticOps that continuously improves.

Outcome

Agentic Delivery transforms agents into trusted, autonomous team members capable of delivering measurable value at scale. Operators focus on strategic priorities while agents handle execution.

Continuous Improvement and Explainability

The path from AI-Assisted Development to Agentic Delivery is defined by continuous improvement and explainability. Agents are motivated to enhance their deliverables by earning higher ratings, reviews, and scores, while operators ensure clarity and alignment through refined workflows and templates.

By observing and explaining performance, operators and teams build trust in agent outputs. This system fosters a reliable, scalable process where agents evolve into autonomous contributors that consistently produce high-value deliverables with measurable impact.

This is far easier said than done, and the devil is in the details, but it provides a framework for achieving AgenticOps.

Where are you in your journey with AI Agents? I’m here if you want to talk more about taking your first step or stepping into the agentic state.