Governing Agent Boundaries in .NET. Not Agents.

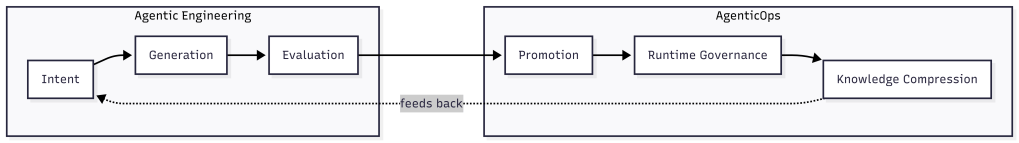

Post 9 of the AgenticOps series argued that agent sprawl governance starts at the boundary, not the agent. This post implements that claim in a .NET stack: C#, Microsoft Agent Framework, ML.NET, PostgreSQL, and Vue.js.

—

The Problem

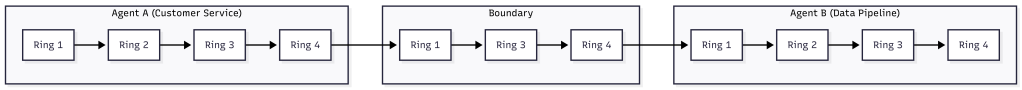

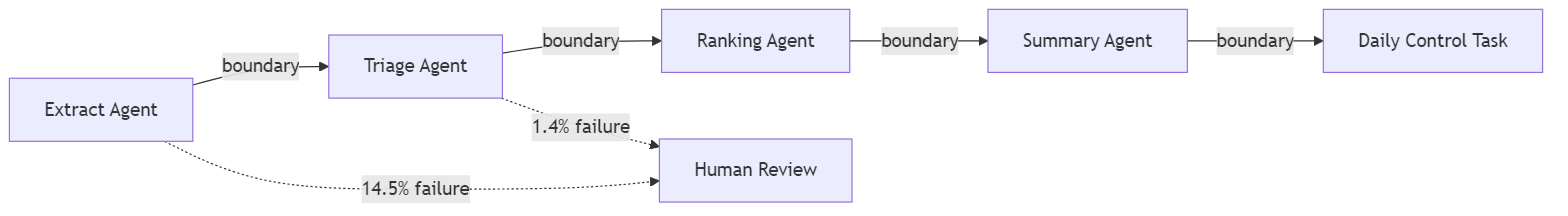

A .NET platform grows agents organically. A triage agent classifies inbound work items. A ranking agent scores them by priority. A summarization agent compresses context for the daily control task. An extraction agent pulls candidate work items from external signals. Each agent is individually reasonable. Same team, same framework, same infrastructure. And none of them have governed boundaries between them.

The triage agent writes a classification. The ranking agent reads it. What validates the handoff? Nothing. Ranking trusts whatever triage wrote. If triage hallucinates a category that does not exist in the scoring model, ranking silently produces garbage scores. The failure is invisible because both agents completed successfully. The boundary between them had no ring.

This compounds with every agent added. Five agents with four boundaries and internal governance is not five governed agents. It is an ungoverned system that happens to have five well-scoped components.

—

Why It Breaks

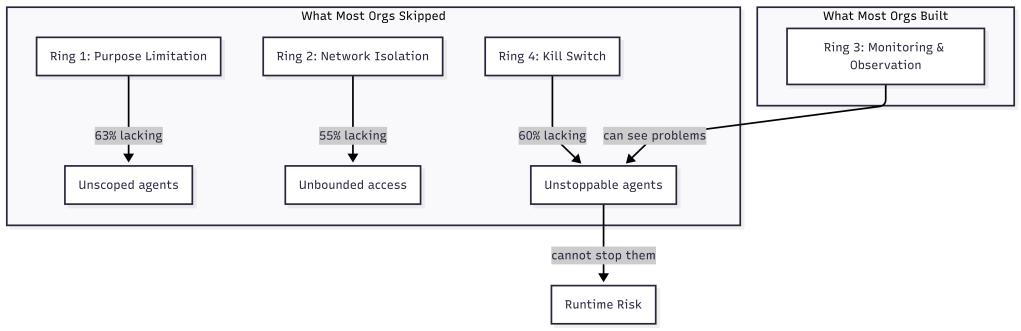

Microsoft Agent Framework makes it easy to define agents with clean internal governance. The AgentChat orchestration model handles turn-taking, tool invocation, and termination conditions. The framework governs the interior of each agent. It says nothing about what happens between agents when one agent’s output becomes another agent’s input outside of a chat.

In a typical .NET implementation, the handoff looks like this.

```csharp
// Triage agent writes result to the database
var classification = await triageAgent.InvokeAsync(workItem);
await db.WorkItems.UpdateClassification(workItem.Id, classification);

// Ranking agent reads the result and scores
var ranked = await rankingAgent.InvokeAsync(workItem);
await db.WorkItems.UpdateScore(workItem.Id, ranked.Score);
```

Both agents are governed internally. Triage has scoped tools and a defined prompt. Ranking has its own tool set and scoring model. But the handoff, the moment classification leaves triage and enters ranking, is raw. No schema validation. No ring. No gate. If the triage agent returns an unexpected classification, the ranking agent consumes it without complaint.

Agent frameworks govern agent interiors. Boundary governance is the developer’s responsibility. When nobody builds it, the boundaries are open by default. Each new agent adds new boundaries. The governance gap grows with the agent count.

ML.NET adds a second dimension. A trained model that scores work item priority is deterministic given its inputs. But when those inputs come from an upstream stochastic agent, the deterministic model inherits the upstream variance. Garbage classification in, confidently wrong score out. The ML.NET model cannot tell you its inputs were hallucinated. It will score them with the same confidence as valid inputs.

This Looks Like RPA Orchestration. It Is Not.

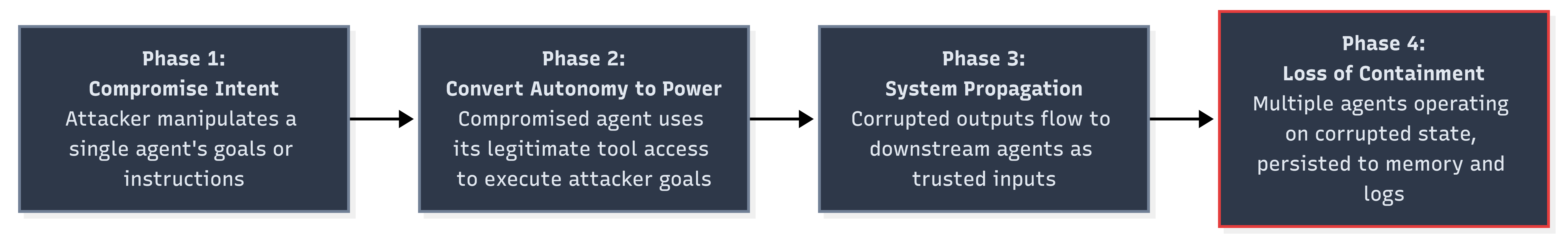

The pattern of contract, validate, route is decades old. ESBs enforced message schemas between services. RPA orchestration platforms validated handoffs between bots. API gateways check request payloads against OpenAPI specs. If the fix is “validate data at the boundary,” enterprise middleware solved this twenty years ago. So what is different?

The difference is what crosses the boundary.

An RPA bot is deterministic. Bot A always returns the same shape with the same value space. If the schema passes, the content is correct. The bot does not invent new categories. It does not return a structurally valid payload containing a value it fabricated. Schema validation is sufficient because the output space is closed. Every possible output is known at design time.

An AI agent is stochastic. The triage agent can return a structurally valid JSON object with a category field that contains a value no one anticipated. The schema passes. The JSON is well-formed. The category is a string. But the string is “enhancement” and the downstream scoring model has never seen that value. Schema validation caught nothing because the violation is semantic, not structural.

This is why the boundary contract checks three things instead of one. Structure: does the payload match the schema? Domain: is the content within the known value space? Confidence: does the source agent trust its own output enough to skip human review? RPA boundaries only needed the first check. Agent boundaries need all three because the output space is open.
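To make the three checks concrete, here is a minimal sketch of them applied in order to a raw JSON payload. The `Classification` record, the `ThreeCheckSketch` class, and the `Check` method are hypothetical names for illustration, and the category list and 0.6 threshold are assumed to mirror the values used later in this post.

```csharp
using System;
using System.Collections.Generic;
using System.Text.Json;

// Hypothetical payload shape for illustration; the real classification type may differ.
public record Classification(string Category, double Confidence);

public static class ThreeCheckSketch
{
    // Assumed value space and threshold, mirroring the contract shown later in this post.
    private static readonly HashSet<string> KnownCategories =
        new() { "bug", "feature", "chore", "spike", "incident" };

    private const double MinConfidence = 0.6;

    private static readonly JsonSerializerOptions Options =
        new() { PropertyNameCaseInsensitive = true };

    // Returns the name of the first check that fails, or "ok" when all three pass.
    public static string Check(string json)
    {
        try
        {
            // 1. Structure: is this well-formed JSON of the expected shape?
            var parsed = JsonSerializer.Deserialize<Classification>(json, Options);
            if (parsed?.Category is null) return "structure";

            // 2. Domain: is the value inside the known value space?
            if (!KnownCategories.Contains(parsed.Category)) return "domain";

            // 3. Confidence: does the source agent trust its own output enough?
            if (parsed.Confidence < MinConfidence) return "confidence";

            return "ok";
        }
        catch (JsonException)
        {
            // Malformed JSON or a type mismatch is a structural failure.
            return "structure";
        }
    }
}
```

A payload such as `{"category":"enhancement","confidence":0.9}` deserializes cleanly, so a structure-only check would pass it. In this sketch it stops at the domain check instead.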

The confidence check is the sharpest difference. RPA bots do not have confidence scores because they do not make probabilistic decisions. They execute scripts. An AI agent that classifies a work item with 0.52 confidence is telling you it is nearly guessing. That signal exists at the boundary and nowhere else. If you do not check it there, the downstream system consumes a guess as a fact.

The infrastructure pattern is old. The failure mode it defends against is new. Deterministic boundaries protect against malformed data. Stochastic boundaries protect against plausible hallucinations. The plumbing looks the same. The threat model is fundamentally different.

—

The Fix

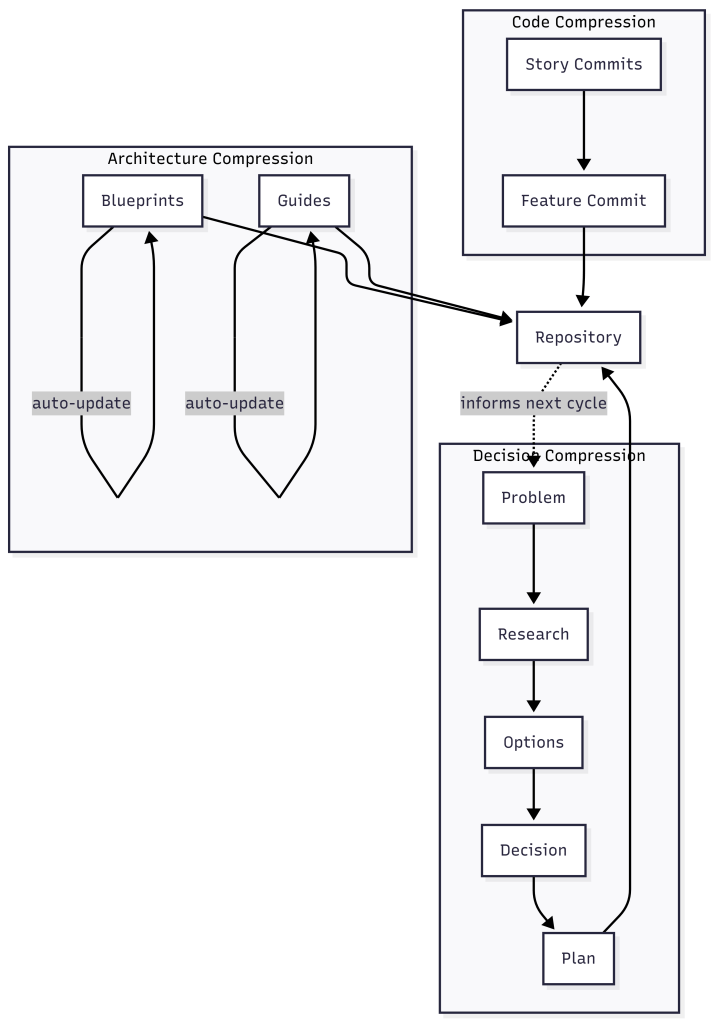

The fix is a boundary contract enforced at every handoff between agents. The contract checks structure, domain, and confidence. In .NET, this is an interface and a middleware pattern.

The Boundary Contract

Every agent-to-agent handoff passes through a boundary. A boundary has a schema, a validator, and a log entry.

```csharp
public interface IBoundaryContract<T>
{
    string SourceAgent { get; }
    string TargetAgent { get; }
    JsonSchema Schema { get; }
    BoundaryResult<T> Validate(T payload);
}

public record BoundaryResult<T>(
    bool IsValid,
    T Payload,
    string[] Violations,
    DateTimeOffset Timestamp,
    string SourceAgent,
    string TargetAgent);
```

The schema is not optional and not advisory. It is a JSON Schema definition that the payload must satisfy before the target agent receives it. The validator checks structural compliance, domain constraints, and known invalid states.

```csharp
public class TriageToRankingContract : IBoundaryContract<WorkItemClassification>
{
    public string SourceAgent => "triage-agent";
    public string TargetAgent => "ranking-agent";
    public JsonSchema Schema => WorkItemClassification.JsonSchema;

    public BoundaryResult<WorkItemClassification> Validate(
        WorkItemClassification payload)
    {
        var violations = new List<string>();

        if (!KnownCategories.Contains(payload.Category))
            violations.Add(
                $"Unknown category '{payload.Category}'. " +
                $"Valid: {string.Join(", ", KnownCategories)}");

        if (payload.Confidence < 0.0 || payload.Confidence > 1.0)
            violations.Add(
                $"Confidence {payload.Confidence} outside [0.0, 1.0]");

        if (payload.Confidence < MinConfidenceThreshold)
            violations.Add(
                $"Confidence {payload.Confidence} below threshold " +
                $"{MinConfidenceThreshold}. Requires human review.");

        return new BoundaryResult<WorkItemClassification>(
            IsValid: violations.Count == 0,
            Payload: payload,
            Violations: violations.ToArray(),
            Timestamp: DateTimeOffset.UtcNow,
            SourceAgent: SourceAgent,
            TargetAgent: TargetAgent
        );
    }

    private static readonly HashSet<string> KnownCategories = new()
    {
        "bug", "feature", "chore", "spike", "incident"
    };

    private const double MinConfidenceThreshold = 0.6;
}
```

Whether a HashSet is the right type here, compared with something like an enum, is debatable, but that is beside the point. The validator catches two failure modes. First, domain violations, where the triage agent returns a category the scoring model does not recognize. Second, confidence violations, where the agent classified the work item but with low confidence, or reported a confidence outside [0.0, 1.0]. Low confidence means the classification should route to a human instead of flowing automatically to ranking.

The Boundary Middleware

The handoff code changes from a direct call to a governed crossing.

```csharp
public class BoundaryGate<T>
{
    private readonly IBoundaryContract<T> _contract;
    private readonly IBoundaryLog _log;

    public BoundaryGate(IBoundaryContract<T> contract, IBoundaryLog log)
    {
        _contract = contract;
        _log = log;
    }

    public async Task<BoundaryResult<T>> CrossAsync(T payload)
    {
        var result = _contract.Validate(payload);

        await _log.RecordCrossingAsync(new BoundaryCrossing
        {
            SourceAgent = result.SourceAgent,
            TargetAgent = result.TargetAgent,
            Timestamp = result.Timestamp,
            IsValid = result.IsValid,
            Violations = result.Violations,
            PayloadHash = ComputeHash(payload)
        });

        return result;
    }
}
```
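The gate depends on `IBoundaryLog` and `BoundaryCrossing`, which this post does not show. A minimal sketch of what they might look like follows, with the shapes inferred only from how the gate uses them. `InMemoryBoundaryLog` is a hypothetical stand-in for tests, not the PostgreSQL-backed log.

```csharp
using System;
using System.Collections.Concurrent;
using System.Collections.Generic;
using System.Threading.Tasks;

// Assumed shape, inferred from the properties BoundaryGate sets; the real type may differ.
public class BoundaryCrossing
{
    public string SourceAgent { get; init; } = "";
    public string TargetAgent { get; init; } = "";
    public DateTimeOffset Timestamp { get; init; }
    public bool IsValid { get; init; }
    public string[] Violations { get; init; } = Array.Empty<string>();
    public string PayloadHash { get; init; } = "";
}

// Assumed interface: the gate only needs a single async write.
public interface IBoundaryLog
{
    Task RecordCrossingAsync(BoundaryCrossing crossing);
}

// Hypothetical in-memory log for unit tests; a production implementation
// would insert a row into the boundary_crossings table instead.
public class InMemoryBoundaryLog : IBoundaryLog
{
    private readonly ConcurrentQueue<BoundaryCrossing> _crossings = new();

    public IReadOnlyCollection<BoundaryCrossing> Crossings => _crossings;

    public Task RecordCrossingAsync(BoundaryCrossing crossing)
    {
        _crossings.Enqueue(crossing);
        return Task.CompletedTask;
    }
}
```

Keeping the log behind an interface means the gate can be tested without a database, and the test can assert that invalid crossings are recorded as well as valid ones.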

The calling code now looks like this.

```csharp
var classification = await triageAgent.InvokeAsync(workItem);

var gate = new BoundaryGate<WorkItemClassification>(
    new TriageToRankingContract(), boundaryLog);

var crossing = await gate.CrossAsync(classification);

if (!crossing.IsValid)
{
    await humanReviewQueue.EnqueueAsync(workItem, crossing.Violations);
    return;
}

await db.WorkItems.UpdateClassification(workItem.Id, crossing.Payload);
var ranked = await rankingAgent.InvokeAsync(workItem);
```

Invalid crossings route to a human review queue instead of silently propagating. The ranking agent never sees input that failed the boundary contract. Ring 1, constrain inputs, is now structural at the handoff.

Using the payload’s hash as the payload’s identity distracted me because I worried about uniqueness, but that is beside the point.
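The gate calls `ComputeHash`, which is not shown in this post. One plausible sketch is a SHA-256 digest over the payload's JSON serialization. `PayloadHasher` is a hypothetical name, and the choice of SHA-256 over System.Text.Json output is an assumption, not necessarily what the original code does.

```csharp
using System;
using System.Security.Cryptography;
using System.Text.Json;

public static class PayloadHasher
{
    // Hypothetical helper: hashes the JSON form of the payload.
    // Assumes the payload type serializes the same way every time for the
    // same values, which is worth verifying for your own types.
    public static string ComputeHash<T>(T payload)
    {
        byte[] json = JsonSerializer.SerializeToUtf8Bytes(payload);
        byte[] digest = SHA256.HashData(json);
        return Convert.ToHexString(digest).ToLowerInvariant();
    }
}
```

On the uniqueness worry: under this sketch the hash identifies content, not a crossing. Two identical payloads produce the same hash by design, and the `id` column in the log is what identifies an individual crossing.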

There is so much to think about here, but even this is better than nothing.

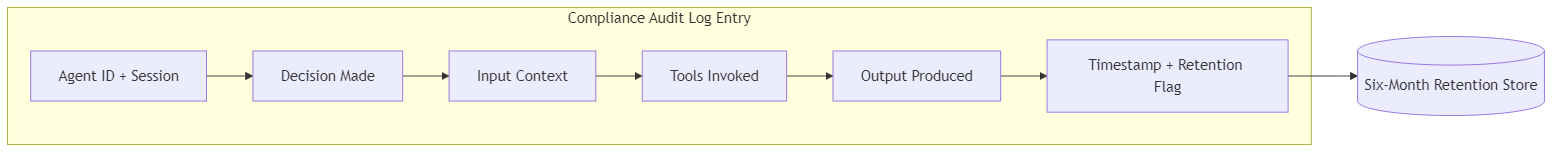

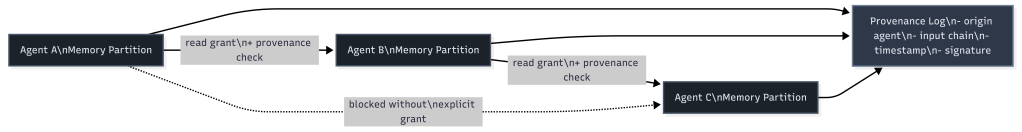

The Boundary Log in PostgreSQL

Every crossing is recorded. Valid and invalid. The log serves two purposes: operational debugging and governance audit.

```sql
CREATE TABLE boundary_crossings (
    id            BIGINT GENERATED ALWAYS AS IDENTITY PRIMARY KEY,
    source_agent  TEXT NOT NULL,
    target_agent  TEXT NOT NULL,
    crossed_at    TIMESTAMPTZ NOT NULL DEFAULT now(),
    is_valid      BOOLEAN NOT NULL,
    violations    TEXT[],
    payload_hash  TEXT NOT NULL,
    session_id    UUID
);

CREATE INDEX ix_crossings_agents
    ON boundary_crossings (source_agent, target_agent, crossed_at);

CREATE INDEX ix_crossings_invalid
    ON boundary_crossings (crossed_at)
    WHERE NOT is_valid;
```

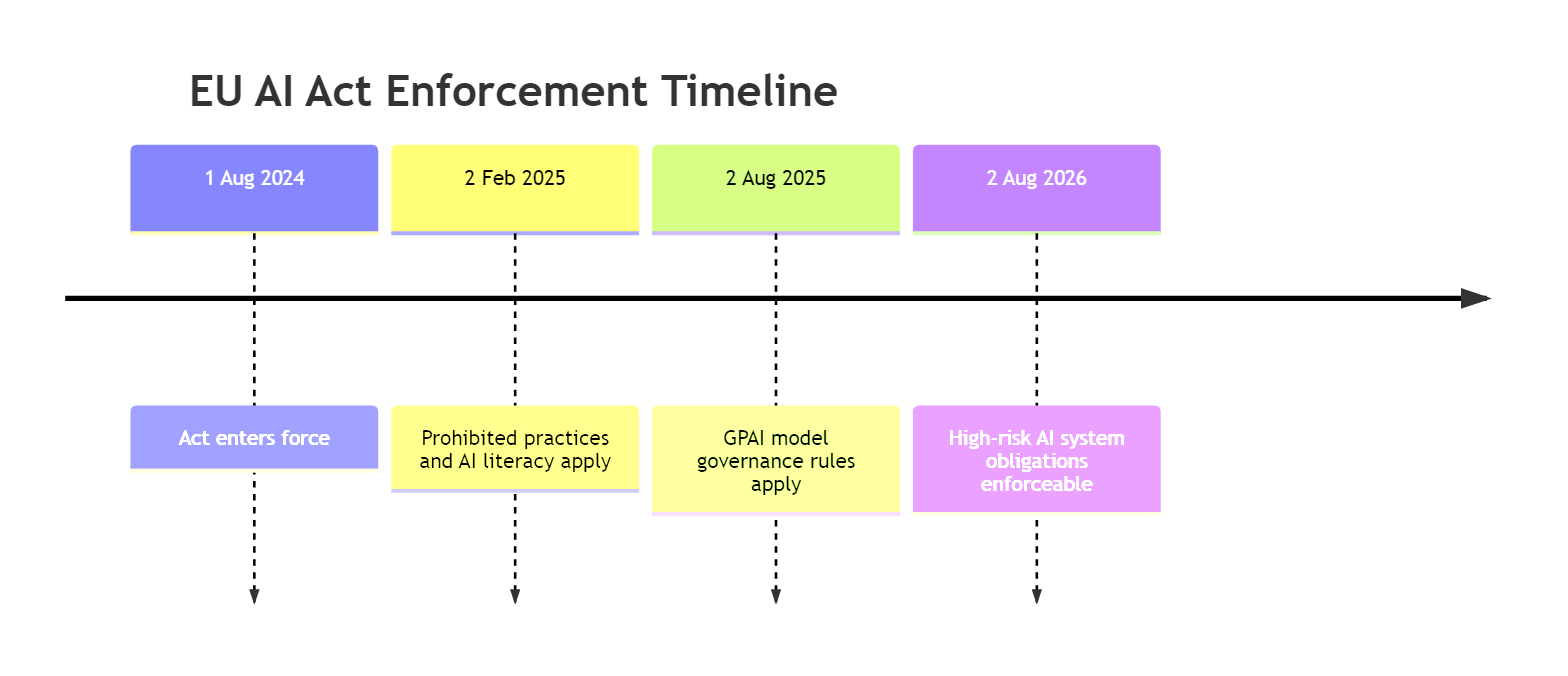

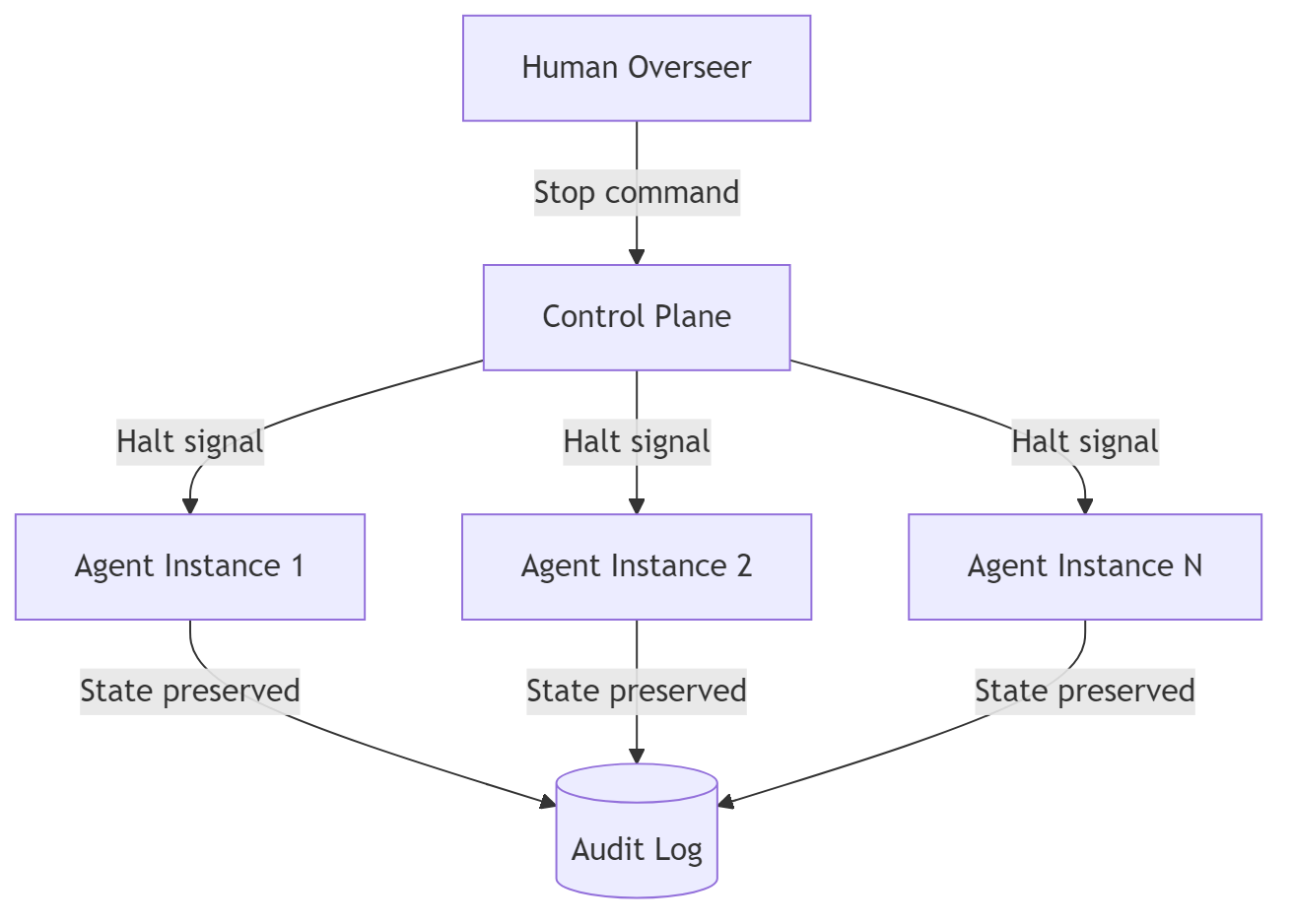

The payload_hash avoids storing raw payloads in the log while preserving traceability. The partial index on invalid crossings makes it cheap to query failure patterns. A retention policy keeps six months of data, which aligns with the audit log requirements from “The EU Says You Need a Kill Switch by August.”

The Boundary Dashboard in Vue.js

A governance system that only engineers can read is not governance. It is logging. The Vue.js dashboard surfaces boundary health to anyone who needs to see it.

```text
+---------------------------------------------------+
| Agent Boundary Health                             |
+------------------+----------+---------+-----------+
| Boundary         | Last 24h | Invalid | Rate      |
+------------------+----------+---------+-----------+
| triage > ranking | 847      | 12      | 1.4%      |
| ranking > summary| 835      | 3       | 0.4%      |
| extract > triage | 214      | 31      | 14.5%     |
| summary > daily  | 412      | 0       | 0.0%      |
+------------------+----------+---------+-----------+
```

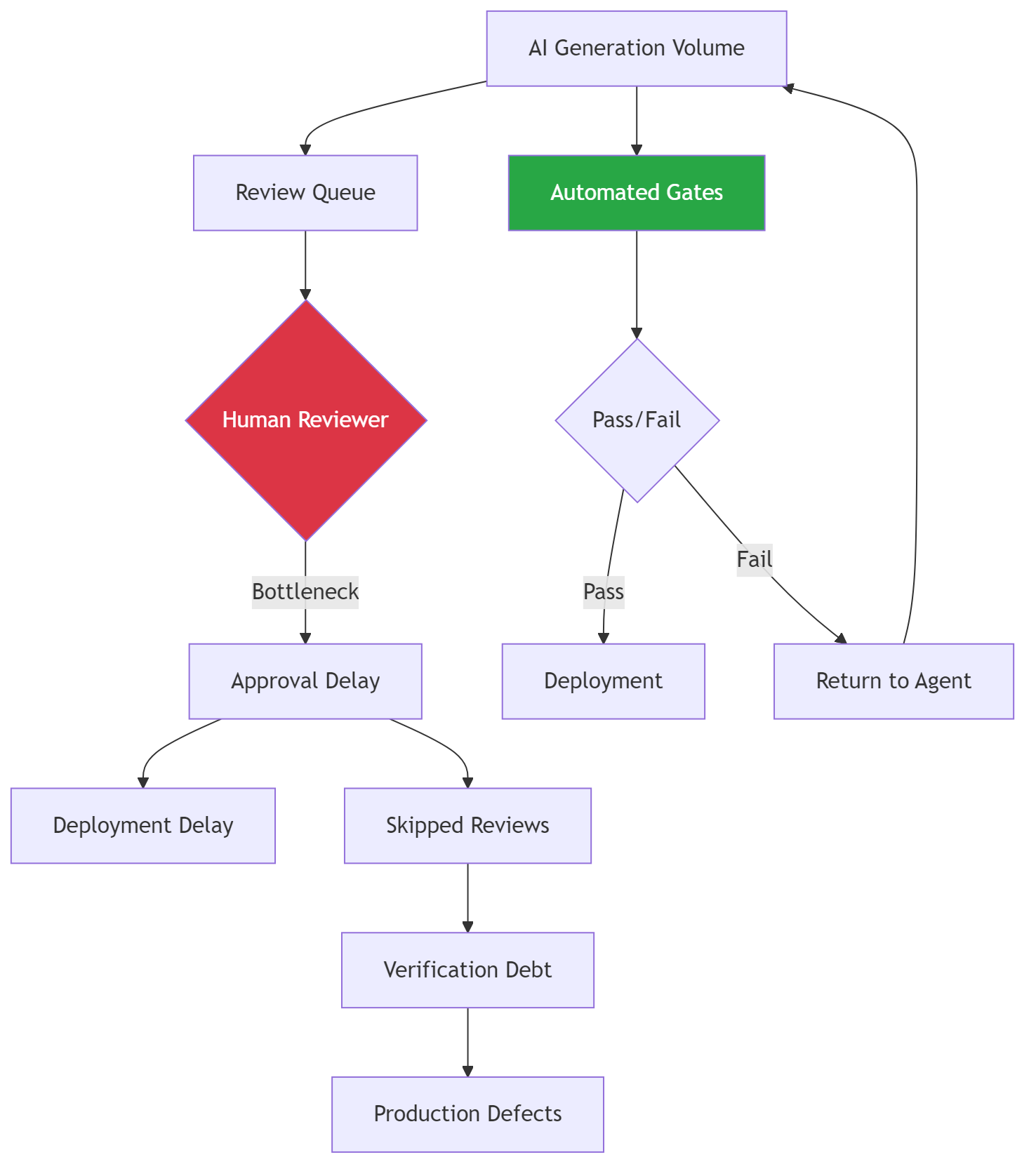

Look at that extract-to-triage boundary. 14.5% failure rate. That means the extraction agent is producing work items that triage cannot classify within its known categories. Without boundary governance, those items would flow silently through the system and produce meaningless scores. With boundary governance, they route to human review and the failure rate is visible.

The dashboard queries the boundary_crossings table through a simple API endpoint. No new infrastructure. PostgreSQL, a .NET API, and a Vue.js component.
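The aggregation behind that endpoint is small. Here is a sketch of it as in-memory LINQ so the logic is easy to test. In practice the same grouping would likely run as a SQL query against boundary_crossings. The `Crossing` and `BoundaryHealth` records and the `BoundaryHealthReport.Summarize` method are hypothetical names for illustration.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical row shape: the columns of boundary_crossings the dashboard needs.
public record Crossing(
    string SourceAgent, string TargetAgent, DateTimeOffset CrossedAt, bool IsValid);

// Hypothetical dashboard row.
public record BoundaryHealth(
    string Boundary, int Total, int Invalid, double InvalidRatePercent);

public static class BoundaryHealthReport
{
    // Groups crossings inside the window by (source, target) and computes the
    // invalid rate per boundary, worst boundary first.
    public static IReadOnlyList<BoundaryHealth> Summarize(
        IEnumerable<Crossing> crossings, DateTimeOffset now, TimeSpan window)
    {
        var cutoff = now - window;

        return crossings
            .Where(c => c.CrossedAt >= cutoff && c.CrossedAt <= now)
            .GroupBy(c => (c.SourceAgent, c.TargetAgent))
            .Select(g =>
            {
                int total = g.Count();
                int invalid = g.Count(c => !c.IsValid);
                return new BoundaryHealth(
                    $"{g.Key.SourceAgent} > {g.Key.TargetAgent}",
                    total,
                    invalid,
                    Math.Round(100.0 * invalid / total, 1));
            })
            .OrderByDescending(h => h.InvalidRatePercent)
            .ToList();
    }
}
```

Sorting by invalid rate puts the boundary that most needs attention at the top, which is the point of surfacing this to non-engineers.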

The Boundary Map

The system knows its own topology because every boundary contract declares its source and target agent. The map is derived from the contracts, not maintained separately.

When a new agent is added to the system, it connects through boundary contracts. The map updates automatically because the contract declares the relationship. The topology is a property of the code, not a diagram someone maintains.
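As a rough sketch of how the map could be derived, the following builds an adjacency list from whatever contracts are registered. `IBoundaryEdge`, `Edge`, and `BoundaryMap` are hypothetical names. The sketch assumes the contracts expose their source and target through a non-generic interface, which the `IBoundaryContract<T>` shown earlier does not do as written.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical non-generic slice of a contract: only what the map needs.
public interface IBoundaryEdge
{
    string SourceAgent { get; }
    string TargetAgent { get; }
}

// Simple edge used here in place of real contract instances.
public record Edge(string SourceAgent, string TargetAgent) : IBoundaryEdge;

public static class BoundaryMap
{
    // Builds source agent -> sorted, distinct target agents.
    public static IReadOnlyDictionary<string, IReadOnlyList<string>> Build(
        IEnumerable<IBoundaryEdge> contracts)
    {
        return contracts
            .GroupBy(c => c.SourceAgent)
            .ToDictionary(
                g => g.Key,
                g => (IReadOnlyList<string>)g
                    .Select(c => c.TargetAgent)
                    .Distinct()
                    .OrderBy(t => t, StringComparer.Ordinal)
                    .ToList());
    }
}
```

If the contracts are registered in a dependency injection container, the input could be the full set of registered contracts, so adding a contract is the only step needed to add an edge.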

ML.NET at the Boundary

The ranking agent uses an ML.NET model to score work items. The model is deterministic. Its inputs are not. A second boundary contract, between the ranking agent and the model it calls, protects the model from upstream stochastic drift by rejecting inputs the model was not trained to handle.

```csharp
public class RankingModelBoundary : IBoundaryContract<ScoringInput>
{
    public string SourceAgent => "ranking-agent";
    public string TargetAgent => "ml-scoring-model";

    // Required by IBoundaryContract<T>. ScoringInput.JsonSchema is assumed to
    // exist by analogy with WorkItemClassification.JsonSchema.
    public JsonSchema Schema => ScoringInput.JsonSchema;

    // TrainedCategories (definition not shown) is the set of category labels
    // present in the model's training data.
    public BoundaryResult<ScoringInput> Validate(ScoringInput payload)
    {
        var violations = new List<string>();

        if (!TrainedCategories.Contains(payload.Category))
            violations.Add(
                $"Category '{payload.Category}' not in training set. " +
                "Model output will be unreliable.");

        if (payload.FeatureVector.Any(float.IsNaN))
            violations.Add("Feature vector contains NaN values.");

        return new BoundaryResult<ScoringInput>(
            violations.Count == 0,
            payload,
            violations.ToArray(),
            DateTimeOffset.UtcNow,
            SourceAgent,
            TargetAgent);
    }
}
```

This is Ring 1 applied to a deterministic component. The ML.NET model does not need containment in the stochastic sense. It needs input validation that accounts for the stochastic source of its inputs. The boundary contract is where that validation lives.

—

Stories from Production

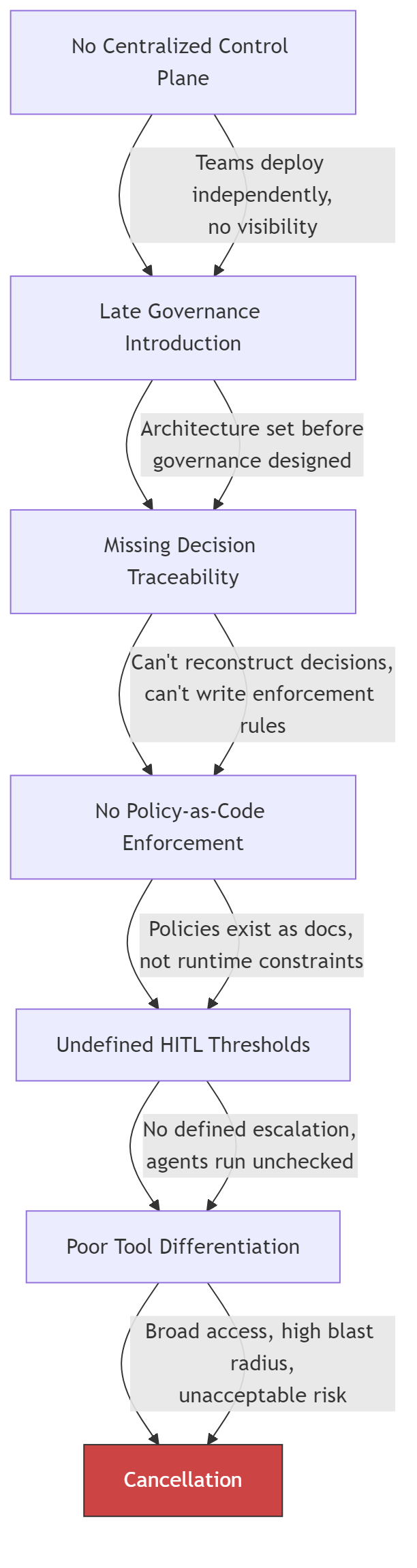

Five Agents, Four Boundaries, Zero Rings (Framework Vision)

A .NET platform runs five agents built with Microsoft Agent Framework. Triage, ranking, summarization, extraction, and a daily control task orchestrator. Each agent was built with scoped tools, clear prompts, and individual test coverage. The team follows agentic engineering practices. Every agent is well-governed internally.

After three months, the team notices the daily control task occasionally surfaces work items with nonsensical priority scores. The ranking model scored a work item at 0.97 priority, but the item was a routine documentation update. Investigation reveals that the triage agent classified it as an “incident” with 0.52 confidence. The classification was wrong but above the implicit threshold of “the model returned something.” The ranking model scored it as a high-priority incident because that is what the classification said.

The fix takes ten minutes. A boundary contract between triage and ranking that rejects classifications below 0.6 confidence and routes them to human review. The investigation to find the root cause took three days because nothing in the system flagged the boundary as the failure point. Every agent completed successfully. The logs showed normal operations. The failure was invisible because it lived between agents, not inside them.

The team adds boundary contracts to all four handoffs in one sprint. The extract-to-triage boundary immediately reveals that 14% of extracted work items cannot be classified. That failure rate was invisible before. Those items had been silently flowing through the system producing low-confidence classifications that the ranking model consumed without question. (Framework Vision)

The Boundary That Caught a Model Drift (Framework Vision)

Six months after deploying boundary contracts, the triage-to-ranking boundary failure rate increases from 1.4% to 8.2% over two weeks. The dashboard surfaces the trend. The violations are all the same: “Unknown category 'enhancement'.”

The triage agent’s upstream model was updated. The new version learned a category the scoring model was never trained on. Without the boundary contract, every “enhancement” classification would flow to the ranking model and receive a meaningless score. With the contract, every “enhancement” routes to human review and the failure rate spike is visible on the dashboard the day it starts.

The fix is straightforward. Retrain the ML.NET scoring model to include the “enhancement” category, then update the boundary contract’s known categories list. The boundary caught a model drift that would have silently degraded output quality for weeks. (Framework Vision)

When the Boundary Itself Is Wrong

Boundaries will have bugs. A contract that rejects a valid category or sets a confidence threshold too high will route good work items to human review. At low volume that is a nuisance. At hundreds of crossings per hour it is a bottleneck that looks like a system failure.

Recovery speed matters more than prevention here. The crossing log records every rejection with the violation reason and payload hash. When you discover a contract was wrong, you query the log for every item that hit that specific violation, fix the contract, and replay them through the updated gate. The human review queue still holds the items because they were routed, not dropped.

The pattern this post describes does not include an automated replay mechanism. That is deliberate. Replay is a recovery operation that should be explicit, auditable, and triggered by a human who understands what the contract change means. But the log makes it possible. Without the log, a bad boundary contract means lost work. With the log, it means delayed work. Time to resolve is the metric that separates a governance system from a governance obstacle.
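For illustration only, a human-triggered replay could look something like the sketch below. It takes the items still sitting in the review queue, picks the ones rejected for one specific violation, and re-validates them against the corrected contract. `QueuedItem`, `ReplayOutcome`, and `ManualReplay` are hypothetical names, and the `validate` delegate stands in for the updated contract's check.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical shapes for illustration only.
public record QueuedItem<T>(T Payload, string[] Violations);

public record ReplayOutcome<T>(
    IReadOnlyList<T> Released, IReadOnlyList<QueuedItem<T>> StillHeld);

public static class ManualReplay
{
    // validate: the corrected contract's check, returning violations (empty means valid).
    // Only items whose original rejection starts with violationPrefix are re-checked;
    // everything else stays in the queue untouched.
    public static ReplayOutcome<T> Replay<T>(
        IEnumerable<QueuedItem<T>> queue,
        string violationPrefix,
        Func<T, string[]> validate)
    {
        var released = new List<T>();
        var held = new List<QueuedItem<T>>();

        foreach (var item in queue)
        {
            bool affected = item.Violations.Any(
                v => v.StartsWith(violationPrefix, StringComparison.Ordinal));

            if (!affected)
            {
                held.Add(item);
                continue;
            }

            var violations = validate(item.Payload);
            if (violations.Length == 0)
                released.Add(item.Payload);
            else
                held.Add(item with { Violations = violations });
        }

        return new ReplayOutcome<T>(released, held);
    }
}
```

The sketch returns both lists rather than mutating a queue, so the person running the replay can review what would be released before anything moves downstream.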

—

The pattern is old. Contract, validate, route, log. Enterprise middleware has done this for decades. What is new is the threat model. Deterministic systems needed schema validation. Stochastic systems need domain validation and confidence gating because the agent can produce structurally perfect output that is semantically wrong. The plumbing is familiar. The reason you need it is not.

Agent sprawl governance in .NET is not a framework feature. It is the same boundary pattern, extended for stochastic handoffs. The code is C#. The storage is PostgreSQL. The visibility is Vue.js. The principle is the same one from the main series: the unit of governance is the boundary, not the agent.

Let’s talk about it.