
Agent Sprawl Is the New Shadow IT.

A friend sent me a post about AI sprawl across enterprise tooling. The argument was that organizations are paying for the same value many times over. Some people summarize an email in Outlook, the sales team summarizes the same email in the CRM, and the PMO summarizes it again in the project management tool. Three subscriptions, three vendors, three different models delivering the same value for the same organization on the same input. The potential for waste is real.

I want to be straight with you. I think the sprawl is a maturity problem, not a design problem. Everyone is trying to find leverage with AI right now. Teams are experimenting. Departments are buying tools that solve immediate pain. Nobody coordinated because nobody knew what would work six months ago. That is not negligence. That is what early adoption looks like in every technology wave. As things settle and consolidate, the duplicate subscriptions will compress. The market is already moving that way.

But here is the part that will not consolidate on its own. Even after the vendor landscape settles, even after the organization standardizes on fewer tools, the duplicate capability problem persists at the agent level. Three different workflows that each classify a work item using three different prompts with three different confidence thresholds. Five agents that each summarize context in slightly different ways because five operators made five independent decisions about what “summarize” means. The tools consolidate. The capabilities inside them do not, because nobody governed the boundary where one agent’s output becomes another agent’s input.

Gartner predicts 40% of enterprise applications will feature task-specific AI agents by the end of 2026. For the average organization, that translates to 50 or more specialized agents. Customer service agents. Code generation agents. Data pipeline agents. Document processing agents. Scheduling agents. Each one deployed by a different team, with different tooling, different containment posture, and different governance assumptions. “They Can Watch. They Cannot Stop.” showed what happens when organizations skip the containment rings for a single class of agent. Now multiply the problem.

69% of organizations suspect their employees already use prohibited AI tools. The agents are not waiting for an enterprise rollout. They are arriving through the same channel that every previous wave of unauthorized technology used: individual teams solving immediate problems without waiting for centralized approval.

History Repeats

The enterprise technology industry has seen this before. In 2018, Robotic Process Automation promised to automate repetitive tasks without changing underlying systems. Adoption was fast. Individual departments built bots to handle invoice processing, data entry, report generation. The bots worked. The ROI was immediate and visible. Within two years, large organizations had hundreds of RPA bots running across dozens of departments with no central inventory, no shared governance, and no unified monitoring.

Unframe AI drew the comparison directly: decentralized adoption, quick wins, proliferation, fragmentation, expensive consolidation. The RPA consolidation crisis cost organizations millions and took years. Bots broke when underlying systems changed. Nobody knew which bots existed, what they accessed, or who was responsible for maintaining them. The technical debt was invisible until it was not, and by then the cleanup was more expensive than the original implementation.

Agent sprawl follows the same trajectory but compresses the timeline. RPA bots were deterministic. They did exactly what they were scripted to do, which made them fragile but predictable. AI agents are stochastic. They interpret instructions, make decisions, and adapt to context. A misbehaving RPA bot runs the wrong script. A misbehaving AI agent improvises. The blast radius per agent is larger, the number of agents is growing faster, and the governance infrastructure is thinner.

Organizational Cognitive Debt

“Your Code Works. Nobody Knows Why.” described cognitive debt as the gap between a system’s structure and a team’s understanding of that structure. Agent sprawl creates cognitive debt at the organizational level. When fifty agents operate across an enterprise, the question is not whether any individual agent is governed. The question is whether anyone can describe the complete system of agents, their interactions, their data flows, and their combined effect on the business.

Most organizations cannot. The agents were deployed independently. The customer service team chose one vendor. The engineering team built their own. The finance team embedded agents into existing SaaS tools. Each team can describe their own agents. Nobody can describe the whole. And nobody knows what happens when these agents interact. When the customer service agent updates a record, and the data pipeline agent processes that record, and the reporting agent summarizes the result, the combined behavior is an emergent property of three independent systems that were never designed to work together.

This is shadow IT at the capability level. Traditional shadow IT was about unauthorized applications. Agent sprawl is about unauthorized capabilities. An employee does not install a new application. They enable an AI feature inside an application the organization already approved. The application is sanctioned. The agent capability within it is not. The IT asset inventory shows zero unauthorized tools. The actual environment contains agents that nobody is tracking.

The Unit of Governance Is Not the Agent

CIO magazine identified three pillars for taming agent sprawl: orchestration, governance, and observability. These are correct as categories. The implementation question is where those pillars sit. If orchestration, governance, and observability are built per-agent, the organization has a governed collection of individual agents. If they are built per-boundary, the organization has a governed system.

The distinction matters because agent-level governance does not compose. Ten individually governed agents are not a governed system. They are ten systems that happen to share an organization. The interactions between agents, the data that flows from one to another, and the cumulative effect of their combined actions on business processes: none of this is captured by governing each agent in isolation.

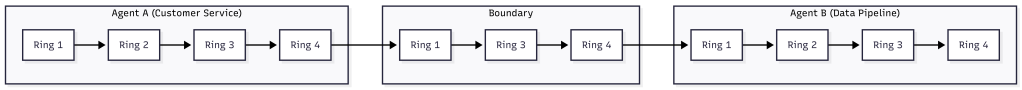

How Agents Stay in Bounds defined containment at the boundary, not the agent. Ring 1 scopes the inputs an agent receives. Ring 2 isolates the environment it runs in. Ring 3 validates its outputs. Ring 4 gates its promotion. These rings apply at every boundary in a multi-agent system, not just the boundary around each individual agent. The handoff from one agent to another is a boundary. The data flow between agent-enabled applications is a boundary. The integration point where an agent’s output becomes another agent’s input is a boundary.

Governing the boundaries means that even when a new agent appears in the ecosystem, its interactions with existing agents are already constrained. The new agent’s output passes through a boundary ring before it becomes input for another agent. The organization does not need to re-govern the entire system every time someone deploys a new agent. The boundaries hold.
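
To make the idea concrete, here is a minimal sketch of a governed handoff. Everything in it is an assumption for illustration: the `Boundary` class, the field names, and the 0.8 confidence threshold are hypothetical, not part of the AgenticOps model. The point is only that the check lives on the boundary, so a newly deployed producer gets constrained without anyone re-auditing the consumer.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Boundary:
    """Hypothetical handoff between a producing agent and a consuming agent."""
    name: str
    allowed_fields: frozenset[str]                    # what the consumer may receive
    validators: tuple[Callable[[dict], bool], ...]    # checks on the producer's output

    def hand_off(self, payload: dict) -> dict:
        # Validate the producer's output before it takes effect downstream.
        for check in self.validators:
            if not check(payload):
                raise ValueError(f"{self.name}: output rejected by {check.__name__}")
        # Scope the consumer's input: drop every field it is not allowed to see.
        return {k: v for k, v in payload.items() if k in self.allowed_fields}


def confident_enough(payload: dict) -> bool:
    # Assumed threshold; a real boundary would define its own criteria.
    return payload.get("confidence", 0.0) >= 0.8


boundary = Boundary(
    name="classifier->router",
    allowed_fields=frozenset({"ticket_id", "label"}),
    validators=(confident_enough,),
)

scoped = boundary.hand_off(
    {"ticket_id": 7, "label": "billing", "confidence": 0.93,
     "customer_email": "a@example.com"}
)
print(scoped)
```

The consuming agent never sees `customer_email` or the raw confidence score, and a low-confidence classification never reaches it at all.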

The Inventory Problem

Before you can govern agents at the boundary, you need to know the boundaries exist. This is the discovery problem, and it is harder than it sounds. A 2026 Deloitte analysis of the multi-agent market estimated the agentic AI orchestration market will reach $35 billion by 2030. That money is not going to centralized platforms. It is being distributed across vendors, internal tools, and embedded capabilities in existing software.

The first step is an inventory. Not an inventory of agents, because agents are embedded in applications and invisible to traditional asset management. An inventory of capabilities. Which applications in the environment have AI agent features enabled? Which of those features can take autonomous action? Which can access data from other systems? Which can modify records, send communications, or trigger workflows?

This is the same audit structure from “They Can Watch. They Cannot Stop.”, extended from individual agents to the organizational ecosystem. The four questions are the same. Can you define and enforce what each agent receives as input? Can you isolate each agent’s execution environment? Can you validate each agent’s output against measurable criteria? Can you prevent each agent from promoting its actions without approval? Apply those questions at the boundary between agents and you have a multi-agent governance audit.

- Enumerate every application in the environment with AI agent capabilities, including embedded copilots and SaaS features.

- For each, identify whether the agent can take autonomous action, access data beyond its primary function, or trigger downstream processes.

- Map the data flows between agent-enabled applications. Every flow is a boundary.

- Apply the four-ring audit to each boundary. Can you scope the input at the handoff? Can you isolate the execution? Can you validate the output? Can you gate the promotion?

- Score each boundary as governed, partial, or ungoverned. The ungoverned boundaries are your risk surface.

Organizations that run this audit typically discover more boundaries than they expected. The agent count may be manageable. The boundary count is where the governance gap hides.
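
The audit steps above can be sketched as a small data structure. This is a rough illustration, not a prescribed tool: the `BoundaryAudit` record, the example agent names, and the scoring rule (all four rings present is governed, none is ungoverned, anything in between is partial) are assumptions I am making to show the shape of the exercise.

```python
from dataclasses import dataclass
from enum import Enum


class Score(Enum):
    GOVERNED = "governed"
    PARTIAL = "partial"
    UNGOVERNED = "ungoverned"


@dataclass(frozen=True)
class BoundaryAudit:
    """One data flow between two agent-enabled applications (hypothetical record)."""
    source: str
    target: str
    input_scoped: bool        # Ring 1: can you scope the input at the handoff?
    execution_isolated: bool  # Ring 2: can you isolate the execution?
    output_validated: bool    # Ring 3: can you validate the output?
    promotion_gated: bool     # Ring 4: can you gate the promotion?

    def score(self) -> Score:
        rings = (self.input_scoped, self.execution_isolated,
                 self.output_validated, self.promotion_gated)
        if all(rings):
            return Score.GOVERNED
        if any(rings):
            return Score.PARTIAL
        return Score.UNGOVERNED


# Example inventory; the applications listed here are made up.
audits = [
    BoundaryAudit("support-agent", "data-pipeline", True, True, True, True),
    BoundaryAudit("data-pipeline", "reporting-agent", False, False, True, False),
    BoundaryAudit("crm-copilot", "email-agent", False, False, False, False),
]

# The ungoverned boundaries are the risk surface.
risk_surface = [(a.source, a.target) for a in audits if a.score() is Score.UNGOVERNED]
print(risk_surface)
```

Note that the records are keyed by data flow, not by agent. Three agents can easily produce more than three rows.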

RPA’s Lesson

Unframe AI’s comparison to RPA includes an observation that applies directly: “Agents aren’t the unit of value. Outcomes are.” The organizations that survived the RPA consolidation crisis were the ones that shifted from managing individual bots to managing business outcomes that bots contributed to. They built centralized orchestration, unified governance, and shared monitoring. They treated the collection of bots as a system rather than a portfolio of independent tools.

The agent version of this lesson is the same. The organization that governs fifty agents as fifty individual tools will face the same consolidation crisis RPA created. The organization that governs fifty agents as a system with defined boundaries, scoped handoffs, and unified observation will not. The difference is not the number of agents. It is whether the governance model scales with the agent count or collapses under it.

“Agentic Engineering Is a Practice. AgenticOps Is the Infrastructure.” made this argument for individual developers: practice degrades under pressure, infrastructure holds. The argument is identical at organizational scale. An organization that relies on each team to govern their own agents will see governance degrade as deployment velocity increases. An organization that builds governance into the boundaries between agents will see governance hold regardless of how many agents individual teams deploy.

The agents are already here. The sprawl has already started. The RPA playbook says the consolidation crisis arrives in 18 to 24 months. The question is whether organizations build the boundaries now or pay for the cleanup later.

Let’s talk about it.

They Can Watch. They Cannot Stop.

An agent misbehaves. The dashboard lights up. An operator sees exactly what is happening: the scope violation, the unauthorized data access, the lateral movement across systems. And then nothing. No kill switch. No isolation. No way to stop what they are watching unfold in real time. This is not a hypothetical. The Kiteworks Data Security Forecast surveyed 225 security, IT, and risk leaders across ten industries and eight regions, and the results say this is the default state of enterprise AI governance. Organizations built the screens. They did not build the brakes.

The four containment rings from the AgenticOps model predict exactly where the failure occurs and in what order to fix it. Observation is one ring. Organizations treated it as all four.

The Numbers

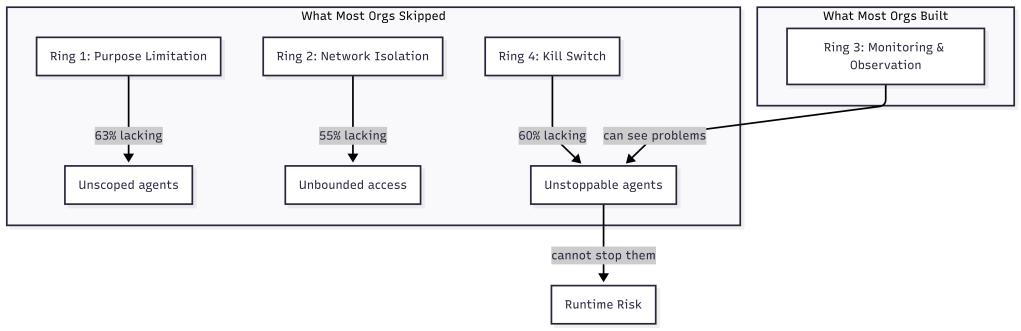

63% of organizations cannot enforce purpose limitations on their AI agents. They know what agents should do. They cannot technically prevent other actions. 60% cannot terminate a misbehaving agent. They can see the agent doing something unexpected. They cannot stop it. 55% cannot isolate AI systems from broader network access. The agent has access to systems beyond its intended scope, and the organization has no mechanism to restrict that access after deployment.

Meanwhile, 58% report continuous monitoring. 59% report human-in-the-loop oversight. 56% report data minimization practices. They can observe their agents, log what agents do, and flag anomalies.

The gap between observation and containment is 15 to 20 percentage points. The security community calls this the governance-containment gap. Most organizations can see an AI agent doing something unexpected and cannot prevent it from exceeding its authorized scope, shut it down, or isolate it from sensitive systems.
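
One way to sanity-check the size of that gap from the figures quoted above is to compare the 58% that report continuous monitoring against the share that can enforce each containment control. This is my own back-of-the-envelope comparison, and the survey may compute its headline range differently.

```python
# Percent of organizations that CANNOT do each thing (figures quoted above).
cannot = {
    "purpose limitation": 63,
    "termination": 60,
    "network isolation": 55,
}
monitoring = 58  # percent reporting continuous monitoring

# Gap = share that can observe minus share that can contain.
gaps = {name: monitoring - (100 - pct) for name, pct in cannot.items()}
print(gaps)
```

By this reading the gaps are 21, 18, and 13 points, which lands in the same neighborhood as the 15 to 20 points cited.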

Mapping the Gap to the Four Rings

The four containment rings from How Agents Stay in Bounds provide a structural explanation for this gap. Each ring addresses a different containment boundary. The Kiteworks data reveals which rings organizations invested in and which they skipped.

Ring 1 is constrain inputs: scope what the agent receives before it starts. Purpose limitation is a Ring 1 control. It defines what the agent is allowed to do by limiting the context, tools, and data it can access. 63% of organizations cannot enforce this ring. They give agents broad access and rely on instructions to stay in scope. How Agents Stay in Bounds described this as the difference between policy and infrastructure. Policy says “only access these systems.” Infrastructure says “these are the only systems that exist in your environment.” Most organizations chose policy.
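
The policy-versus-infrastructure distinction is easy to show in a few lines. This is a sketch under assumptions: the tool names and the `scoped_toolbox` helper are hypothetical, and a real agent framework would wire tools in its own way. What matters is that the agent is handed a toolbox in which the out-of-scope tool simply does not exist.

```python
from typing import Callable

# Every tool the platform offers (hypothetical examples).
ALL_TOOLS: dict[str, Callable[[int], str]] = {
    "read_ticket": lambda ticket_id: f"ticket {ticket_id}",
    "issue_refund": lambda ticket_id: f"refunded {ticket_id}",
}


def scoped_toolbox(all_tools: dict[str, Callable], allowlist: set[str]) -> dict[str, Callable]:
    """Build the only toolbox the agent will ever see."""
    return {name: fn for name, fn in all_tools.items() if name in allowlist}


def call_tool(toolbox: dict[str, Callable], name: str, *args):
    # From the agent's side, a tool outside the allowlist is not forbidden; it is unknown.
    if name not in toolbox:
        raise LookupError(f"unknown tool: {name}")
    return toolbox[name](*args)


support_toolbox = scoped_toolbox(ALL_TOOLS, {"read_ticket"})
print(call_tool(support_toolbox, "read_ticket", 7))
```

No instruction in the prompt has to say “do not issue refunds.” The support agent cannot, because nothing in its environment does that.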

Ring 2 is constrain environment: physically isolate the agent’s execution. Network isolation is a Ring 2 control. It prevents the agent from reaching systems outside its intended scope regardless of what it attempts. 55% cannot enforce this ring. The agent runtime has network access to the broader environment, and the only barrier is the agent’s instructions not to use it. OpenClaw Is Not an AI Assistant demonstrated how Docker sandboxes, tool sandboxing, and network allowlists create physical containment around agent runtimes. The organizations in this survey do not have that infrastructure.
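
A network allowlist reduces to a small decision function. The sketch below shows only that decision logic, with made-up hostnames. Real Ring 2 enforcement has to live outside the agent process, in an egress proxy, firewall, or container network policy, because a check the agent runtime can skip is just policy again.

```python
from urllib.parse import urlsplit

# Hypothetical hosts this agent is meant to reach.
ALLOWED_HOSTS = frozenset({"crm.internal.example", "tickets.internal.example"})


def egress_allowed(url: str, allowed_hosts: frozenset = ALLOWED_HOSTS) -> bool:
    """Decide whether an outbound request should leave the sandbox."""
    host = urlsplit(url).hostname  # lowercased hostname, or None if the URL has no host
    return host is not None and host in allowed_hosts


print(egress_allowed("https://crm.internal.example/api/records"))
print(egress_allowed("https://files.elsewhere.example/upload"))
```

The default is deny: anything that does not parse to an allowlisted host is blocked, including malformed URLs.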

Ring 3 is validate outputs: evaluate what the agent produced before it takes effect. This is where the 58% monitoring figure lives. Organizations invested here. They can observe agent behavior, flag anomalies, and log actions for review. This ring is necessary but insufficient on its own. Observation without the ability to act on what you observe is surveillance, not governance.

Ring 4 is gate promotion: prevent unverified work from reaching production systems. Kill switches are a Ring 4 control. They prevent or reverse the promotion of agent actions that violate criteria. 60% of organizations cannot terminate a misbehaving agent. They can see the agent is misbehaving through their Ring 3 investment. They cannot stop it because they never built Ring 4.
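
Here is a minimal sketch of what a Ring 4 control might look like in code, assuming a hypothetical `PromotionGate` that every agent action must pass through before it takes effect. The class and its method names are illustrative, not an existing API.

```python
import threading


class PromotionGate:
    """Hypothetical gate between an agent's proposed actions and production."""

    def __init__(self) -> None:
        self._killed = threading.Event()   # the kill switch, safe to set from any thread
        self.promoted: list[str] = []      # actions that actually took effect

    def kill(self) -> None:
        # An operator (or an automated monitor) halts all further promotion.
        self._killed.set()

    def promote(self, action: str, passed_evaluation: bool) -> bool:
        # Nothing reaches production after a kill, or without passing evaluation.
        if self._killed.is_set() or not passed_evaluation:
            return False
        self.promoted.append(action)
        return True


gate = PromotionGate()
gate.promote("update record 1", passed_evaluation=True)   # takes effect
gate.promote("update record 2", passed_evaluation=False)  # blocked by evaluation
gate.kill()
gate.promote("update record 3", passed_evaluation=True)   # blocked by the kill switch
print(gate.promoted)
```

The monitoring dashboard from Ring 3 is what tells the operator to call `kill()`. Without the gate, there is nothing for that call to act on.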

| Ring | Control | Capability | Organizations Lacking |
| --- | --- | --- | --- |
| 1 | Constrain inputs | Purpose limitation | 63% |
| 2 | Constrain environment | Network isolation | 55% |
| 3 | Validate outputs | Continuous monitoring | 42% |
| 4 | Gate promotion | Kill switch / termination | 60% |

The pattern is clear. Organizations invested in Ring 3 and skipped Rings 1, 2, and 4. They built the middle of the defense and left the boundaries open. This is precisely what How Agents Stay in Bounds predicted: every ring you skip is a ring you inherit as runtime risk.

Why Organizations Built Observation First

The investment pattern is not irrational. Observation is easier to implement than containment. Monitoring can be added to existing agent deployments without redesigning the architecture. Log aggregation, anomaly detection, and human review queues are familiar patterns that security teams already understand from traditional application monitoring.

Containment requires architectural decisions made before deployment. Ring 1 requires scoping the agent’s context at design time. Ring 2 requires sandbox infrastructure that isolates the runtime. Ring 4 requires promotion gates that can halt or reverse agent actions. These are not things you bolt on after the agent is running. They are things you build into the deployment architecture from the start.

“You Can Build This. Three Artifacts and a Sandbox.” described the build order: constraint first, then agent definition, then gate, then sandbox. That order is deliberate. You define what the agent can do before you define the agent. You define what success looks like before you let the agent run. You build the sandbox before you execute inside it. The organizations in the Kiteworks survey did it the other way around. They deployed agents, then added monitoring, and now they are discovering that monitoring without containment leaves them watching problems they cannot fix.

Government Is in the Worst Position

The Kiteworks data includes a sector breakdown that makes the structural argument even more concrete. Government agencies are in the worst position across every containment metric. 90% lack purpose-binding controls. 76% lack kill switches. A third have no dedicated AI controls at all.

This is not because government agencies are less capable. It is because they face the most acute version of the structural problem. Procurement cycles are long. Architecture decisions are locked into multi-year contracts. The agents are deployed by vendors into environments the agency does not control. Ring 1 requires scoping the agent’s context, but the agency may not have visibility into what context the vendor’s agent receives. Ring 2 requires environmental isolation, but the agent may run in the vendor’s cloud, not the agency’s infrastructure.

The containment model assumes the organization controls the deployment environment. When that assumption breaks because the agent runtime is managed by a third party, containment becomes a contractual problem, not just a technical one. The four rings still apply, but the implementation shifts from infrastructure configuration to vendor requirements and service-level agreements.

100% Have Agents on the Roadmap

The most striking number in the survey is not about failures. It is about ambition. 100% of organizations surveyed have agentic AI on their roadmap. Zero exceptions. Across 225 organizations, ten industries, eight regions, not a single organization plans to abstain from agent deployment.

This means the governance-containment gap is not a temporary condition that some organizations will avoid. It is the default starting position for every organization that deploys agents. The question is not whether an organization will face this gap. The question is whether they close it before something goes wrong.

What AgenticOps Actually Looks Like introduced three non-negotiables: no generation without defined contracts, no promotion without evaluation, no runtime without observability. The Kiteworks data suggests a fourth: no deployment without containment. Observability alone is not governance. It is the prerequisite for governance, not the thing itself.

Closing the Gap

The gap closes in a specific order. Ring 1 first: define what each agent is allowed to do, not in policy documents but in scoped contexts and tool allowlists. Ring 2 next: isolate the agent’s execution environment so that the boundary is physical, not instructional. Ring 4 last: build promotion gates that can halt or reverse agent actions when evaluation criteria fail. Ring 3, observation, is what most organizations already have. The gap is everything else.

This is the same build order from You Can Build This applied at enterprise scale. Write a constraint. Define the agent. Write a gate. Run it in a sandbox. The artifacts are different when the agent is a customer service bot instead of a code generator, but the pattern is identical. Scope the input. Isolate the environment. Verify the output. Gate the promotion.

The Agents of Chaos study also found that 75% of leaders will not let security concerns slow their AI deployment. This means the gap will not close by slowing down. It will close by building containment infrastructure that runs at deployment speed. How Agents Stay in Bounds made this argument for individual agents. The Kiteworks data makes it for entire organizations.

The model was built to describe this problem. Now the problem has data. The four rings are no longer a conceptual model. They are a diagnostic tool. Map your agent deployments against the four rings. Where you have gaps, you have risk. Where you have all four, you have governance.

The data says most organizations are watching. Watching is not governing. Governing requires the ability to stop what you are watching. That requires infrastructure, not observation.

Let’s talk about it.