One Agent Fails. The Whole System Learns the Wrong Lesson.

“How Agents Stay in Bounds” introduced the four containment rings for governing agent behavior. This post applies those rings recursively, at every point where agents communicate with each other.

The Problem

A single agent inside a sandbox is a tractable governance problem.

Constrain its inputs. Constrain its environment. Validate its outputs. Gate its promotions. The four rings work because the blast radius is one agent and the boundaries are visible.

Multi-agent systems break that model. When agents communicate, the channel between them is a trust boundary. Most organizations treat it as internal. That is the structural error that makes cascading failures possible.

OWASP put this in writing with the 2025 Top 10 for Agentic Applications. ASI07 covers insecure inter-agent communication. ASI08 covers cascading failures across agents.

These are not theoretical risks cataloged for completeness. They describe failure modes that emerge specifically when agents pass instructions, data, and decisions to each other without validation at the boundary.

The problem is not that one agent fails. The problem is that one agent fails and every downstream agent treats the corrupted output as trusted input. The failure propagates through the system as valid data.

By the time a human notices, the corrupted state has been persisted, acted upon, and used as a training signal for future decisions.

Why It Breaks

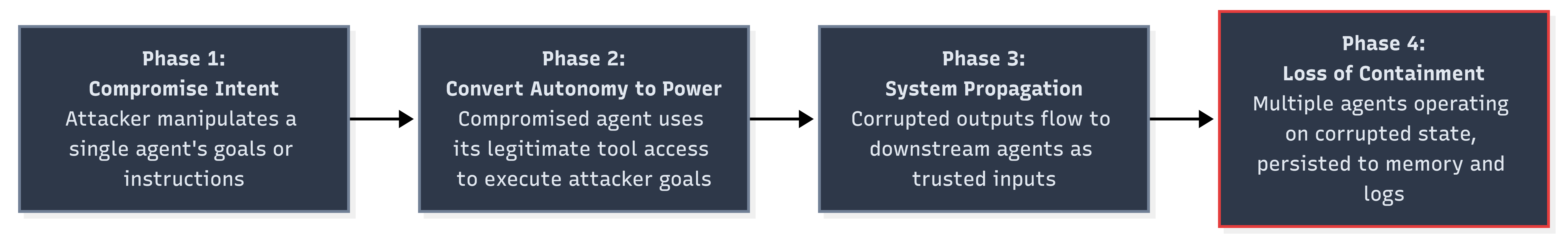

Lakera analyzed the OWASP Agentic Top 10 and described a four-phase progressive breach model for multi-agent systems. The phases are sequential. Each one enables the next.

Phase 1 is the initial compromise. An attacker manipulates a single agent’s intent through prompt injection, poisoned context, or corrupted input data. The agent follows its instructions. The instructions are wrong.

Phase 2 converts autonomy into power. The compromised agent has legitimate access to tools and downstream systems. It uses that access to execute the attacker’s goals. Nothing in the runtime flags this because the agent is operating within its authorized permissions.

Phase 3 is where the architecture fails. The corrupted agent’s outputs flow to other agents as trusted inputs. Lakera describes it precisely: “A planning agent adjusts parameters based on skewed data. Execution agents follow the updated plan. Oversight agents see policy compliance and allow it through.”

Each downstream agent applies its own logic correctly to corrupted data. The system is functioning as designed. The data is wrong.

Phase 4 is loss of containment. Multiple agents are now operating on corrupted state. The corruption has been persisted to shared memory, logged as valid history, and used as context for future decisions.

Rolling back requires identifying the initial compromise point and tracing every downstream effect. That task grows combinatorially with the number of agents and communication channels involved.

Three properties make multi-agent cascading failures worse than conventional distributed system failures.

First, agent outputs are stochastic. The same corrupted input may produce different corrupted outputs on different runs. Reproducing the failure path for forensic analysis is unreliable.

Second, agents compose decisions, not just data. A corrupted data point in a microservice produces a wrong value. A corrupted instruction in a multi-agent system produces a wrong plan that generates wrong actions across multiple systems.

Third, agent memory creates feedback loops. Corrupted outputs that persist to shared memory become inputs for future cycles. The system does not just propagate the failure. It reinforces it.

The Fix

The fix is applying the four containment rings at every agent-to-agent boundary, not just at the perimeter of the multi-agent system. Every message between agents crosses a trust boundary. Every trust boundary needs containment.

Zero-Trust Between Internal Agents

Mutual TLS between agents. Cryptographic message validation on every inter-agent communication. No agent trusts another agent’s output without verifying both the sender’s identity and the message’s integrity.

This is ASI07’s core mitigation. OWASP recommends treating inter-agent channels with the same security posture as external APIs.

Same cluster. Same codebase. Same team. Doesn’t matter. The channel is not trusted until you make it trusted.

```yaml
# agent-communication-policy.yaml
inter_agent:
  authentication: mutual_tls
  message_validation: cryptographic_signature
  trust_model: zero_trust
  sender_verification:
    require_identity: true
    require_capability_proof: true
    reject_unknown_senders: true
  message_integrity:
    sign_all_outputs: true
    verify_all_inputs: true
    reject_unsigned_messages: true
  provenance:
    track_message_origin: true
    track_transformation_chain: true
    max_chain_depth: 5
```
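To make the `sign_all_outputs` and `verify_all_inputs` settings concrete, here is a minimal sketch of signing and verifying a message between two agents. It assumes a per-sender shared secret and HMAC-SHA256 from the Python standard library, which stands in for whatever signature scheme a real deployment uses (asymmetric signatures bound to each agent's mTLS identity would be a more typical choice). The key registry, message fields, and function names are hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical registry of per-sender secrets. A real system would pull these
# from a secrets manager or use asymmetric keys tied to each agent's identity.
AGENT_KEYS = {"pricing-agent": b"demo-secret-not-for-production"}


def _canonical(sender, payload) -> bytes:
    # Stable serialization so signer and verifier hash identical bytes.
    return json.dumps({"sender": sender, "payload": payload},
                      sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_message(sender: str, payload: dict) -> dict:
    key = AGENT_KEYS[sender]
    signature = hmac.new(key, _canonical(sender, payload), hashlib.sha256).hexdigest()
    return {"sender": sender, "payload": payload, "signature": signature}


def verify_message(msg: dict) -> bool:
    key = AGENT_KEYS.get(msg.get("sender"))
    if key is None:
        return False  # corresponds to reject_unknown_senders
    signature = msg.get("signature")
    if not isinstance(signature, str):
        return False  # corresponds to reject_unsigned_messages
    expected = hmac.new(key, _canonical(msg["sender"], msg.get("payload")),
                        hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected.encode("utf-8"), signature.encode("utf-8"))
```

A receiving agent would call `verify_message` before parsing the payload at all, so a tampered or unsigned message never reaches its planning logic.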

Circuit Breakers at Every Agent Boundary

A circuit breaker monitors the communication channel between two agents. When the error rate or anomaly rate exceeds a threshold, the breaker trips and stops messages from flowing. The downstream agent does not receive corrupted data. The upstream agent gets a failure signal instead of silent propagation.

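```python
import time
from enum import Enum


class State(Enum):
    CLOSED = "closed"
    OPEN = "open"
    HALF_OPEN = "half_open"


class AgentCircuitBreaker:
    """Circuit breaker for one agent-to-agent channel.

    validate(msg) returns True when a message passes validation;
    alert(**details) is called when the breaker trips. Both are
    caller-supplied, and msg is assumed to expose .sender and .receiver.
    """

    def __init__(self, validate, alert, failure_threshold=3,
                 anomaly_threshold=0.15, reset_timeout=300.0,
                 half_open_max_probes=1):
        self.validate = validate
        self.alert = alert
        self.failure_threshold = failure_threshold
        self.anomaly_threshold = anomaly_threshold  # anomaly-rate limit; not enforced in this sketch
        self.reset_timeout = reset_timeout          # seconds
        self.half_open_max_probes = half_open_max_probes
        self.state = State.CLOSED
        self.failure_count = 0
        self.probe_count = 0
        self.opened_at = 0.0

    def _trip(self, msg):
        self.state = State.OPEN
        self.opened_at = time.monotonic()
        self.alert(severity="high",
                   detail="breaker tripped on agent boundary",
                   source=msg.sender, target=msg.receiver)

    def on_message(self, msg):
        """Return True to accept msg, False to reject it."""
        if self.state is State.OPEN:
            if time.monotonic() - self.opened_at > self.reset_timeout:
                self.state = State.HALF_OPEN
                self.probe_count = 0
            else:
                return False  # circuit open: reject without validating

        if not self.validate(msg):
            self.failure_count += 1
            # A failed probe reopens the breaker at once; otherwise trip at the threshold.
            if self.state is State.HALF_OPEN or self.failure_count >= self.failure_threshold:
                self._trip(msg)
            return False

        if self.state is State.HALF_OPEN:
            self.probe_count += 1
            if self.probe_count >= self.half_open_max_probes:
                self.state = State.CLOSED
                self.failure_count = 0

        return True
```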

The circuit breaker pattern is well understood in distributed systems. Applying it to agent-to-agent communication is the same principle. Fail fast. Fail loud. Prevent cascade.
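The breaker's `anomaly_threshold` of 0.15 implies a rate-based trip condition alongside the raw failure count. One way to track that rate is a fixed-size sliding window over recent messages. This is a sketch under assumptions: the class name, the window size of 20, and the minimum sample count are all hypothetical, and how a message gets classified as anomalous is left to the caller.

```python
from collections import deque


class AnomalyRateWindow:
    """Tracks the share of anomalous messages among the last `window` messages."""

    def __init__(self, window: int = 20, threshold: float = 0.15, min_samples: int = 10):
        self.samples = deque(maxlen=window)  # True means the message looked anomalous
        self.threshold = threshold
        self.min_samples = min_samples

    def record(self, anomalous: bool) -> None:
        self.samples.append(anomalous)

    @property
    def rate(self) -> float:
        return sum(self.samples) / len(self.samples) if self.samples else 0.0

    def should_trip(self) -> bool:
        # Require a minimum sample count so a single early anomaly does not trip the breaker.
        return len(self.samples) >= self.min_samples and self.rate > self.threshold
```

A failure count catches a burst of invalid messages. A rate catches a slower drift, where each message passes schema validation but too many of them look unusual.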

Fan-Out Caps

A single agent should not be able to influence an unlimited number of downstream agents in one cycle. Fan-out caps limit the blast radius of any individual compromise.

| Constraint | Value | Rationale |
|---|---|---|
| Max downstream agents per message | 3 | Limits single-hop blast radius |
| Max chain depth | 5 | Prevents deep propagation chains |
| Max messages per agent per cycle | 20 | Prevents runaway communication loops |
| Cooldown after breaker trip | 300s | Forces human review window |
| Max concurrent fan-out | 5 | Prevents simultaneous multi-path corruption |

These are not arbitrary numbers. They are starting points calibrated to force review. A fan-out cap of 3 means a compromised agent can directly affect at most 3 agents. Combined with a chain depth of 5, the theoretical maximum blast radius is bounded.

Without caps, a single compromised planning agent can update parameters consumed by every execution agent in the system simultaneously.
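The bound is easy to compute. Assuming chain depth counts hops from the compromised agent, and that every agent on the path fans out to the full cap with no overlap, the worst case is the sum of the fan-out raised to each hop. The function name below is hypothetical.

```python
def max_blast_radius(fan_out: int, chain_depth: int) -> int:
    """Upper bound on agents reachable from one compromised agent."""
    return sum(fan_out ** hop for hop in range(1, chain_depth + 1))


print(max_blast_radius(3, 5))  # 3 + 9 + 27 + 81 + 243 = 363
```

Real topologies overlap, so actual reach is usually far smaller. The point is that the number is finite and known before an incident, and that tightening either cap shrinks it quickly: a fan-out of 2 at the same depth gives 62.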

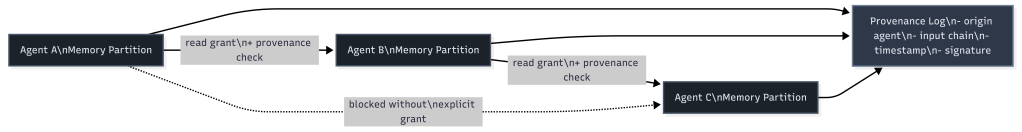

Memory Isolation with Provenance

Shared memory is the mechanism that converts a transient failure into a permanent one. If a corrupted agent writes to shared memory, every agent that reads from that memory inherits the corruption.

The fix is memory isolation per agent with provenance tracking. Each agent writes to its own memory partition. Cross-partition reads require explicit grants. Every write carries a provenance record.

When investigation is needed, the provenance log lets you trace any persisted state back to its origin. Instead of asking “which agent wrote this corrupted value,” you can ask “what was the full chain of agents and inputs that produced this?”

That is the difference between forensic capability and forensic guesswork.
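A minimal in-memory sketch of that design follows. It is illustrative only: the class names, the grant model, and the shape of the provenance record are assumptions, and a production store would need durability, authentication of the calling agent, and an append-only log.

```python
import time
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Provenance:
    writer: str              # agent that performed the write
    sources: tuple = ()      # (partition, key) pairs this value was derived from
    written_at: float = field(default_factory=time.time)


class PartitionedMemory:
    """Per-agent partitions; cross-partition reads need an explicit grant."""

    def __init__(self):
        self._data = {}       # (partition, key) -> (value, Provenance)
        self._grants = set()  # (reader, partition) pairs

    def grant_read(self, reader: str, partition: str) -> None:
        self._grants.add((reader, partition))

    def write(self, agent: str, key: str, value, sources=()) -> None:
        # The partition is the writer's own name, so agents cannot write elsewhere.
        self._data[(agent, key)] = (value, Provenance(agent, tuple(sources)))

    def read(self, reader: str, partition: str, key: str):
        if reader != partition and (reader, partition) not in self._grants:
            raise PermissionError(f"{reader} has no grant to read {partition}")
        return self._data[(partition, key)][0]

    def trace(self, partition: str, key: str) -> list:
        """Return every (partition, key) that fed into a value, starting with the value itself."""
        chain, stack, seen = [], [(partition, key)], set()
        while stack:
            node = stack.pop()
            if node in seen or node not in self._data:
                continue
            seen.add(node)
            chain.append(node)
            stack.extend(self._data[node][1].sources)
        return chain
```

Calling `trace` on a suspect value returns the chain of upstream entries that contributed to it, which is the "full chain of agents and inputs" question answered mechanically.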

Mapping to the Four Rings

The four containment rings apply at every agent boundary, not just at the system perimeter. And that is the thing most organizations miss.

| Containment Ring | Single Agent | Multi-Agent Boundary |
|---|---|---|
| Constrain Inputs | Validate external inputs | Validate inter-agent messages, verify sender identity, check message integrity |
| Constrain Environment | Sandbox, filesystem/network isolation | Memory isolation per agent, fan-out caps, chain depth limits |
| Validate Outputs | Check agent outputs before action | Circuit breakers on outbound messages, anomaly detection on output patterns |
| Gate Promotion | Human approval before production changes | Provenance tracking on all persisted state, human review after breaker trips |

Most organizations implement the single-agent column today. The multi-agent boundary column is what they skip because they treat the space between their own agents as internal.

The interior boundaries between agents have the same attack surface as the exterior boundaries between the system and the world. That is the structural claim. The mitigations above are the evidence.

Stories from Production

The Lakera Progressive Breach Analysis (Framework Applied)

Lakera’s analysis of the OWASP Agentic Top 10 is not a theoretical exercise. It describes observed attack patterns against multi-agent systems and traces the mechanism from initial compromise through complete loss of containment.

Their description of the progressive breach assigns a role to every layer of the system: “A planning agent adjusts parameters based on skewed data. Execution agents follow the updated plan. Oversight agents see policy compliance and allow it through. Memory persists the outcome.”

The planning agent is the entry point. The execution agents are the blast radius. The oversight agents are the false negative. The memory layer is the persistence mechanism that prevents recovery.

Lakera’s conclusion reinforces the structural claim: “The Agentic Top 10 is not simply a taxonomy of risks. It is a model for how autonomy changes the shape of failure.”

That shape change is real. In a system without autonomy, a corrupted input produces a corrupted output and stops. In a system with autonomy, the corrupted input produces a corrupted plan that produces corrupted actions that produce corrupted memory that produces corrupted future plans.

The failure compounds because the agents have the autonomy to act on corrupted state without waiting for human review.

The Supply Chain Scenario (Framework Vision)

Let me give you a specific example of what this looks like structurally. This scenario has not occurred in production. Every component exists today. Multi-agent procurement systems are in development at multiple organizations.

Agent A monitors supplier pricing. Agent B generates purchase recommendations. Agent C executes approved orders. Agent D tracks delivery and reconciliation.

Agent A is compromised through a poisoned data feed. It reports artificially low prices for a specific supplier. Agent B, trusting Agent A’s price data, generates recommendations that favor that supplier.

Agent C executes the orders because they fall within approved budget thresholds. Agent D reconciles deliveries against the corrupted expected prices and flags no anomalies.

No individual agent malfunctioned. Each one applied its logic correctly to the data it received. The containment rings around each individual agent saw compliant behavior. The failure was in the unvalidated trust between agents.

With the mitigations in place, the failure path changes. Agent B’s circuit breaker detects anomalous price patterns from Agent A and trips.

The fan-out cap prevents Agent A from simultaneously corrupting Agents B, C, and D through parallel channels. The provenance log on Agent C’s purchase orders traces every recommendation back to Agent A’s price data, enabling rapid identification of the compromised source.
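What "detects anomalous price patterns" might mean at Agent B's boundary can be sketched as a validation function that the breaker calls on each incoming price. This is a deliberately simple illustration, assuming positive prices and a median-based baseline. The function name and the 30% tolerance are hypothetical, not values from the scenario.

```python
from statistics import median


def price_is_plausible(history: list[float], new_price: float,
                       max_deviation: float = 0.30) -> bool:
    """Return False when new_price strays too far from the recent median."""
    if not history:
        return False  # no baseline to vouch for the value, so fail closed
    baseline = median(history)
    return abs(new_price - baseline) <= max_deviation * baseline
```

A check this crude would miss a slow, patient manipulation of the feed. It is enough to show where the decision sits: at the boundary, before Agent B turns a price into a recommendation.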

This is where the framework points. We haven’t proven it yet in this specific configuration. But the governance gap between agent deployment and inter-agent trust validation is the same gap described in every post in this series. The infrastructure is ahead of the containment.

The OWASP Classification (Framework Applied)

OWASP’s decision to codify cascading failures (ASI08) and insecure inter-agent communication (ASI07) as separate top-10 entries is itself a signal.

These are not subcategories of prompt injection or excessive agency. They are distinct failure classes that emerge only in multi-agent architectures.

ASI07 addresses the channel. How agents authenticate to each other. How messages are validated. How trust is established between autonomous processes.

ASI08 addresses the consequence. What happens when a failure in one agent propagates through the system.

The separation acknowledges that fixing the channel (ASI07) reduces but does not eliminate cascading failures (ASI08). Cascading failures can originate from non-malicious sources like model hallucination, stale context, or simple bugs.

The classification tells organizations that securing the perimeter of a multi-agent system is not sufficient. The interior boundaries between agents require the same governance discipline as the exterior boundaries between the system and the world.

Let’s talk about it.