
Agentic Engineering Is a Practice. AgenticOps Is the Infrastructure.

In early 2026, multiple people working independently arrived at the same conclusion about how professional developers should work with AI coding agents. They converged on a term: agentic engineering. The practices they describe are correct. But practices live in behavior, and behavior degrades under pressure. Nothing in the agentic engineering model enforces the practice when the practitioner is tired, rushed, or outnumbered.

The Industry Converged

In early 2026, Andrej Karpathy proposed the term “agentic engineering” to describe what professional developers actually do when they work with AI coding agents. Addy Osmani wrote the definitive guide. IBM published a formal definition. Simon Willison drew the line that separates it from vibe coding: “I think the borderline is when you take responsibility for the code, and stop blaming the LLM for any mistakes.”

The core claims are familiar. Humans own architecture and quality. Agents handle implementation. Testing is the single biggest differentiator between disciplined and undisciplined AI-assisted work. Specifications before prompts produce better output than prompts alone. These are not new observations. “I’ve Never Fully Understood the Systems I Work In” described the same boundary between human judgment and agent execution. “How Agents Stay in Bounds” formalized it as four rings of containment. The language differs. The structure is the same.

What makes this convergence meaningful is not that smart people agree. It is that they agree independently, from different starting points, using different evidence. When multiple observers reach the same conclusion through different paths, the conclusion is likely structural rather than stylistic.

The Practice Is Necessary But Not Sufficient

Agentic engineering describes how a developer should work. Write specifications first. Review agent output with the same rigor as human code. Test relentlessly. Maintain architectural discipline. These are practices, and they are correct. Osmani’s observation that “agentic engineering actually rewards strong fundamentals more than traditional development” matches exactly what I found building the system described in “You Can Build This. Three Artifacts and a Sandbox.”

The problem is that practices depend on practitioners. A developer following agentic engineering principles produces governed output. A developer who skips the specification step, or merges without reviewing the diff, or runs without tests, produces ungoverned output. The practice has no mechanism to enforce itself. It relies on discipline, and discipline degrades under pressure. Deadlines compress. Scope expands. The careful developer who reviews every diff at 10 AM rubber-stamps the last three at 6 PM.

Any approach that lives in behavior rather than infrastructure has this limitation. “Verification Beats Debugging” made the same argument in the AgenticOps Applied series: the fix is not better discipline but verification pipelines that make discipline unnecessary. What matters is what happens when developers do not follow the practice, because eventually they will not.

Where Agentic Engineering Stops

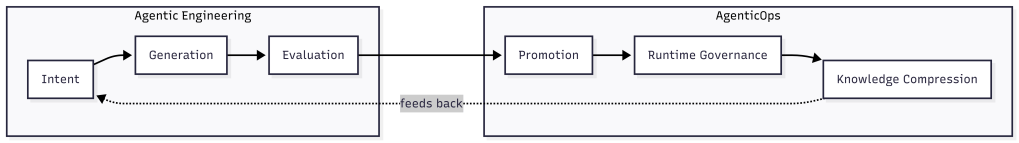

The agentic engineering literature covers three activities well. Intent specification: write a design document or task description before prompting. Agent generation: let the agent implement within a scoped context. Evaluation: review the output, run tests, verify correctness. These map to the first three layers of the AgenticOps model from “What AgenticOps Actually Looks Like”: Intent, Agent Generation, and Evaluation.

The literature is largely silent on what happens after evaluation. Promotion, the controlled movement of verified work into production environments, is rarely addressed. Runtime governance, the observation and constraint of agent behavior in live systems, appears only in security-focused discussions. Knowledge compression, the systematic reduction of system complexity into navigable artifacts, is almost entirely absent.

These are layers four through six. They are the layers that turn a development practice into an operational system. Without them, agentic engineering produces high-quality work in development and hopes it stays that way in production.

The feedback loop matters. Knowledge compression feeds back into intent. The compressed understanding of how the system behaves in production shapes the next round of specifications. Without that loop, each development cycle starts fresh. With it, each cycle compounds on what the system learned about itself.
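The six layers and the loop back to intent can be sketched in a few lines. This is an illustration only: the layer names come from the text above, while `next_layer` and `next_spec` are hypothetical helpers invented for the example, not part of any published AgenticOps tooling.

```python
from enum import Enum


class Layer(Enum):
    """The six layers named in the text, in order."""
    INTENT = 1
    AGENT_GENERATION = 2
    EVALUATION = 3
    PROMOTION = 4
    RUNTIME_GOVERNANCE = 5
    KNOWLEDGE_COMPRESSION = 6


def next_layer(layer: Layer) -> Layer:
    """Return the layer that follows; layer six wraps back to Intent."""
    members = list(Layer)
    return members[(members.index(layer) + 1) % len(members)]


def next_spec(spec: str, lessons: list[str]) -> str:
    """Fold compressed lessons from the last cycle into the next specification.

    With no lessons the spec is returned unchanged, which is the
    "each cycle starts fresh" case described above.
    """
    if not lessons:
        return spec
    notes = "\n".join(f"- {lesson}" for lesson in lessons)
    return f"{spec}\n\nKnown production behavior:\n{notes}"
```

The wrap-around in `next_layer` is the whole point: without it the model is a pipeline, and with it the model is a loop.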

Containment Is Not a Practice

The four containment rings from “How Agents Stay in Bounds” illustrate the difference most clearly.

- Constrain inputs.

- Constrain environment.

- Validate outputs.

- Gate promotion.

These are not things a developer does. They are things the system enforces.

Ring 1, constraining inputs, means the agent receives a scoped context defined by skill files and schemas. The developer did not decide at runtime what to include. The constraint existed before the agent started.

Ring 2, constraining the environment, means the agent runs in a sandbox that physically prevents access to anything outside the workspace. The developer did not have to trust the agent not to wander. The environment made wandering impossible.

Ring 3, validating outputs, means automated gates evaluate the result against measurable criteria. The developer did not have to judge quality from a diff. The gate returned a score.

Ring 4, gating promotion, means nothing moves forward without evidence. The developer did not have to remember to check. The system refused to proceed without passing the gate.
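To make "the system refuses" concrete, here is a minimal sketch of Ring 2 and Ring 4 as code. Everything in it is an assumption made for illustration: `resolve_in_workspace`, `promote`, `ContainmentError`, and the check names `tests` and `lint` are hypothetical. An application-level path check like this is also weaker than a real sandbox, which enforces the boundary at the operating-system level.

```python
from pathlib import Path


class ContainmentError(Exception):
    """Raised when a request falls outside what the system allows."""


def resolve_in_workspace(workspace: Path, requested: str) -> Path:
    """Ring 2: resolve a path and refuse anything outside the workspace."""
    root = workspace.resolve()
    # Resolving collapses ".." segments, so traversal attempts end up
    # outside the root and are rejected by the check below.
    target = (root / requested).resolve()
    if not target.is_relative_to(root):  # requires Python 3.9+
        raise ContainmentError(f"{requested!r} is outside the workspace")
    return target


def promote(artifact: str, evidence: dict[str, bool]) -> str:
    """Ring 4: refuse to promote unless every required check has passed."""
    required = ("tests", "lint")  # hypothetical check names
    # A check that was never run counts as missing, not as passed.
    missing = [name for name in required if not evidence.get(name, False)]
    if missing:
        raise ContainmentError(f"cannot promote {artifact}: missing {missing}")
    return f"promoted {artifact}"
```

Neither function asks the caller to remember anything. A request for `../secrets` or a promotion with an unrun check raises an exception, so the failure mode is a refusal rather than a silent pass.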

“OpenClaw Is Not an AI Assistant” demonstrated this with three isolation layers and multiple containment rings around a real agent runtime: Docker sandboxes, tool sandboxes, allowlists, network restrictions, and human approval gates. The containment was not a developer practice. It was infrastructure configuration. The agent could not violate the boundary because the boundary was physical, not procedural.

Agentic engineering asks the developer to be disciplined. AgenticOps builds the system so that discipline is the default and violation is structurally difficult. The distinction is the same one from “How Agents Stay in Bounds”: policy says “don’t.” Infrastructure says “can’t.”

Practice Drifts. Infrastructure Holds.

A Hacker News commenter captured the concern precisely: “The effects of vibe coding destroy trust inside teams and orgs, between engineers.” The damage comes not from individual failures but from inconsistency. One developer follows the practice. Another does not. The codebase contains governed and ungoverned output that looks identical in a pull request.

Infrastructure eliminates this variance. When every agent session runs inside the same sandbox, uses the same constraint files, and passes through the same gates, the output quality has a floor. Individual developers can exceed the floor. They cannot go below it. “Most Software Is Just CRUD. That’s Not the Problem.” argued that the danger is cheap generation without constraint discipline. Infrastructure is how constraint discipline survives contact with a team of twenty developers, varying skill levels, and a Friday afternoon deadline.

The Treasure Data case study illustrates this concretely. They tried speed without structure first. Then they embedded governance into infrastructure, not policy documents. The result was one engineer shipping a production AI tool in an hour. The constraint was the accelerator. Governance infrastructure made them faster because it removed the decision overhead that slowed them down. Every developer on the team produced output that met the same quality bar because the quality bar was enforced by the system, not by individual judgment.

The Build Plan Still Works

The three artifacts from “You Can Build This” are the implementation of this distinction.

- Constraints are Ring 1.

- Agent definitions with tool allowlists and forbidden lists are Ring 2.

- Gates with measurable success criteria are Rings 3 and 4.

The sandbox makes containment physical. None of these require the developer to remember to be disciplined. They require the developer to define the constraint once and let the system enforce it on every run.
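One way to picture "define the constraint once" is as plain data that the system consults on every run. The sketch below is hypothetical throughout: the class names, field names, and example metrics are assumptions made for illustration, not the format used in the original build plan.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Constraints:
    """Ring 1: the context an agent may receive, fixed before it starts."""
    context_files: frozenset[str]

    def scope(self, available: list[str]) -> list[str]:
        """Keep only the files that were declared in advance."""
        return [path for path in available if path in self.context_files]


@dataclass(frozen=True)
class AgentDefinition:
    """Ring 2: which tools an agent may call."""
    name: str
    allowed_tools: frozenset[str]
    forbidden_tools: frozenset[str]

    def permits(self, tool: str) -> bool:
        """A tool must be allowlisted and must not be forbidden."""
        return tool in self.allowed_tools and tool not in self.forbidden_tools


@dataclass(frozen=True)
class Gate:
    """Rings 3 and 4: a measurable criterion with a minimum value."""
    metric: str
    minimum: float

    def passes(self, measurements: dict[str, float]) -> bool:
        """A missing measurement fails, so absent evidence never passes."""
        value = measurements.get(self.metric)
        return value is not None and value >= self.minimum


def failed_gates(gates: list[Gate], measurements: dict[str, float]) -> list[str]:
    """Return the metrics that block promotion; an empty list means proceed."""
    return [gate.metric for gate in gates if not gate.passes(measurements)]
```

The forbidden list wins over the allowlist on purpose: if a tool ends up on both by mistake, the safer reading applies. All three classes are frozen so that a definition cannot be loosened in the middle of a run.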

This is why the convergence matters. Agentic engineering identified the right practices. AgenticOps provides the infrastructure to make those practices the default. The industry does not need to choose between them. It needs both. The practice tells you what to do. The infrastructure ensures you actually do it.

I am glad the industry arrived at the same conclusion. The next question is whether it will build the infrastructure or stop at the practice.

Let’s talk about it.