40% Will Be Canceled. Not Because the Models Failed.

Post 3 of the AgenticOps series defined the six layers and four containment rings. This post maps Gartner’s projected cancellation drivers to specific gaps in that model.

The Comfortable Take Is Wrong

The take you keep seeing is that AI projects fail because the models are not ready. They hallucinate. They are unreliable. Wait for better models. Feel me? That is the played take. And it is distracting.

Gartner predicts more than 40% of agentic AI projects will be canceled or scaled back by 2027. The cited reasons are escalating costs, unclear business value, and inadequate risk controls.

None of those are model failures. GPT-5, Claude, Gemini will all be more capable in 2027 than they are today.

Real talk: the bottleneck is governance. Or more precisely, the absence of it.

73% of organizations are deploying AI tools right now. Only 7% govern them in real time.

That is a 66-point gap between deployment velocity and governance maturity. And that gap is exactly where the 40% lives.

80% of organizations report risky agent behaviors in production. 15% of daily work decisions will be made by agentic AI by 2028, up from essentially zero in 2024.

The industry is scaling deployment without scaling containment. Gartner’s 40% cancellation rate is not a prediction about models. It is a prediction about what happens when you run stochastic systems without structural boundaries.

Now let me give you a specific example of what is making this worse.

Of the thousands of companies now marketing “agentic AI” capabilities, roughly 130 are real. The rest are agent washing.

They are rebranding chatbots and workflow automations as agentic systems. Organizations buy those products, deploy something that does not need governance, and fail to build governance infrastructure. Then they deploy something that does need it. And discover they have nothing.

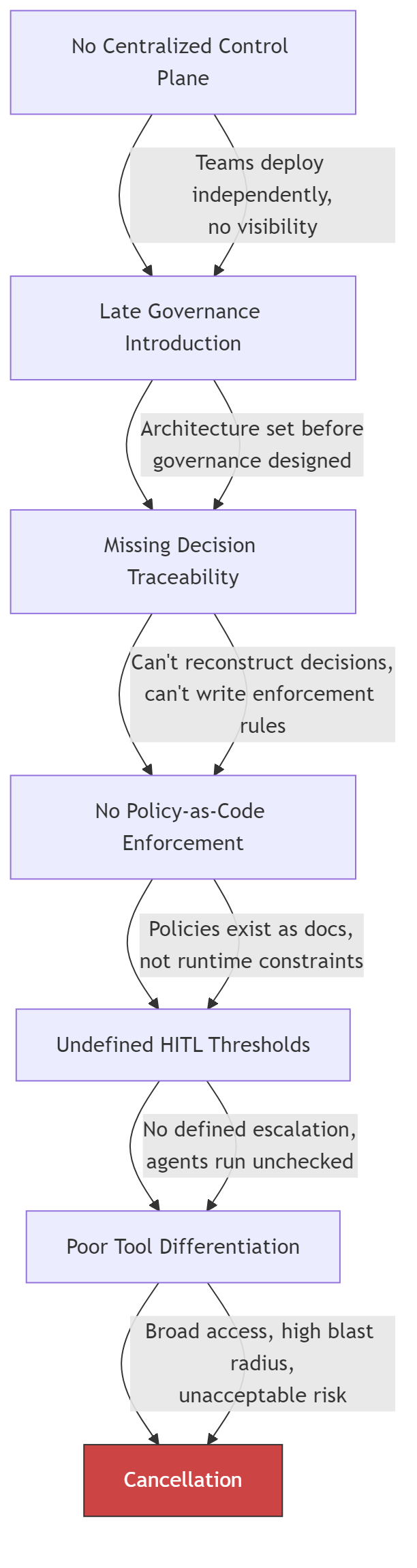

Six Failures That Compound

Accelirate analyzed agentic AI governance failures across enterprise deployments. They identified six structural problems. Every one is specific. Every one maps to a gap in the AgenticOps model.

The first failure is no centralized control plane. Teams deploy agents independently. No single system tracks which agents are running, what tools they can reach, or what decisions they make.

The second failure is late governance introduction. Teams build the agent, prove the demo, get funding, start scaling, then discover they need governance. By that point, retrofitting containment into a running system is harder than canceling the project.

The third failure is missing decision traceability. When something goes wrong, no one can reconstruct why the agent chose what it chose. The decision chain is invisible. Debugging becomes archaeology.

The fourth failure is no policy-as-code enforcement. Governance lives in documents. “Agents should not access production data.” But those policies are not enforced by the runtime. They are suggestions. And suggestions do not constrain systems that scale without warning.

The fifth failure is undefined human-in-the-loop thresholds. Everyone agrees humans should stay in the loop. No one defines when. What confidence score triggers escalation? What cost threshold pauses execution? Without thresholds, “human in the loop” is a policy statement with no implementation.

The sixth failure is poor tool differentiation. Agents get broad access because restricting tools is harder than granting them. The result is write access where there should be read access, credentials that should not be held, network reach that is not needed.

These do not happen independently. They cascade.

Each gap makes the next one harder to close. By the time an organization reaches the sixth failure, the cost of fixing the architecture exceeds the cost of canceling the project. That is Gartner’s 40%.

The Fix Is a Mapping Problem

I want to keep it real with you. The fix is not “add governance.” That sentence is vague enough to produce nothing.

The fix is mapping each failure to the specific layer or ring that prevents it, then building that layer before you need it.

| Governance Failure | AgenticOps Layer | Containment Ring | What Is Missing |

|---|---|---|---|

| No centralized control plane | Runtime Governance (L5) | Ring 2: Constrain Environment | A single registry for all running agents |

| Late governance introduction | Intent (L1) | Ring 1: Constrain Inputs | Governance requirements in the design, not the incident retro |

| Missing decision traceability | Evaluation (L3) | Ring 3: Validate Outputs | Structured logs with reasoning traces and state changes |

| No policy-as-code enforcement | Agent Generation (L2) | Ring 1: Constrain Inputs | Declarative policy files the runtime enforces |

| Undefined HITL thresholds | Promotion (L4) | Ring 4: Gate Promotion | Numeric thresholds for confidence, cost, and error rate |

| Poor tool differentiation | Agent Generation (L2) | Ring 1: Constrain Inputs | Per-agent tool allowlists, not shared credentials |

No driver is exotic. No driver requires a novel solution.

The structural components already exist in every governed agentic system that has reached production.

Stripe’s Minions architecture has all six solved. Devboxes are the control plane and environment constraint. Blueprints define governance at the intent layer. Every tool invocation is logged. Policy enforcement is structural, not advisory. Retry caps define explicit HITL thresholds. Toolshed provides curated, scoped tool access.

Stripe is not in the 40%. The structural reason is visible in the architecture.

Now look at the gap as a shape.

Every project in that gap is running agents without the infrastructure to govern them. Some will build the infrastructure before it matters. Most will not.

The Diagnostic

Map your project against these six questions. Where you have gaps, you have cancellation risk.

| Requirement | Question | Pass Criteria |

|---|---|---|

| Centralized control plane | Can you list every agent running in your organization right now? | Single registry with agent identity, status, tool access, and session history |

| Early governance | Were governance requirements defined before the first agent was deployed? | Containment boundaries in the design document, not the incident retrospective |

| Decision traceability | Can you reconstruct why an agent made a specific decision last Tuesday? | Structured logs with reasoning traces, tool call sequences, and state transitions |

| Policy-as-code | Are your agent policies enforced by the runtime or written in a wiki? | Declarative policy files that the agent cannot override or modify |

| HITL thresholds | At what confidence score does your agent escalate to a human? | Numeric thresholds for escalation, pause, and termination, enforced automatically |

| Tool scoping | Does each agent have access only to the tools required for its task? | Per-agent tool allowlists, not shared credentials with broad access |

Three or more gaps is a project at structural risk.

Five or more gaps matches the profile of the 40% that Gartner predicts will be canceled.

Six gaps is a demo, not a deployment. And that’s the way it is.

Let’s talk about it.

What AgenticOps Actually Looks Like

Autonomy Without Infrastructure Is Just a Demo

Gartner: More Than 40% of Agentic AI Projects to Be Canceled by 2027 (Gartner Symposium/ITxpo 2025)

Accelirate: Agentic AI Governance Challenges and Solutions (accelirate.com)