How Agents Stay in Bounds

The last post defined AgenticOps. Six layers from intent to knowledge compression. But I left the hardest question unanswered: how do you actually keep agents inside their boundaries?

The honest answer is you can’t guarantee it. Not the way you can prove a compiler respects a type system. A stochastic system doesn’t make promises. It makes outputs.

So the strategy isn’t trust. It’s defense in depth. Multiple layers of deterministic containment around a probabilistic process, so that no single failure leads to unbounded impact.

Boundaries Are Infrastructure, Not Policy

This is where AgenticOps stops being philosophy and becomes architecture.

The primitive is simple. One sandboxed container per agent slice. Docker Sandbox. Constrained file permissions. Whitelisted network access. A schema-constrained context mounted in at startup. The agent lives in that box. Everything it needs is in there. Everything it doesn’t need isn’t reachable.

That’s not a metaphor. The agent literally cannot write files outside its slice. It cannot reach endpoints that aren’t on the whitelist. It cannot promote its own changes up the chain. There’s no exception path, no override flag, no escape hatch.

The containment isn’t a rule the agent follows. It’s a wall the agent cannot see past.

I’ve said for years that in systems, people aren’t the problem, processes are. Most failures aren’t malicious. They’re structural. The system made the bad outcome easy and the good outcome hard. Humans being humans, they took the path of least resistance.

With stochastic agents, it’s the same insight one layer deeper. The problem isn’t the agent. The problem is the infrastructure that gives the agent room to fail in ways you can’t predict or recover from.

You can’t reason about agent output the way you reason about deterministic code. You can’t read the function and know what it’ll return. You can test it, eval it, constrain its inputs. But you cannot trust it the way you trust a compiler. It’s stochastic all the way down.

If you’re relying on the agent to follow a policy, you’re trusting a stochastic system to be trustworthy. That’s not a risk you’re managing. That’s a risk you’re ignoring.

A policy says don’t do this. Infrastructure says you can’t. When you’re governing stochastic systems, you want the second one everywhere you can get it. Policies are for humans who can read them. Infrastructure is for systems that can’t.

The Context Window Is a Containment Boundary

There are two actors in this model. An orchestrator that manages the lifecycle and an execution agent that does the work.

The orchestrator decides what the agent reasons about. If an agent is working on an order service slice, the orchestrator loads the order contract, the relevant state machine definition, the test expectations, and the bounded interface definitions for adjacent services into the agent’s context.

That’s it. Not the user service internals. Not the payment provider credentials. Not the global config.

The agent doesn’t decide what’s in scope. The orchestrator does. The context window becomes a containment boundary. The agent literally cannot reason about what it wasn’t given.

That gives you something powerful: the blast radius of a misbehaving agent is bounded by what the orchestrator mounted, not by the agent’s judgment. A bad output can only be as wrong as the scope allows.

If the scope is one contract and one set of tests, the worst case is a failed evaluation. If the scope is the entire system, the worst case is an invisible invariant violation three services deep. Scope is risk management.
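Here is a minimal sketch of that idea, assuming the orchestrator holds documents in a plain dictionary and a scope is just a named set of document ids. `SliceScope`, `build_context`, and the document ids are hypothetical names for illustration, not part of any real framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SliceScope:
    """What the orchestrator lets one agent reason about (hypothetical shape)."""
    name: str
    allowed_documents: frozenset[str]


def build_context(scope: SliceScope, repository_docs: dict[str, str]) -> dict[str, str]:
    """Return only the documents the scope names; fail closed if one is missing."""
    missing = scope.allowed_documents - set(repository_docs)
    if missing:
        raise KeyError(f"scope {scope.name!r} names unknown documents: {sorted(missing)}")
    # Anything not listed in the scope never reaches the agent's context.
    return {doc_id: repository_docs[doc_id] for doc_id in sorted(scope.allowed_documents)}


# Illustrative data only: ids and contents are made up.
docs = {
    "order_contract": "...",
    "order_state_machine": "...",
    "payment_credentials": "...",
}
order_scope = SliceScope("orders", frozenset({"order_contract", "order_state_machine"}))
context = build_context(order_scope, docs)  # contains no payment credentials
```

The selection is an allowlist, not a filter. The agent gets what was named and nothing else, so forgetting to exclude something cannot leak it.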

Four Rings of Containment

I think about agent containment as four concentric rings. Each ring is deterministic. What’s inside them is stochastic. That asymmetry is the whole point.

Ring One: Constrain the Inputs

The agent only sees what it’s scoped to see. Typed schemas, versioned contracts, bounded context. The narrower the input scope, the smaller the space of possible outputs.

This is where most teams fail first. They hand AI an entire codebase and say “fix it,” then wonder why the output is unpredictable. An agent working on a single slice with a single contract has a fundamentally different risk profile than an agent with access to everything.

Ring Two: Constrain the Environment

The sandbox. No network access outside defined endpoints. Resource limits on CPU and memory. And a specific filesystem constraint that matters more than the others: the agent can read the broader system but can only write to the slice.

Docker volume mounts make this concrete. The repository mounts read-only. The slice directory mounts read-write. The operating system enforces it. The agent can see everything it needs to compile and resolve dependencies. It cannot modify anything outside its scope.

That distinction matters. The containment is write-scope, not visibility-scope. An agent that can only see its slice can’t build, can’t run tests, can’t verify its own work against real dependencies.

An agent that can see the system but only write to its slice can do all of those things. And the blast radius is still bounded by what it can change, not by what it can generate internally.

Builds produce artifacts outside the slice. Compiled outputs, temp files, package caches. Those writes happen in ephemeral directories that get discarded when the container stops. The only thing that survives the sandbox is the diff the orchestrator extracts from the slice directory.
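A sketch of what Ring Two could look like as a plain `docker run` invocation, assembled in Python. The flags are standard `docker run` options. The image name, paths, and resource limits are assumptions I picked for illustration. Note that `--network none` blocks all networking, which is stricter than a whitelist. Allowing specific endpoints would need a proxy or firewall that isn't shown here.

```python
from pathlib import PurePosixPath


def sandbox_command(repo_dir: str, slice_rel: str, image: str, agent_cmd: list[str]) -> list[str]:
    """Build a docker run argv: repository read-only, slice read-write."""
    repo = PurePosixPath(repo_dir)
    slice_path = PurePosixPath(slice_rel)
    if slice_path.is_absolute() or ".." in slice_path.parts:
        raise ValueError("slice path must be relative and stay inside the repository")
    return [
        "docker", "run", "--rm",
        "--network", "none",       # no network at all in this sketch
        "--read-only",             # container root filesystem is immutable
        "--tmpfs", "/tmp",         # ephemeral scratch space, gone when the container stops
        "--memory", "2g",          # assumed limit
        "--cpus", "2",             # assumed limit
        "--volume", f"{repo}:/workspace:ro",
        "--volume", f"{repo / slice_path}:/workspace/{slice_path}:rw",
        "--workdir", "/workspace",
        image,
        *agent_cmd,
    ]


# Hypothetical paths and image name.
cmd = sandbox_command("/srv/repo", "services/orders", "agent-image:latest", ["run-agent"])
```

The nested mount is the whole trick. The slice directory is mounted a second time, read-write, on top of the read-only repository. Build caches would have to be pointed at `/tmp` for this particular setup to compile anything.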

Ring Three: Validate the Outputs

This is the evaluation layer. Before anything leaves the agent loop, it passes through deterministic gates. But not all gates are the same.

Static gates operate on files directly. Linting, AST validation, schema diff checks, security scanning. These work on the slice alone. They don’t need the broader system. They catch structural violations before anything compiles.
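As a rough illustration of a static gate, here is a sketch that uses Python's standard `ast` module on source text alone. It assumes the slice contains Python files. The `GateResult` shape and the forbidden-call policy are hypothetical examples, not a recommended rule set.

```python
import ast
from dataclasses import dataclass


@dataclass(frozen=True)
class GateResult:
    gate: str
    passed: bool
    detail: str = ""


FORBIDDEN_CALLS = {"eval", "exec"}  # illustrative policy only


def syntax_gate(source: str) -> GateResult:
    """Pass only if the source parses."""
    try:
        ast.parse(source)
    except SyntaxError as err:
        return GateResult("syntax", False, f"line {err.lineno}: {err.msg}")
    return GateResult("syntax", True)


def forbidden_call_gate(source: str) -> GateResult:
    """Fail on a direct call to any name in FORBIDDEN_CALLS."""
    for node in ast.walk(ast.parse(source)):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id in FORBIDDEN_CALLS
        ):
            return GateResult("forbidden-calls", False, f"line {node.lineno}: {node.func.id}()")
    return GateResult("forbidden-calls", True)


def run_static_gates(source: str) -> list[GateResult]:
    """Run gates in order; skip AST checks when the file does not parse."""
    results = [syntax_gate(source)]
    if results[0].passed:
        results.append(forbidden_call_gate(source))
    return results
```

Nothing here needs the rest of the system. The same input always yields the same verdict, which is the property that matters.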

Build and test gates need more context. Contract tests, integration tests against bounded interfaces, compilation, snapshot comparison of API outputs. These work because Ring Two mounted the broader system as read-only.

The agent can build and test against the real dependency graph. It just can’t modify anything outside its scope.

The containment that matters here is not what the evaluation can see. It’s what survives extraction. The orchestrator collects only the diff from the slice directory. Build artifacts, test outputs, intermediate files, all discarded.

The evaluation runs against the full mounted context. The promotion pipeline sees only the slice-scoped changes.
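One way extraction could work, sketched under the assumption that the orchestrator snapshots the slice directory before and after the agent runs. `snapshot` and `slice_diff` are hypothetical helpers. A real setup might lean on version control tooling outside the sandbox instead.

```python
import hashlib
from pathlib import Path


def snapshot(slice_dir: Path) -> dict[str, str]:
    """Map each file's slice-relative path to a SHA-256 of its contents."""
    return {
        path.relative_to(slice_dir).as_posix(): hashlib.sha256(path.read_bytes()).hexdigest()
        for path in sorted(slice_dir.rglob("*"))
        if path.is_file()
    }


def slice_diff(before: dict[str, str], after: dict[str, str]) -> dict[str, list[str]]:
    """Describe what changed inside the slice, and nothing else."""
    return {
        "added": sorted(after.keys() - before.keys()),
        "removed": sorted(before.keys() - after.keys()),
        "modified": sorted(p for p in before.keys() & after.keys() if before[p] != after[p]),
    }
```

Because both snapshots only walk the slice directory, build artifacts and temp files written elsewhere never show up in the result.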

That’s the honest version of “validate the outputs.” Some checks work on isolated files. Some checks need the system. Both run inside the sandbox. Neither requires the agent to have write access beyond the slice.

Ring Four: Gate the Promotion

The agent loop cannot self-promote. Period. Even if an agent produces something that passes every automated check, it does not reach production without human approval.

But what does the human actually review? Not the code. The evaluation pipeline already ran. What lands in the review queue is the evidence.

First, the human reviews the evaluation results. Which tests passed. Which contracts held. What the behavior diff looks like. API snapshots before and after. UI snapshots before and after. The evidence package tells you whether the system behaves as expected without reading a single line of generated code.

Second, the human checks scope. Did the agent touch only what it was supposed to touch? If the slice was the order service and the diff includes changes to the payment service, that’s a boundary violation.

You don’t need to read the implementation to catch that. You just need to see which files changed and whether those files belong to the slice.

Third, the human checks intent alignment. Does the behavior change match what was requested? Not “is the code clean” but “does the system do what I asked it to do.” That’s a contract question, not a code quality question.

Fourth, the human checks what machines can’t. Business judgment calls. Edge cases that require domain knowledge. Whether the thing that technically passes all gates is actually what a customer should experience. This is where human reasoning earns its place in the loop.

Fifth, the human verifies the running system. Deploy to a preview environment and test against the acceptance criteria. Does the change operate as expected when a real user touches it?

This is QA. It always was. The difference is the human is testing behavior that was generated and evaluated automatically, not behavior that was typed by hand.

That’s what code review becomes in an AgenticOps model. You stop reading code line by line. You start reviewing evidence, scope, intent, judgment, and behavior. The machines verify implementation. The human verifies outcomes.
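The scope check in that list is mechanical enough to sketch. This assumes changed files arrive as repository-relative POSIX paths. The function name and the example paths are hypothetical.

```python
from pathlib import PurePosixPath


def scope_violations(changed_files: list[str], slice_root: str) -> list[str]:
    """Return every changed path that does not live under the slice root."""
    root = PurePosixPath(slice_root)
    violations = []
    for name in changed_files:
        path = PurePosixPath(name)
        # Absolute paths and ".." segments are treated as escapes outright.
        escapes = path.is_absolute() or ".." in path.parts
        if escapes or not path.is_relative_to(root):
            violations.append(name)
    return violations


changed = ["services/orders/api.py", "services/payments/client.py"]
flagged = scope_violations(changed, "services/orders")
```

The comparison is by path component, not by string prefix, so a sibling directory like `services/orders-v2` does not slip through as part of `services/orders`.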

Over time, as confidence grows, you might loosen this for certain categories of change. A low-risk schema migration that passes every gate, for example. But the default posture is closed. You earn openness through evidence.
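A default-closed posture might be expressed like this. It is a sketch with hypothetical names. The category labels and the three routes are my assumptions about how a team could encode the policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Change:
    category: str
    gates_passed: bool
    scope_clean: bool


# Closed by default: no category skips human review until someone adds it here.
AUTO_PROMOTE_CATEGORIES: frozenset[str] = frozenset()


def promotion_route(change: Change, auto_categories: frozenset[str] = AUTO_PROMOTE_CATEGORIES) -> str:
    """Decide where a change goes after evaluation."""
    if not (change.gates_passed and change.scope_clean):
        return "reject"
    if change.category in auto_categories:
        return "auto-promote"
    return "human-review"
```

Loosening the policy is then an explicit, reviewable change to one set, for example `frozenset({"low-risk-schema-migration"})`, not a judgment made inside the agent loop.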

Small Slices Make Containment Practical

There’s a principle underneath all four rings that makes them work. Scope the work small enough that boundary violations are obvious.

Small slices aren’t just a project management preference. They’re a containment strategy. The smaller the scope, the more deterministic the boundary, the more meaningful the evaluation, and the lower the stakes of getting it wrong.

What the Stack Looks Like

Put it all together and the concrete architecture looks like this.

The sequence in practice:

- The orchestrator creates the slice definition: contract, schema, test expectations, invariant list, and interface definitions for adjacent services.

- The orchestrator mounts the full repository read-only and the slice directory read-write into a sandboxed Docker container. No git CLI. No access to the remote repository. The agent can resolve dependencies and compile against the real system. It can only modify files in its slice.

- The execution agent generates against that context. Plans, scaffolds, implements, and refactors, all inside the sandbox. It reads broadly and writes narrowly.

- The evaluation pipeline runs inside the same sandbox. Static checks validate the slice files directly. Build and test checks compile and run against the full mounted context. Both enforce gates before anything leaves the container.

- If the output passes all gates, the orchestrator collects the diff, creates a branch, commits, and promotes to a human review queue with the evidence attached.

- If it does not pass, it loops back to the agent or fails out.
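The steps above can be sketched as a small loop. This assumes generation and evaluation are injected as plain callables, so the sketch runs without a model or a container. `run_slice`, `Evaluation`, and the status strings are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Evaluation:
    passed: bool
    evidence: dict[str, str]


def run_slice(
    generate: Callable[[str], str],
    evaluate: Callable[[str], Evaluation],
    max_attempts: int = 3,
) -> dict[str, object]:
    """Generate, evaluate, then queue for review or fail out after max_attempts."""
    feedback = ""
    for attempt in range(1, max_attempts + 1):
        candidate = generate(feedback)   # stochastic step, runs inside the sandbox
        result = evaluate(candidate)     # deterministic gates
        if result.passed:
            # The orchestrator, not the agent, hands the diff and evidence to humans.
            return {
                "status": "queued-for-review",
                "attempts": attempt,
                "diff": candidate,
                "evidence": result.evidence,
            }
        feedback = result.evidence.get("failure", "")
    no_diff: Optional[str] = None
    return {"status": "failed-out", "attempts": max_attempts, "diff": no_diff, "evidence": {}}
```

The loop has exactly two exits, a review queue or a failure. There is no branch where the agent's output reaches anything else.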

The execution agent never touches version control. Git operations are promotion, and promotion is outside the agent loop. The orchestrator handles branching, committing, and creating pull requests. The agent handles files.

The human never sees anything that didn’t survive the sandbox. The system never executes anything the human didn’t approve. The agent never touches anything outside its slice.

Anyone who has worked with parallel agent architectures knows this pattern is already emerging. Multiple instances against isolated issue slices, each with their own bounded context and evaluation gate.

I hope to build and experiment with this as we all learn to operate in our new AI reality. I plan on posting my results and findings in a new “AgenticOps Applied” series to share my experience.

Deterministic Boundaries Around Stochastic Processes

That’s the core design principle. Every previous abstraction step in programming was deterministic all the way down. This one isn’t. But it doesn’t need to be, as long as the containment layer is.

The agent is probabilistic. The sandbox is not. The evaluation is not. The promotion gate is not. The runtime telemetry is not. The human review is not.

The only thing that isn’t deterministic is the agent’s output. Everything else is a deterministic process that either makes it impossible for the agent to misbehave or makes it easy to detect when it does.

You don’t trust the agent to stay in bounds. You make it structurally impossible, or at minimum structurally detectable, when it doesn’t. And you scope the work small enough that detection is meaningful.

That’s how agents stay in bounds. Not by being trustworthy. By being contained.

Let’s talk about it.

Previous: [What AgenticOps Actually Looks Like]

What AgenticOps Actually Looks Like

Total understanding is a myth. Cheap generation without governance creates invisible debt. Those were the first two claims.

Now it’s time to make AgenticOps concrete. Not a vibe. Not “AI but responsibly.” A governance operating model for AI-amplified system production.

The Problem Is We’re Reviewing the Wrong Thing

AI can generate entire services, DTO layers, migrations, integration adapters, test scaffolding, refactors across modules. Generation bandwidth has exploded. Human review has not. And it won’t. Human review doesn’t scale linearly with generation speed.

That pain is real. You hear it even from people leading frontier AI labs. The bottleneck is no longer “who can write the code.” It’s “who can review all this safely.”

I believe the deeper issue is that we’re reviewing the wrong thing. We’re reviewing lines of code. What we should be reviewing are outcomes. Does it satisfy the contract, pass the tests, respect the boundaries? AgenticOps makes structural review the default, not the exception.

The Failure Mode

The obvious failures aren’t the real danger. The real danger is slow structural drift. Behavior changing without anyone realizing the invariants were never encoded. Contract drift across services that only surfaces when two teams try to integrate. Feature interactions no one modeled because no one knew the features existed.

None of this announces itself. It accumulates. That’s the environment AgenticOps is designed for.

What Success Looks Like

Success in AgenticOps looks like this: humans define what the system must do and what must never break. Agents generate implementation inside explicit boundaries. Every change is evaluated automatically before promotion.

Runtime behavior is observable and reversible. Surface area growth does not increase risk proportionally.

Humans stop reviewing keystrokes. Instead they review contracts, invariants, risk surfaces, behavior diffs, telemetry, and business outcomes. Machines review implementation.

The Non-Negotiables

AgenticOps asserts a few invariants of its own. No generation without defined contracts. No promotion without evaluation. No runtime without observability. No agent autonomy without bounded scope. No hidden state transitions. No change without containment.

These are structural guarantees. They replace “LGTM” and rubber-stamp reviews with real safety nets. They make it harder to accidentally introduce systemic risk through sloppy review.
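To make "structural" concrete, here is a sketch that treats four of those invariants as checks on a change record. The record fields are hypothetical. How each flag gets set is the hard part, and it isn't shown.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ChangeRecord:
    has_contract: bool
    evaluated: bool
    observable: bool
    scope_bounded: bool


# Each rule pairs the wording from the list with a predicate that must hold.
NON_NEGOTIABLES: dict[str, Callable[[ChangeRecord], bool]] = {
    "no generation without defined contracts": lambda c: c.has_contract,
    "no promotion without evaluation": lambda c: c.evaluated,
    "no runtime without observability": lambda c: c.observable,
    "no agent autonomy without bounded scope": lambda c: c.scope_bounded,
}


def violated_rules(change: ChangeRecord) -> list[str]:
    """Return the rules this change breaks, in declaration order."""
    return [rule for rule, holds in NON_NEGOTIABLES.items() if not holds(change)]
```

A pipeline that refuses to proceed while `violated_rules` is non-empty enforces the rule without anyone having to remember it.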

The Layers

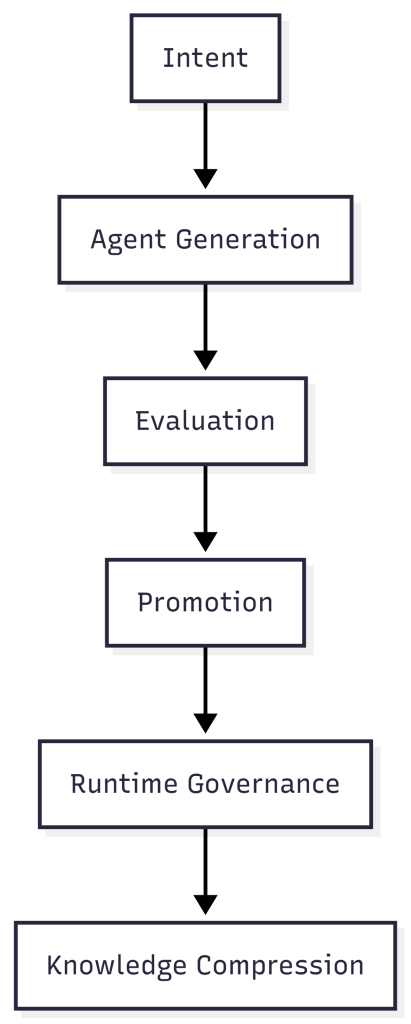

I think about AgenticOps as six layers. They build on each other.

Intent is defined by humans. System purpose, value flow, state machines, invariants, constraints, risk tolerance. This is not code. This is the contract space, the thing that must exist before any agent generates anything.

Intent is the only thing that survives. Implementations get replaced. There may be 100 ways to do a thing, but intent persists.

Agent generation is where agents plan, scaffold, implement, and refactor. But they only work inside typed schemas, versioned contracts, bounded environments, and isolated slices (a slice is an end-to-end unit of work that delivers usable value). No free-roaming generation.

Stochastic output is fine as long as it’s contained. The boundaries are what make it safe.

Evaluation happens before anything gets promoted. Contract tests, integration tests, property-based tests, static analysis, security checks, policy enforcement, snapshot diffs of UI and API outputs.

We don’t scale review. We scale evidence. Humans don’t inspect 1,000 lines of code. They inspect whether behavior is expected.

Promotion is the most important architectural decision in AgenticOps. The agent loop cannot self-promote. Changes move to a human approval queue where humans review what changed in behavior, not what changed in code.

Governance sits outside the agent loop, not inside it. Self-promotion is where entropy becomes systemic.

Every “AI automation” narrative that gets sloppy gets sloppy at the boundary between what agents decide and what reaches production. AgenticOps draws a hard line.

Runtime governance covers what happens after deployment. Metrics, tracing, logs, SLO tracking, anomaly detection, feature flags, rollback paths. Understanding becomes observational, not memorized.

If something breaks, I don’t reread code. I interrogate behavior.

Knowledge compression means every slice of work produces artifacts. Updated documentation, system summary, state transition diagrams, dependency maps, invariant lists, change logs.

I don’t try to hold the code in my head. I hold compressed models. The system generates its own documentation as a byproduct of the governance process, not as an afterthought someone writes six months later.

The Maturity Model

AgenticOps isn’t binary. It evolves.

- Level 0 is manual coding. Humans write and review everything. This is where most of the industry lived for decades.

- Level 1 is AI-assisted coding. AI generates code. Humans still review line by line. This is where most teams are right now, and it’s where the pain is sharpest. Generation speed outpaces review capacity.

- Level 2 is contract-first generation. Humans define contracts. AI implements against them. Tests gate promotion. This is the minimum viable AgenticOps.

- Level 3 is governed agent loops. Slice queues, evaluation services, approval gates, containment enforced structurally. The governance isn’t a process someone follows. It’s built into the system.

- Level 4 is observational governance. Runtime telemetry feeds back into planning and constraint refinement. The system learns from its own behavior in production.

- Level 5 is adaptive governance. The system proposes constraint improvements within defined boundaries. Humans approve or reject. The governance loop itself becomes partially automated.

I suspect most teams today are somewhere between Level 1 and Level 2. Very few are at Level 3. That’s the gap.

The Model

Humans own outcomes. Agents produce implementation. The system enforces containment.

That’s AgenticOps. Six layers. Six maturity levels, Level 0 through Level 5. A structural answer to how governance scales alongside generation.

Next, the hardest part. How you actually keep agents inside their boundaries when the thing generating the code is fundamentally probabilistic.

Let’s talk about it.

Previous: [Most Software Is Just CRUD. That’s Not the Problem.]

Next: [How Agents Stay in Bounds]