I’ve Never Fully Understood the Systems I Work In. AI Is Making That Worse.

I don’t know how many systems I’ve worked in without fully understanding how they work.

I’ve debugged production issues in codebases I’d never seen before. I’ve added features to systems that were built years before I showed up. I’ve built systems knowing how they were being built but not why.

No documentation. No architecture diagrams. No one left on the team who could explain why that weird abstraction exists or what constraints shaped the original design. No context on the intent behind the technical debt I was inheriting. No explanation for why the system was overly complex.

I have built and maintained small systems, massive systems at scale, and everything in between. I rarely, if ever, had a complete understanding of any of them. I couldn’t hold them in my head. I couldn’t walk through them class by class, function by function, or explain them end to end with any real confidence.

Not because of some personal failing. Because complex systems are not memorizable. They never were.

AI Expands the Surface Area

AI can produce thousands of lines of code in a day. It can scaffold entire services, generate integrations, write tests, refactor modules. The surface area of what “exists” in a codebase is exploding. I’m going to know even less than I did before about what’s in these code repositories.

So the question I keep coming back to isn’t “how do I understand everything?” That was never realistic for me. The question is, how do I operate safely and effectively in systems I don’t fully understand, especially when AI is multiplying how much code exists?

Code is accumulating faster than any human can read it, and the abstraction layer I operate in is rising with it. The problem isn’t just bigger. It’s structurally different.

Total Understanding Was Always a Myth

I could never understand a complex system end to end. Not really. Especially once it crossed a certain threshold of complexity. What I understand are abstractions: the models, flows, boundaries, and invariants.

Mechanical familiarity, reading and understanding every line, is not the same as structural comprehension. As a C# programmer you don’t read the IL the compiler emits. Not because the IL doesn’t matter, but because the compiler operates within constraints that make line-by-line review redundant. The language specification is the review.

Do we need to do a mechanical review of code generated by an agent?

A fair objection is that a compiler is deterministic. Same input, same output, every time. An agent is stochastic. The same constraints can produce structurally different code on each run. But that variance isn’t new. Put three developers in separate rooms with the same requirements and you’ll get three different implementations. Different variable names, different control flow, different abstractions. The output was never deterministic. We dealt with that variance long before AI through code review, architecture, contracts, and automated checks and tests.

The best reviewers never reviewed code by expecting a specific implementation. They reviewed structurally: does it satisfy the contract, pass the tests, respect the boundaries? But plenty of code review was mechanical. Line by line, checking syntax, naming, style, catching things a linter should catch. That worked when the volume of code roughly matched the capacity to read it.
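Here is a minimal Python sketch of what reviewing structurally can look like. Everything in it is hypothetical and invented for illustration: the `dedupe` contract, the three implementations, and the `satisfies_contract` check. The point is that the check only looks at behavior at the boundary and never at how the work is done.

```python
from typing import Callable, List

# Hypothetical contract: return the unique items of the input,
# in first-seen order, without mutating the input.
Dedupe = Callable[[List[int]], List[int]]


def dedupe_loop(items: List[int]) -> List[int]:
    seen = set()
    out = []
    for item in items:
        if item not in seen:
            seen.add(item)
            out.append(item)
    return out


def dedupe_dict(items: List[int]) -> List[int]:
    # dicts preserve insertion order in Python 3.7+
    return list(dict.fromkeys(items))


def dedupe_sorted(items: List[int]) -> List[int]:
    # Plausible-looking, but it breaks the ordering part of the contract.
    return sorted(set(items))


def satisfies_contract(impl: Dedupe) -> bool:
    """Structural review: check the contract, not the implementation."""
    cases = [[], [1], [3, 1, 3, 2, 1], [2, 2, 2]]
    for case in cases:
        original = list(case)
        result = impl(case)
        if case != original:
            return False  # input was mutated
        if len(result) != len(set(result)):
            return False  # duplicates survived
        if set(result) != set(original):
            return False  # items were lost or invented
        first_seen = [original.index(item) for item in result]
        if first_seen != sorted(first_seen):
            return False  # first-seen order was not preserved
    return True
```

Two different implementations pass the same check, and the third fails it even though it would look fine on a quick read. That is the property I want from review at agent speed.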

Agents break that balance. They produce more code than any human can efficiently read line by line. Mechanical code review doesn’t scale to agent-speed output. What replaces it isn’t less review. It’s a different kind of review. Instead of code review, maybe we call it peer review or agent-assisted review, with a focus on constraints, invariants, contracts, and structural correctness. The discipline that the best reviewers always practiced becomes the only viable approach.

What actually matters is: what value does a system produce? Who consumes it? What are the critical flows? Where are the boundaries? What must never break? How do I increase maintainability, quality, security… value?

If I can explain how value moves through a system, I’m in control of how I move and operate in that system. If I can’t, I’m guessing. And I’ve done enough guessing with enough experience to make intuition look intentional. I hallucinated long before AI.

I Had to Stop Thinking Bottom-Up

For a long time my instinct when entering a new codebase was to start reading code. File by file, class by class. It felt productive. It wasn’t. I ended up with a pile of implementation details and no mental model to hang them on. I understood How without the Why.

The Why, the business purpose a system exists to serve, is the reason I was hired. The systems I worked in existed for a reason. Understanding the services that serve the users of the system maps to that purpose. Designing, building, and maintaining services is what I do, and the Why is the reason I do it.

Understanding moved top-down. Define the North Star of the system and the purpose of its services. Map the user problem to the user experience through service interfaces and the contract for inputs and outputs.

Identify state transitions and data flows. Understand the dependencies. Clarify the invariants, the things that must always be true for the system and services to function.
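A small Python sketch of state transitions and invariants written down as code rather than carried in memory. The order lifecycle, the `Order` fields, and both invariants are assumptions made up for this example, not taken from any real system.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class OrderState(Enum):
    CREATED = "created"
    PAID = "paid"
    SHIPPED = "shipped"
    CANCELLED = "cancelled"


# Allowed state transitions: the lifecycle map in code form.
ALLOWED = {
    OrderState.CREATED: {OrderState.PAID, OrderState.CANCELLED},
    OrderState.PAID: {OrderState.SHIPPED, OrderState.CANCELLED},
    OrderState.SHIPPED: set(),
    OrderState.CANCELLED: set(),
}


@dataclass(frozen=True)
class Order:
    state: OrderState
    total_cents: int
    paid_cents: int


def check_invariants(order: Order) -> None:
    """Things that must always be true, whatever code produced the order."""
    if order.total_cents < 0:
        raise ValueError("total must never be negative")
    if order.state is OrderState.SHIPPED and order.paid_cents < order.total_cents:
        raise ValueError("an order must never ship unpaid")


def transition(
    order: Order, new_state: OrderState, paid_cents: Optional[int] = None
) -> Order:
    """Move to new_state only if the lifecycle allows it and invariants hold."""
    if new_state not in ALLOWED[order.state]:
        raise ValueError(
            f"illegal transition: {order.state.value} -> {new_state.value}"
        )
    updated = Order(
        state=new_state,
        total_cents=order.total_cents,
        paid_cents=order.paid_cents if paid_cents is None else paid_cents,
    )
    check_invariants(updated)
    return updated
```

With something like this in place, I can reason about the transition table and the invariant list first, and only then read whatever implementation sits behind them.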

Only then do I care about how specific classes or functions are implemented.

If I can’t sketch a system or service on a whiteboard in five minutes, I don’t understand it yet. Doesn’t matter how many files I’ve written or read. I am hired to support the Why above the code.

With AI Agents, My Role Changes

Today, I’m not the typist, the writer of code, who focuses on the How. I’m the operator of AI agents. I deliver the Why by designing and evaluating the How driven by agents.

Uncle Bob Martin made a sharp observation in his book We, Programmers. He traces how the word “code” comes from Grace Hopper’s team programming the Harvard Mark I. “Code” referred to the numbers they wrote on paper representing hole positions on 24-bit paper tape.

Hopper spent the rest of her career trying to get away from code, trying to move toward more natural languages. Eighty years later, we still call our programs “code.” Uncle Bob calls that a reflection of our failure to meet her goal.

He frames the AI question as a binary. Is AI just the next compiler, translating higher-level code to lower-level code? Or is it what Hopper envisioned, something where prompts aren’t code at all, but natural language negotiations and the realization of Hopper’s goal?

It’s a good question. But I wonder if compiler-vs-negotiation is the real axis.

I view it more as deterministic vs stochastic.

When AI scaffolds a CRUD service from a schema, the output is predictable and verifiable. The task has a narrow solution space with deterministic input, clear schema, clear constraints, predictable output. You can inspect it and trust it roughly the way you’d trust a compiler.

When AI reasons through edge cases, infers business intent, or makes architectural judgment calls, that’s probabilistic. Ambiguous intent, competing constraints, tradeoffs that require iterative refinement. The output isn’t necessarily wrong, but it’s sampled: a next-token guess. Run it again and you might get a different answer.

And the confidence surface is invisible. There’s no compiler warning when AI makes a plausible-but-wrong architectural choice. Hallucinations don’t have an error code.

The mistake is using stochastic reasoning to produce deterministic system surfaces without verification.

A contract. An interface. A migration. A security boundary. These become load-bearing the moment they exist. The system doesn’t know or care that the thing defining its behavior was probabilistically generated. It executes it as truth.

This is the gap. AI can generate implementation, the How. What it can’t generate are constraints, architectural boundaries, risk surfaces, and operational discipline. Yes, it can write words that appear to be constraints, but I can’t delegate my responsibility for them, even if I let AI do most of the writing.

What I allow to go into production is on me, no one else. It’s on me to make sure that anything AI generates probabilistically gets verified before it hardens into something a production system treats as fact.

If AI writes 10,000 lines of code and I haven’t defined the contracts, the interfaces, the performance expectations, the security constraints, the observability requirements, and the test surfaces, then I’ve let dice rolls become load-bearing walls in the system.

AI doesn’t remove architectural responsibility. It amplifies it.

I Don’t Scale Understanding. I Scale Containment.

I’m not trying to know everything. I gave up on that a long time ago. What I’m trying to do is design systems where I don’t have to know everything.

That means clear interfaces. Explicit schemas. Strict typing. Unit tests, contract tests, integration tests, security and performance tests. Tracing and metrics. Logs that actually tell me something useful when things go sideways.

If something breaks, I don’t rely on memory. I rely on instrumentation. Understanding becomes observational, not memorized.
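As a rough illustration of observational understanding, here is a Python sketch of a decorator that emits one structured log event per call. The `traced` decorator, the `orders` logger name, and the `reserve_stock` function are all hypothetical. It uses only the standard library, and a real system would more likely lean on a dedicated tracing and metrics stack.

```python
import functools
import json
import logging
import time

logger = logging.getLogger("orders")  # hypothetical service name


def traced(operation: str):
    """Emit one structured log event per call: operation, outcome, duration."""

    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            outcome = "ok"
            try:
                return func(*args, **kwargs)
            except Exception as exc:
                outcome = f"error:{type(exc).__name__}"
                raise  # never swallow the failure, only record it
            finally:
                elapsed_ms = (time.perf_counter() - start) * 1000
                event = {
                    "operation": operation,
                    "outcome": outcome,
                    "duration_ms": round(elapsed_ms, 3),
                }
                logger.info(json.dumps(event))

        return wrapper

    return decorator


@traced("reserve_stock")
def reserve_stock(available: int, requested: int) -> int:
    if requested > available:
        raise ValueError("insufficient stock")
    return available - requested
```

The events only show up if the logger is configured at `INFO` level or lower. When something goes sideways, the log line tells me which operation failed and how, without me having to remember how `reserve_stock` is written.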

I can’t hold 200,000 lines of code in my head. But I’ll hold onto a one-page system summary, a lifecycle map, a state machine diagram, a list of invariants, a list of “what must never happen,” and a dependency diagram.

Those are the compression artifacts I’ll actually carry around. Not the implementation. The constraints that govern it.

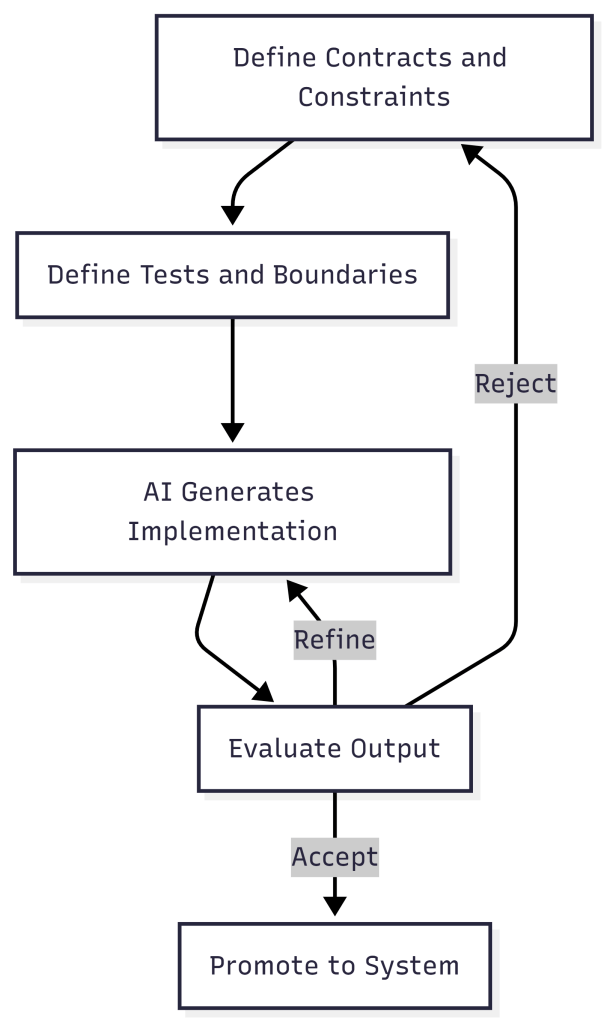

And with AI generating code faster than I can read it, constraint-first is the only sane approach. Define the contract. Define the tests. Define the boundaries. Then let AI implement. Evaluate the result. Accept, reject, or refine. Loop until convergence.

That loop is the verification layer. It catches stochastic output before it becomes deterministic system behavior. Without it, AI-generated systems turn into unbounded complexity farms.
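The loop can be sketched in a few lines of Python. This is a toy under stated assumptions: the `slugify` contract and its test cases are invented, and the three `attempt_*` functions are hand-written stand-ins for successive agent outputs, since no real agent is called here.

```python
from itertools import islice
from typing import Callable, Iterable, List, Optional

Slugify = Callable[[str], str]  # the contract's signature (hypothetical)


def acceptance_failures(impl: Slugify) -> List[str]:
    """The tests exist before any implementation does."""
    cases = {
        "Hello World": "hello-world",
        "  Trim  me ": "trim-me",
        "Already-Slugged": "already-slugged",
    }
    failures = []
    for raw, expected in cases.items():
        try:
            actual = impl(raw)
        except Exception as exc:
            failures.append(f"{raw!r} raised {type(exc).__name__}")
            continue
        if actual != expected:
            failures.append(f"{raw!r}: expected {expected!r}, got {actual!r}")
    return failures


def converge(candidates: Iterable[Slugify], max_rounds: int = 5) -> Optional[Slugify]:
    """Accept the first candidate that passes; give up after max_rounds."""
    for candidate in islice(candidates, max_rounds):
        if not acceptance_failures(candidate):
            return candidate  # accept
        # Reject. In a real loop the failures would go back to the agent.
    return None  # no convergence: nothing reaches production


# Stand-ins for successive agent attempts (hand-written, not generated).
def attempt_1(text: str) -> str:
    return text.lower()


def attempt_2(text: str) -> str:
    return text.lower().replace(" ", "-")


def attempt_3(text: str) -> str:
    return "-".join(text.lower().split())
```

Calling `converge([attempt_1, attempt_2, attempt_3])` rejects the first two attempts and accepts the third. The important design choice is the `None` branch: if nothing passes within the budget, the loop produces no result instead of a best guess.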

When I design and build, I optimize for maintainability and low mean time to value. Containment is how I get there.

The Real Shift

I believe the industry is moving from “I understand every line of code” to “I understand the boundaries, constraints, and risk surfaces.” Some might call that a loss of craftsmanship. I think it’s evolution.

The skill isn’t omniscience. It’s navigational confidence. Can I enter a foreign system, form a hypothesis, test it safely, reduce the blast radius, and improve it incrementally? If yes, I’m fine. AI doesn’t change that. It just increases the speed at which I can do it.

I don’t think software development has ever been about typing in a coding language. To me it’s about shaping constraints around state.

The job is moving up a layer of abstraction: operating teams of AI agents and governing the boundary between what AI generates probabilistically and what systems execute deterministically. The code, whether I wrote it or AI did, is an artifact of my decisions. And my decisions are what matter.

That’s the shift I’m building around. And I call it AgenticOps.

Let’s talk about it.
